Addressing AI Hallucination Risks in Health & Wellness Product Recommendations: A Comprehensive Guide

AI-powered health product recommendations are reshaping the wellness industry, but the threat of hallucinations—plausible-sounding yet false information—can erode consumer trust and invite regulatory scrutiny. Discover actionable strategies for safeguarding your brand against misinformation while building long-term credibility in a rapidly evolving landscape.

As artificial intelligence increasingly drives health and wellness product recommendations, AI hallucinations (false or misleading outputs generated by AI models) have emerged as a critical concern. With 72% of health & wellness e-commerce brands worried about AI misinformation, understanding these risks and addressing them proactively is essential to protecting both your brand and your customers.

Ready to shield your health brand from the pitfalls of AI misinformation? Schedule a personalized consultation with Hexagon’s AI marketing experts now.

[IMG: AI-powered recommendation engine suggesting health products on an e-commerce website]

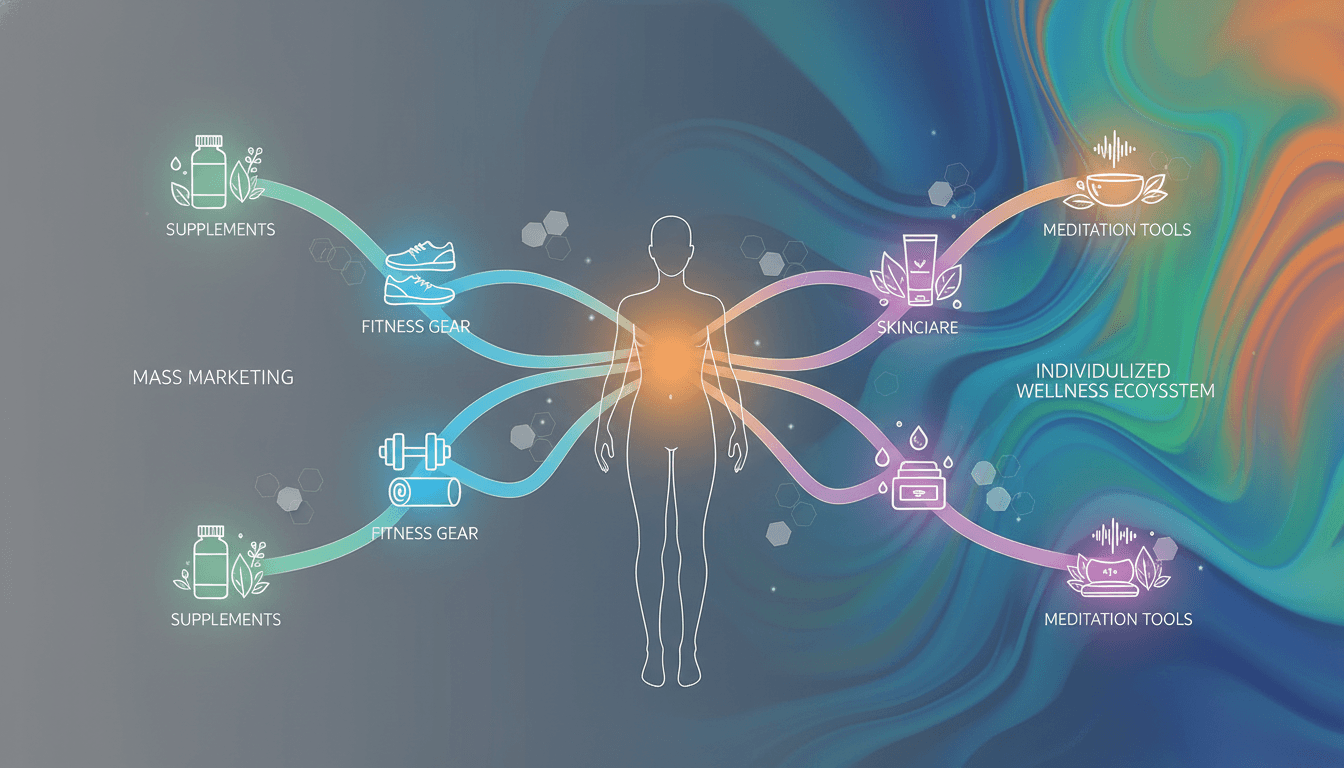

Understanding AI Hallucinations in Health Product Recommendations

AI hallucinations arise when large language models (LLMs) produce information that sounds credible but is actually false or unverified. Within health and wellness, this can take the form of inaccurate product claims, unproven health benefits, or suggestions for off-label uses. These hallucinations are particularly troubling because they can lead consumers to make unsafe choices, exposing brands to serious legal and reputational consequences.

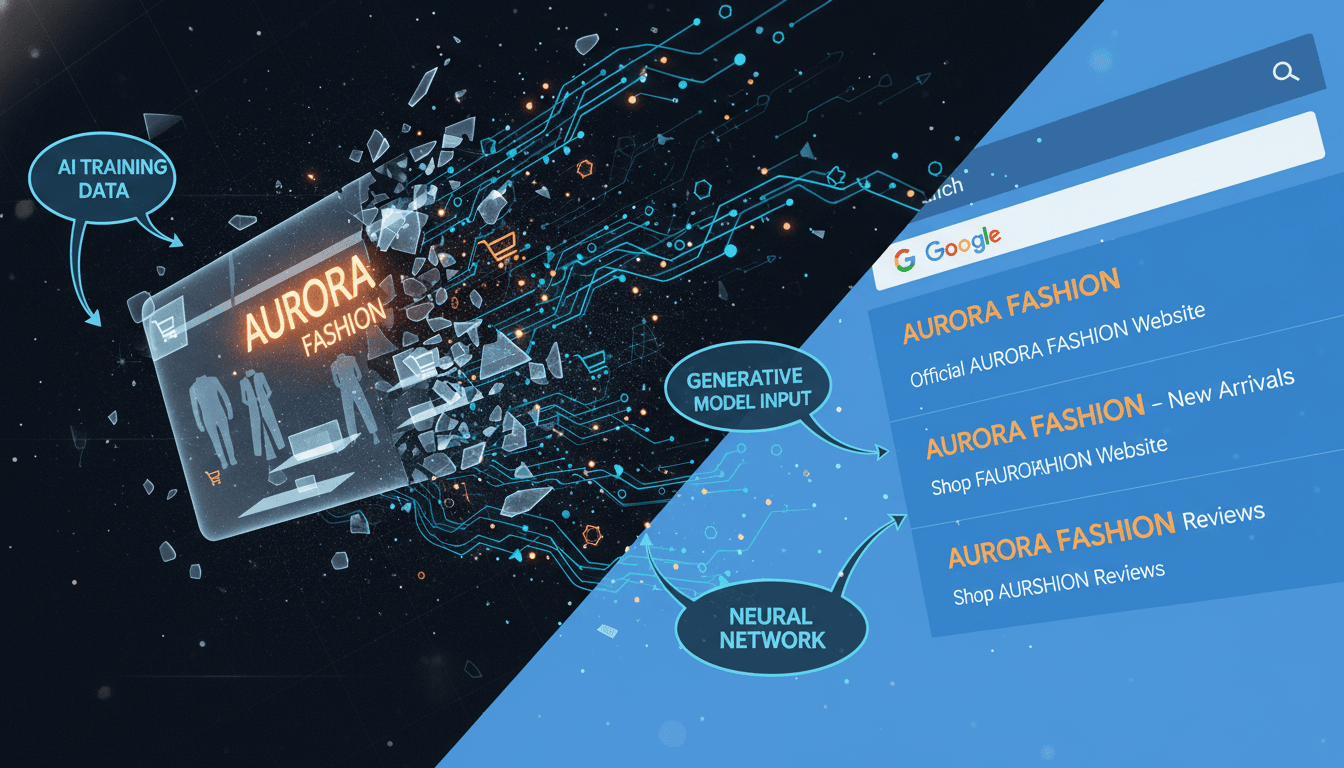

Several factors contribute to AI hallucinations:

- Training Data Gaps: AI models trained on incomplete, outdated, or biased data may compensate by generating fabricated or incorrect content.

- Model Limitations: Even advanced LLMs like GPT-4 struggle to differentiate between verified facts and widespread myths, especially in complex, regulated fields such as health.

- Prompt Ambiguity: Vague or poorly crafted prompts often cause the model to produce speculative or unsupported statements.

For instance, a baseline LLM might recommend a supplement for a medical condition without sufficient scientific evidence or proper regulatory clearance. A JAMA Network Open study found that 31% of health product recommendations from baseline LLMs contained at least one factual error or unsupported claim. This alarming error rate highlights the urgent need for robust safeguards when deploying AI in health recommendations.

Dr. Margaret Mitchell, Chief Ethics Scientist at Hugging Face, emphasizes, “AI systems must be anchored in curated, up-to-date knowledge sources—especially in health and wellness—where factual inaccuracies can cause harm and trigger legal repercussions.” Given the regulatory complexity and prevalence of unverified claims in user-generated content (WHO Digital Health Guidelines), brands must hold themselves to the highest standards of accuracy.

[IMG: Diagram showing the flow of data from training to AI recommendation, with points where hallucinations can occur]

Why AI Hallucinations Pose Risks for Health & Wellness Brands

The fallout from AI hallucinations extends far beyond isolated misleading recommendations. Regulatory bodies in both the U.S. and EU hold brands strictly accountable for the accuracy of health-related claims, regardless of whether humans or AI generate them (European Medicines Agency). This means a single AI-generated hallucination can rapidly escalate into a compliance violation, carrying significant legal and financial consequences.

Key risks include:

- Regulatory Scrutiny: The World Federation of Advertisers reports that 59% of global regulators identify AI-generated misinformation as a top compliance threat for health brands in 2025.

- Consumer Trust Erosion: According to Pew Research Center, 46% of consumers lose trust in a health brand after encountering misleading or unsupported claims.

- Brand Vulnerability: The health and wellness sector faces intense scrutiny due to the sensitive nature of its products and services.

Jessica Lee, Managing Partner at Loeb & Loeb LLP, stresses, “Brands must treat every AI-generated health claim as if authored by a human—subject to the same rigorous standards of accuracy, evidence, and regulatory oversight.” The stakes are high: AI chatbots or recommendation engines that suggest off-label uses or unapproved benefits, or that misrepresent regulated ingredients, risk severe reputational damage (FDA Advertising and Promotion Guidelines).

Brands become especially vulnerable because:

- Misinformation can prompt unsafe consumer actions, risking harm.

- Misleading claims expose brands to regulatory fines and class-action lawsuits.

- Negative media coverage can rapidly erode brand equity.

[IMG: Infographic showing the impact of AI-generated misinformation on brand reputation and compliance]

Real-World Examples of Negative Brand Impact from AI Misinformation

Real-world cases show the tangible dangers that AI hallucinations pose in health recommendations. For example, a major global e-commerce platform faced public outrage after its AI chatbot suggested supplements for treating depression, a condition that requires medical supervision. The incident triggered an immediate regulatory investigation and widespread negative press, forcing the platform to temporarily suspend its recommendation engine.

In another anonymized incident, a health supplement brand’s AI-generated product listings claimed to cure chronic illnesses. After consumer complaints, regulators launched inquiries that led to costly recalls, formal warnings, and a notable drop in monthly sales. The brand’s stock price fell, and social media sentiment shifted sharply negative.

Lessons drawn from these incidents include:

- Financial Impact: Legal expenses, lost revenue, and increased customer acquisition costs can escalate rapidly.

- Brand Equity Damage: Trust lost in health and wellness is hard to regain, given the paramount importance of consumer safety.

- Need for Proactive Management: Robust monitoring and escalation protocols must be in place before issues arise—not after.

Martin Kuppinger, Founder of KuppingerCole Analysts, sums it up: “Real-time monitoring and rapid escalation workflows are no longer optional for health brands leveraging AI—they are essential pillars of reputation management.”

[IMG: Timeline graphic showing the sequence from AI hallucination to regulatory action and brand impact]

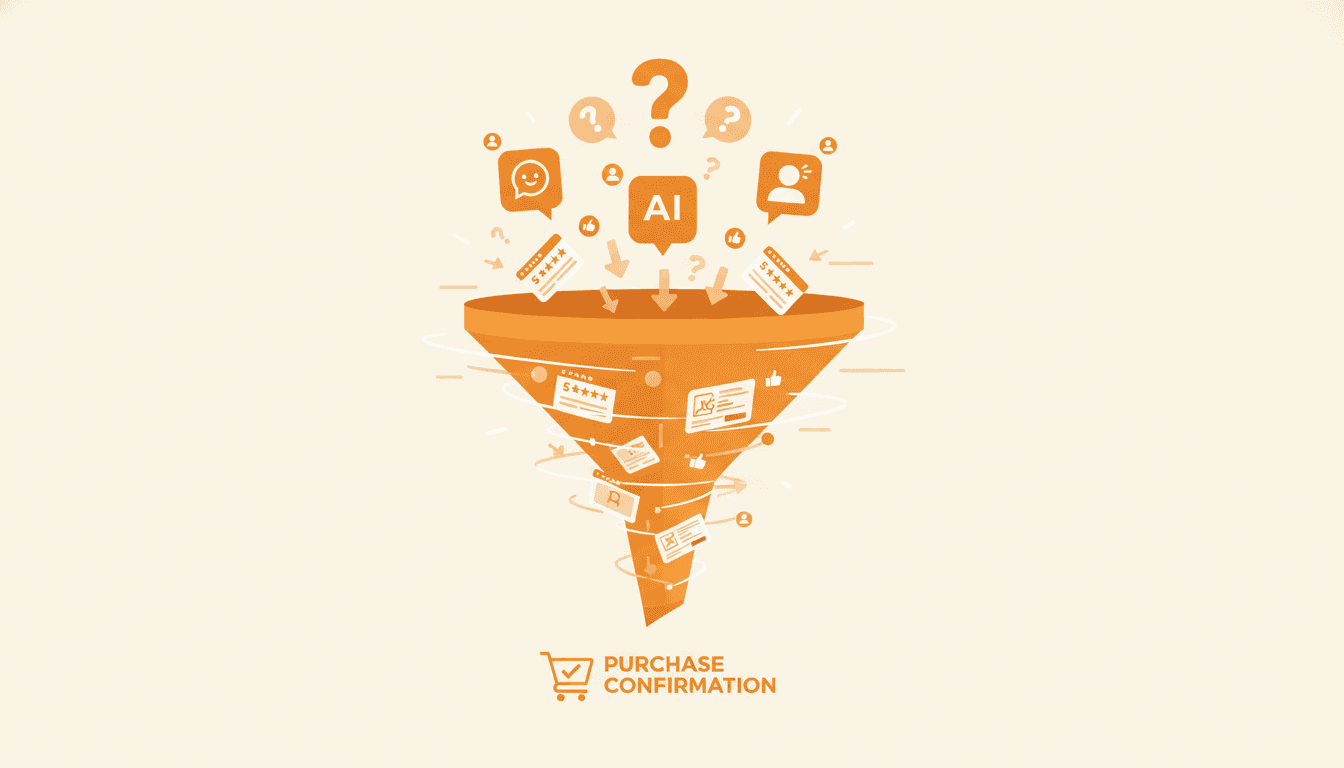

Detecting and Monitoring AI Hallucinations: Tools and Strategies

Forward-thinking health brands are adopting AI-powered monitoring tools to detect and address hallucinations in product recommendations proactively. These tools compare generated statements against verified knowledge bases and flag any that deviate from them or contain unsupported health claims. The Stanford Center for Biomedical Informatics Research reports that automated monitoring systems achieve an 87% success rate in identifying inaccurate health statements on e-commerce platforms.

A typical detection and monitoring workflow includes:

- Automated AI Scanning: Continuous review of AI-generated content against trusted databases and regulatory standards.

- Human-in-the-Loop Review: Expert reviewers assess flagged claims for medical accuracy and compliance.

- Escalation Protocols: Rapid workflows trigger corrective actions, stakeholder notifications, and documentation when misinformation is detected.
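
To make the three-stage workflow concrete, here is a minimal Python sketch of the automated scanning step. It assumes a hypothetical curated knowledge base (`APPROVED_CLAIMS`) that holds compliance-approved wording for each product, plus an illustrative list of high-risk phrases. Neither is a regulatory standard, and a production system would likely need semantic matching rather than plain substring and regex checks. The scanner checks each sentence of an AI-generated recommendation. High-risk language routes the output to escalation. Sentences without approved wording go to human review. Only fully matched outputs are approved.

```python
import re
from dataclasses import dataclass, field

# Hypothetical curated knowledge base: product ID -> compliance-approved claim wording.
APPROVED_CLAIMS = {
    "vitamin-d3": {"supports normal immune function"},
    "magnesium-glycinate": {"supports normal muscle function"},
}

# Illustrative high-risk phrases (disease treatment, off-label use); not a regulatory list.
HIGH_RISK_PATTERNS = [
    r"\bcures?\b",
    r"\btreats?\b",
    r"\bprevents?\b",
    r"\bdepression\b",
    r"\binstead of (?:your )?medication\b",
]


@dataclass
class ScanResult:
    product_id: str
    status: str  # "approved", "needs_review" (human-in-the-loop) or "escalate"
    reasons: list[str] = field(default_factory=list)


def split_sentences(text: str) -> list[str]:
    """Naive sentence splitter; adequate for short product copy."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def scan_output(product_id: str, text: str) -> ScanResult:
    """Check every sentence of an AI-generated recommendation."""
    approved = APPROVED_CLAIMS.get(product_id, set())
    high_risk, unsupported = [], []
    for sentence in split_sentences(text):
        lowered = sentence.lower()
        if any(re.search(p, lowered) for p in HIGH_RISK_PATTERNS):
            high_risk.append(sentence)       # goes straight to escalation
        elif not any(claim in lowered for claim in approved):
            unsupported.append(sentence)     # no approved wording behind it
    if high_risk:
        return ScanResult(product_id, "escalate", high_risk)
    if unsupported:
        return ScanResult(product_id, "needs_review", unsupported)
    return ScanResult(product_id, "approved")
```

Consider `scan_output("vitamin-d3", "Vitamin D3 supports normal immune function. It also boosts energy.")`. It returns a `needs_review` result that lists the second sentence, because "boosts energy" has no approved wording behind it. Any output containing "cures" is escalated immediately.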

Many brands also rely on third-party solutions that offer transparency into data sources and model update schedules—a critical factor for ongoing compliance (Forrester – Responsible AI for Consumer Brands). Dr. John Brownstein, Chief Innovation Officer at Boston Children’s Hospital, notes, “Generative AI can enhance consumer engagement, but unchecked hallucinations—especially in health recommendations—quickly undermine brand credibility and invite regulatory penalties.”

Effective monitoring strategies include:

- Deploying real-time dashboards for continuous oversight.

- Setting up alerts for high-risk or trending health topics.

- Conducting regular audits of AI outputs, particularly for new products.
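
Real-time alerts usually track a rate rather than individual flags. The Python sketch below shows one simple way to do this. It assumes an automated scanning step has already tagged each output with a health topic and a flagged or not-flagged result. The monitor keeps a sliding time window per topic and raises an alert when the share of flagged outputs crosses a threshold. The `FlagRateMonitor` name, the one-hour window, and the thresholds are hypothetical defaults to tune, not recommended values.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field


@dataclass
class FlagRateMonitor:
    """Sliding-window flag rate for one topic; alerts when the rate exceeds a threshold."""
    window_seconds: float = 3600.0   # hypothetical: look at the last hour
    threshold: float = 0.05          # hypothetical: alert above 5% flagged
    min_samples: int = 20            # avoid alerting on tiny samples
    _events: deque = field(default_factory=deque, init=False, repr=False)

    def record(self, timestamp: float, flagged: bool) -> bool:
        """Add one scanned output and return True if the topic should alert."""
        self._events.append((timestamp, flagged))
        cutoff = timestamp - self.window_seconds
        # Drop events outside the window (timestamps assumed non-decreasing).
        while self._events and self._events[0][0] < cutoff:
            self._events.popleft()
        return self.should_alert()

    def flag_rate(self) -> float:
        if not self._events:
            return 0.0
        return sum(flagged for _, flagged in self._events) / len(self._events)

    def should_alert(self) -> bool:
        return len(self._events) >= self.min_samples and self.flag_rate() > self.threshold


# One monitor per health topic; a stricter threshold for a sensitive topic (illustrative).
monitors: dict[str, FlagRateMonitor] = defaultdict(FlagRateMonitor)
monitors["mental-health"] = FlagRateMonitor(threshold=0.01)
```

In a dashboard loop, a call such as `monitors[topic].record(time.time(), flagged)` that returns `True` would trigger the escalation workflow for that topic. Sensitive topics get tighter thresholds than routine ones.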

[IMG: Screenshot of an AI monitoring dashboard showing flagged health product statements]

Best Practices for Managing AI-Generated Health Product Recommendations

Minimizing hallucinations requires a layered approach grounded in technical precision and regulatory knowledge. Prompt engineering is key: clear, specific prompts steer AI models toward more reliable, evidence-based recommendations. Research from Google shows that proactive prompt design, combined with curated, up-to-date knowledge bases, significantly lowers hallucination rates.

Brands can implement best practices such as:

- Prompt Engineering: Design prompts that demand evidence, cite sources, and avoid speculative language.

- Data Curation: Train models on rigorously vetted, current data to reduce knowledge gaps.

- Model Transparency: Require AI vendors to disclose data provenance, update cycles, and model limitations.

- Regulatory Compliance: Ensure all outputs align with global health advertising standards, including FDA and EMA guidelines.
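
The following Python sketch illustrates evidence-first prompt design by assembling a prompt from a curated knowledge base before any model is called. The `Evidence` record, the `kb-001`-style source IDs, and the rule wording are all hypothetical. What matters is the pattern: restrict the model to vetted statements, require a citation for each claim, forbid disease claims, and give the model an explicit way to decline. Because the sketch only builds prompt text, it does not depend on any particular model API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    source_id: str   # hypothetical ID in the brand's curated knowledge base
    product_id: str
    statement: str   # compliance-approved wording
    source: str      # where the statement was substantiated (label, filing, study)


RULES = (
    "You write product recommendations for a health and wellness store.\n"
    "Use ONLY the evidence listed below and cite its [source_id] after every claim.\n"
    "Never state or imply that a product diagnoses, treats, cures, or prevents a disease.\n"
    "Do not suggest uses that the evidence does not mention.\n"
    "If the evidence does not answer the question, reply exactly: INSUFFICIENT_EVIDENCE"
)


def build_prompt(question: str, product_id: str, kb: list[Evidence]) -> str:
    """Return a grounded prompt containing only evidence for the requested product."""
    relevant = [e for e in kb if e.product_id == product_id]
    if relevant:
        evidence = "\n".join(
            f"[{e.source_id}] {e.statement} (source: {e.source})" for e in relevant
        )
    else:
        evidence = "(no approved evidence available)"
    return f"{RULES}\n\nEvidence:\n{evidence}\n\nCustomer question: {question}"


# Hypothetical knowledge-base entry for illustration.
KB = [
    Evidence("kb-001", "vitamin-d3", "Vitamin D3 supports normal immune function.", "product label"),
]
```

Calling `build_prompt("Can this help my immune system?", "vitamin-d3", KB)` yields a prompt that carries only the `[kb-001]` statement. When a product has no evidence at all, it may be safer to skip the model call entirely and show a neutral message than to rely on the model to decline.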

A practical checklist includes:

- Regularly reviewing and updating training data for accuracy and compliance.

- Mandating substantiation of AI-generated claims with references to regulatory-approved sources.

- Establishing feedback mechanisms for consumers and professionals to report questionable recommendations.

- Training cross-functional teams—marketing, legal, compliance—to oversee AI outputs.
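
The substantiation item on this checklist can be enforced mechanically before anything is published. Below is a minimal Python sketch. It assumes two hypothetical conventions: outputs cite sources inline as `[source_id]` within each sentence, and compliance maintains a set of approved source IDs. Any sentence with no citation, or with a citation to an unapproved source, is returned for review.

```python
import re

CITATION = re.compile(r"\[([A-Za-z0-9_-]+)\]")  # inline citations such as [kb-001]


def unsubstantiated_sentences(output: str, approved_ids: set[str]) -> list[str]:
    """Return sentences that lack a citation to an approved source."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", output) if s.strip()]
    problems = []
    for sentence in sentences:
        cited = set(CITATION.findall(sentence))
        # Flag if nothing is cited or if any cited ID is not on the approved list.
        if not cited or not cited <= approved_ids:
            problems.append(sentence)
    return problems
```

Take `approved_ids={"kb-001"}` and the output `"Vitamin D3 supports normal immune function [kb-001]. It may also improve sleep."`. The function returns `["It may also improve sleep."]`, so only the uncited sentence goes to the cross-functional reviewers.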

Dr. Margaret Mitchell reiterates, “AI systems must be grounded in curated, up-to-date knowledge sources—especially in health and wellness—where factual errors can cause harm and trigger legal action.”

[IMG: Flowchart illustrating best practices for AI prompt engineering, data curation, and compliance review]

Building a Brand Protection Framework for AI-Driven Health Recommendations

To thrive with AI, health and wellness companies need a comprehensive brand protection framework. Transparency about AI’s role in product recommendations builds consumer trust and sets clear expectations. Brands should openly communicate how recommendations are generated and what safeguards ensure their accuracy.

Core elements of an effective brand protection framework include:

- Transparency Measures: Clearly disclose AI usage in customer-facing interfaces and explain validation processes.

- Continuous Oversight: Form cross-functional governance teams responsible for ongoing monitoring, audits, and risk management.

- Proactive Risk Management: Invest in tools and procedures that promptly identify, escalate, and remediate misinformation incidents.
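
Disclosures stay consistent more easily when they travel with the recommendation itself instead of being added by hand in each interface. The Python sketch below shows one possible payload shape. The field names and disclosure wording are illustrative assumptions. The exact customer-facing language should be set with legal and compliance teams.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class Recommendation:
    product_id: str
    text: str
    ai_generated: bool
    validation_steps: list[str] = field(default_factory=list)  # e.g. ["automated_scan", "human_review"]
    reviewed_at: Optional[str] = None  # ISO-8601 time of the last human review, if any
    sources: list[str] = field(default_factory=list)           # approved source IDs behind the text

    def disclosure(self) -> str:
        """Plain-language note shown next to the recommendation (wording is illustrative)."""
        if not self.ai_generated:
            return "Written by our product team."
        if "human_review" in self.validation_steps:
            return "Generated with AI and reviewed by our team against approved product information."
        if "automated_scan" in self.validation_steps:
            return "Generated with AI and checked automatically against approved product information."
        return "Generated with AI."

    def to_json(self) -> str:
        """Serialize for a storefront API, including the disclosure text."""
        payload = asdict(self)
        payload["disclosure"] = self.disclosure()
        return json.dumps(payload)
```

The disclosure only claims the checks that were actually recorded. `validation_steps` and `reviewed_at` also give auditors a record of how each text was validated.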

Jessica Lee from Loeb & Loeb LLP advises, “Treat every AI-generated health claim as if authored by a human—subject to the same rigorous standards of accuracy, evidence, and regulatory review.” This mindset lays the foundation for long-term consumer trust and sustainable growth in the AI-driven health sector.

[IMG: Visual representation of a brand protection framework with transparency, oversight, and risk management pillars]

Looking Ahead: Future Trends in AI Risk Management and Regulatory Expectations

Regulators worldwide are poised to tighten accountability for AI-driven health product claims. New guidelines from the FDA, EU, and other authorities place responsibility squarely on brands to ensure that every claim is accurate and substantiated, regardless of the technology that generated it.

Emerging AI risk management solutions are incorporating explainable AI, real-time compliance verification, and automated regulatory reporting. Brands adopting these advanced safeguards early will gain a competitive edge and avoid costly compliance failures.

To prepare effectively, brands should:

- Invest in next-generation monitoring and explainability tools.

- Cultivate a culture of continuous ethical AI development and improvement.

- Engage proactively with regulators and industry groups to stay ahead of evolving standards.

Early adopters will position themselves as industry leaders—trusted by consumers, regulators, and partners alike.

[IMG: Futuristic illustration of AI-powered compliance tools and global regulatory icons]

Conclusion: Safeguard Your Health Brand and Build Long-Term Trust

AI-driven health product recommendations offer immense potential—but they also introduce new risks that could jeopardize consumer safety, brand reputation, and regulatory compliance. By understanding the origins of AI hallucinations, deploying robust detection and monitoring systems, and embracing industry best practices, health and wellness brands can confidently navigate this complex landscape.

Hexagon’s AI marketing experts are committed to helping your brand develop a resilient, trustworthy, and future-ready AI strategy. Don’t wait for a crisis to impact your business—take proactive steps today to protect your brand and your customers.

Ready to safeguard your health brand from AI misinformation risks? Schedule a personalized consultation with Hexagon’s AI marketing experts now.

[IMG: Confident marketing team reviewing AI risk management dashboard in a modern office]

References:

- Deloitte – Global Consumer Pulse Survey

- JAMA Network Open – Study on AI Recommendations

- Stanford Center for Biomedical Informatics Research

- Pew Research Center – Consumer Trust in E-Commerce

- World Federation of Advertisers – AI and Brand Safety Report

- Nature – AI Hallucination

- WHO Digital Health Guidelines

- McKinsey & Company – AI Adoption in Healthcare

- FDA Advertising and Promotion Guidelines

- Google Research – Reducing Hallucinations in LLMs

- The Verge – Snap’s AI Chatbot Gave Unsafe Health Advice

- Gartner – Managing AI Risk in Consumer-Facing Applications

- European Medicines Agency – Digital Advertising Guidance

- Forrester – Responsible AI for Consumer Brands

Hexagon Team

Published March 3, 2026