The Basics of Multimodal AI Search and Its Impact on E-Commerce Product Discovery

How multimodal AI search is revolutionizing e-commerce product discovery with text, image, and voice inputs—plus actionable strategies to future-proof your brand in an AI-driven marketplace.

In an increasingly saturated digital marketplace, shoppers demand more than traditional keyword search: they want fast, precise, and intuitive product discovery. With 45% of AI-powered searches now combining text and images (Gartner), and 52% of online shoppers having used voice search for products in the past year (NPR/Edison Research), understanding multimodal AI search is no longer optional. It is essential for brands striving to differentiate themselves and boost conversions. This guide explains what multimodal AI search entails, explores its effect on e-commerce product discovery, and offers practical strategies to optimize your brand for it.

Ready to future-proof your e-commerce strategy with multimodal AI search? Book a free 30-minute consultation with Hexagon’s AI marketing experts today.

Understanding Multimodal AI Search: Definition and Fundamentals

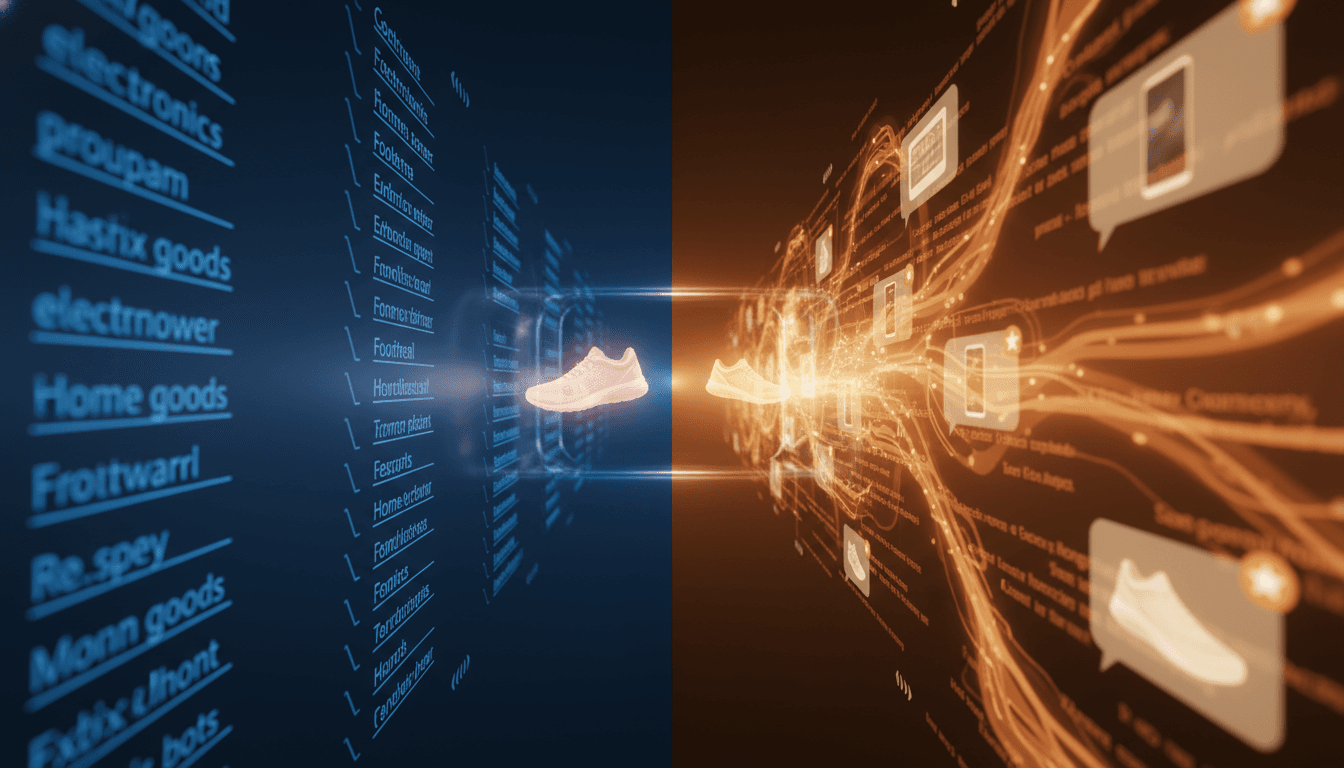

Multimodal AI search describes artificial intelligence systems that interpret and integrate multiple input types (primarily text, images, and voice) to deliver relevant, accurate search results. Unlike traditional search engines that rely solely on keywords, multimodal AI infers user intent by analyzing all of the supplied inputs together, enabling a richer and more natural product discovery experience.

Core components of multimodal AI search include:

- Text understanding: Parsing product descriptions, reviews, and search queries to extract semantic meaning.

- Image recognition: Using computer vision algorithms to analyze product photos, user-uploaded images, and visual details.

- Voice processing: Converting spoken queries into actionable data through speech recognition.

[IMG: Diagram illustrating multimodal AI combining text, image, and voice inputs]

At the heart of this technology are generative AI engines like Google Gemini and OpenAI’s ChatGPT. These models use deep learning to process and synthesize data from several modalities at once (MIT Technology Review). For instance, a shopper who uploads a photo and types or says “red sneakers like this” expects the AI to interpret the picture and the phrase together.

According to Gartner, 45% of AI-powered searches now blend text and image inputs for product discovery. AI pioneer Andrew Ng explains, “Multimodal search technologies are bridging the gap between customers’ intent and product offerings, making personalization and relevance more achievable than ever.” This means brands can now connect with consumers in the ways they naturally think and shop—whether through visuals, spoken commands, or written descriptions.

Leading AI assistants such as OpenAI’s ChatGPT, Google Gemini, and Anthropic Claude already accept multimodal input (OpenAI, Google AI Blog, Anthropic), enabling e-commerce platforms to offer consistent search experiences across channels. Visual search adoption has grown rapidly, with platforms like Pinterest and Google Lens handling billions of visual searches monthly (Pinterest Newsroom). Comscore projected that voice queries would surpass 50% of all mobile searches by 2025, further accelerating the shift toward multimodal AI.

How Multimodal AI Search Works: Behind the Technology

Behind the scenes, multimodal AI search leverages advanced models designed to process and merge different input types simultaneously. Here’s an overview of the process:

- Input capture: The system receives text queries, uploaded images, and/or voice commands.

- Preprocessing: Each input is transformed into a machine-readable format—text is tokenized, images undergo computer vision analysis, and voice is transcribed through speech recognition.

- Contextual analysis: AI models, often based on transformer architectures, extract semantic meaning and context from each modality.

- Synthesis: The system integrates insights from all modalities to build a unified understanding of the user’s intent.

- Result generation: The system retrieves and ranks the most relevant product matches from the catalog, and a generative model often adds personalized recommendations.
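The synthesis and ranking steps above can be sketched with a simple "late fusion" approach: each input is turned into a vector, the vectors are averaged with weights, and products are ranked by cosine similarity to the result. This is a minimal illustration, not how any particular engine works. The three-dimensional vectors, the SKU names, and the weights are all hypothetical stand-ins for what real text, vision, and speech models would produce.

```python
import math

# Hypothetical toy embeddings. In a real system these would come from
# text, vision, and speech models; here they are hand-written 3-d vectors.
CATALOG = {
    "brass-arc-lamp": [0.9, 0.1, 0.3],
    "chrome-desk-lamp": [0.2, 0.9, 0.1],
    "oak-side-table": [0.1, 0.2, 0.9],
}


def normalize(vec):
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else list(vec)


def fuse(embeddings, weights):
    """Synthesis step: weighted average of the per-modality unit vectors."""
    dim = len(next(iter(embeddings.values())))
    fused = [0.0] * dim
    for modality, vec in embeddings.items():
        weight = weights.get(modality, 0.0)
        for i, x in enumerate(normalize(vec)):
            fused[i] += weight * x
    return normalize(fused)


def rank(query_vec, catalog):
    """Result generation step: order products by cosine similarity."""
    scored = [
        (sum(q * p for q, p in zip(query_vec, normalize(vec))), sku)
        for sku, vec in catalog.items()
    ]
    return [sku for _, sku in sorted(scored, reverse=True)]


query = {
    "text": [0.8, 0.2, 0.2],   # stand-in for a typed description
    "image": [0.9, 0.1, 0.4],  # stand-in for an uploaded photo
    "voice": [0.7, 0.3, 0.1],  # stand-in for a transcribed spoken request
}
weights = {"text": 0.4, "image": 0.4, "voice": 0.2}  # assumed, not tuned
results = rank(fuse(query, weights), CATALOG)
print(results[0])
```

Averaging after each modality is embedded separately keeps the sketch small. Production systems may instead use a single model that embeds all inputs jointly.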

[IMG: Flowchart of multimodal AI search process from input to result]

The power of multimodal AI lies in the synergy between natural language processing (NLP), computer vision, and speech recognition. Imagine a shopper uploading a photo of a lamp, describing it as “mid-century modern,” and asking, “Do you have this in brass?” The AI seamlessly combines visual cues, descriptive text, and spoken preferences to surface the best product options.

Key technology leaders driving multimodal innovation include:

- OpenAI: ChatGPT’s multimodal features integrate text and image inputs fluidly (OpenAI).

- Google: Gemini and Google Lens handle complex multimodal queries spanning shopping and visual search (Google AI Blog).

- Anthropic: Claude accepts text and image inputs in the same request (Anthropic).

Generative engines apply deep learning to understand context across modalities, enabling nuanced and accurate product recommendations. Sundar Pichai, CEO of Google, emphasizes, “Multimodal AI search is redefining digital commerce by allowing users to find products the way they naturally think—through a combination of images, voice, and text.” This unified approach significantly reduces friction in the shopping journey, helping consumers find products that match complex or difficult-to-describe needs (Deloitte).

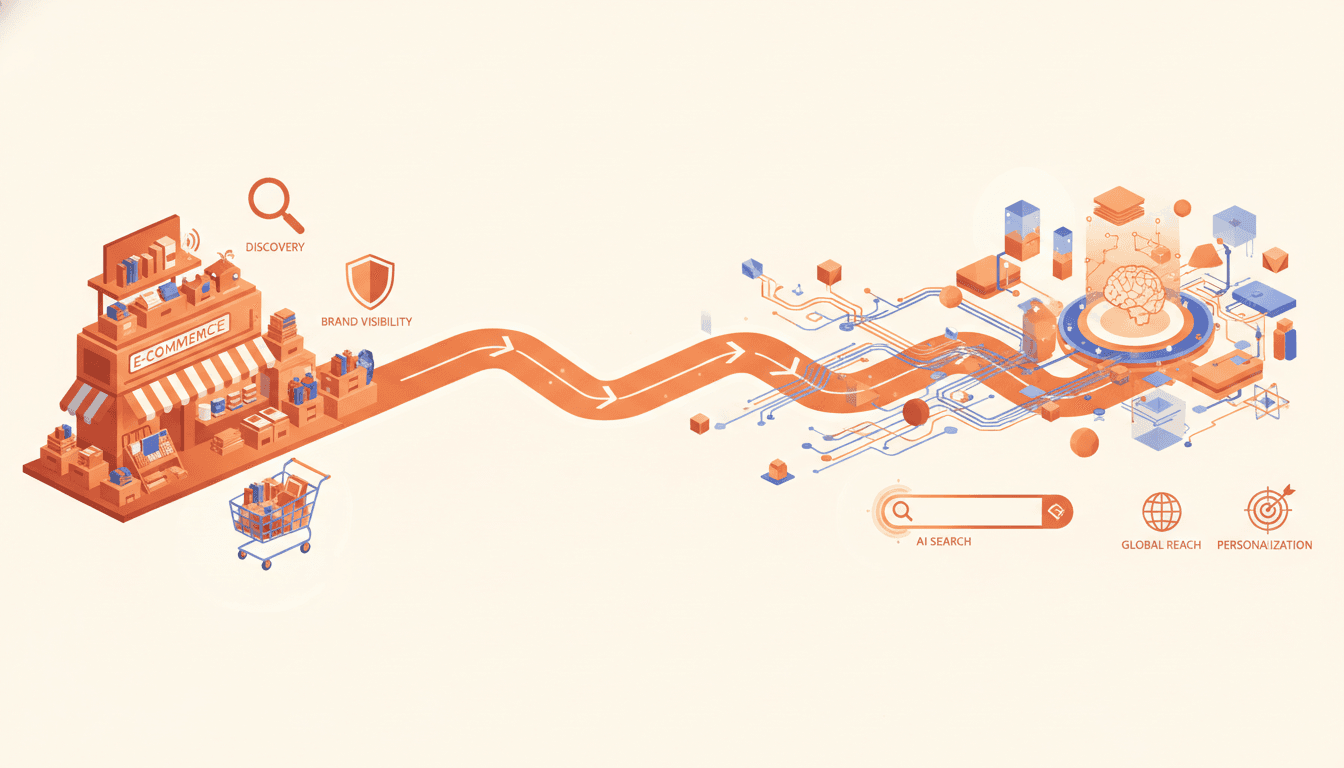

The Evolution of E-Commerce Product Discovery: From Text to Multimodal Search

For years, e-commerce search engines have relied heavily on keyword matching, a method that often fails to capture consumer intent, especially for visually driven or nuanced queries. This shortcoming produces irrelevant search results and lost sales opportunities. The emergence of multimodal AI is reshaping how consumers discover products online.

Consumer expectations have evolved dramatically:

- Shoppers now expect to search using images, voice, and rich contextual descriptions.

- The rise of mobile devices, smart speakers, and AI assistants fuels growth in visual and voice search.

- Multimodal search empowers users to express their needs more naturally, leading to higher satisfaction.

[IMG: Timeline showing evolution from text to multimodal search in e-commerce]

The impact of this shift is tangible. Forrester Research reports a 30% increase in product discovery rates on e-commerce sites that use multimodal search capabilities. Meanwhile, 52% of online shoppers used voice search for products in the past year (NPR/Edison Research). Sucharita Kodali, a retail analyst at Forrester, notes, “The future of product discovery is multimodal. E-commerce brands must adapt their content strategies to remain visible in AI-powered search engines.”

Looking forward, brands that ignore these evolving consumer behaviors risk losing visibility and market share. Multimodal AI represents more than a technological upgrade—it reflects how today’s shoppers truly want to interact, browse, and buy.

Impact of Multimodal AI Search on E-Commerce Visibility and Conversions

Multimodal AI search is already driving significant improvements in key e-commerce metrics by enhancing product relevance, personalization, and conversion rates. Here’s how:

- Enhanced relevance: By simultaneously processing images, text, and voice, AI delivers product recommendations that closely align with user intent.

- Personalization: Integrating preferences across multiple input types enables brands to craft tailored shopping experiences that boost engagement.

- Higher conversion rates: More accurate and frictionless search results translate into increased add-to-cart actions and purchases.

[IMG: Graph showing improved conversion rates after implementing multimodal AI search]

For instance, 62% of Gen Z consumers prefer brands that offer visual search features for browsing and shopping (Business of Apps). Retailers are responding accordingly: 67% plan to invest in AI-powered multimodal search by 2025 (Capgemini Research Institute). Brands that optimize for multimodal queries increase their likelihood of being recommended by AI assistants, directly boosting product discovery and conversions (McKinsey Digital).

Multimodal search proves especially effective in visual-first categories like fashion, home décor, and electronics (Forrester Research). Satya Nadella, CEO of Microsoft, highlights, “Brands that embrace multimodal search now will have a significant competitive advantage as AI-driven discovery becomes the standard.” This creates a virtuous cycle: superior user experiences foster loyalty, which increases lifetime value and brand advocacy.

Optimizing Your Brand for Image, Text, and Voice AI Queries

To thrive in the multimodal AI era, brands must rethink how they present and structure product information. Here’s how to prepare your catalog for next-generation search engines:

1. Enrich Product Data with High-Quality Images and Descriptive Text

- Use multiple high-resolution images showing products from various angles.

- Include lifestyle and contextual shots to provide visual cues that AI can interpret.

- Write clear, detailed product descriptions incorporating relevant keywords and attributes.

[IMG: Example of an optimized product listing with rich images and text]

2. Incorporate Voice Search Optimization Techniques

- Anticipate natural language queries and conversational phrases in product titles and descriptions.

- Use question-and-answer formats in FAQ sections to capture common voice search queries.

- Ensure your mobile site loads quickly and is accessible to support voice-driven searches.
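The question-and-answer tip above pairs naturally with structured data. As a minimal sketch, the snippet below builds schema.org `FAQPage` markup as JSON-LD from a list of question and answer pairs. The helper name `faq_jsonld` and the sample questions are hypothetical, and whether a given search or voice engine uses this markup is not guaranteed.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Hypothetical questions, phrased the way a shopper might speak them.
faq_markup = faq_jsonld([
    ("Does this lamp come in brass?",
     "Yes, it is available in brass and matte black."),
    ("How tall is the lamp?", "It stands 150 cm tall."),
])
print(faq_markup)
```

The resulting string would typically be placed in a `<script type="application/ld+json">` tag on the page that shows the same questions and answers.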

3. Leverage Structured Data and AI-Friendly Metadata

- Implement schema.org structured data (such as the Product type) to help AI engines accurately interpret product details.

- Tag images with descriptive alt text and relevant metadata.

- Organize categories and filters to align with how users search visually and verbally.
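To make the schema markup point concrete, here is a minimal sketch that turns a product record into schema.org `Product` JSON-LD. The input dictionary layout, the helper name `product_jsonld`, and the example values (including the example.com URLs) are assumptions for illustration. The property names (`name`, `sku`, `image`, `brand`, `offers`) come from the schema.org vocabulary.

```python
import json


def product_jsonld(product):
    """Build a schema.org Product object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": product["name"],
        "description": product["description"],
        "sku": product["sku"],
        "image": product["images"],  # list of image URLs
        "brand": {"@type": "Brand", "name": product["brand"]},
        "offers": {
            "@type": "Offer",
            "price": product["price"],
            "priceCurrency": product["currency"],
            "availability": "https://schema.org/InStock",
        },
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Hypothetical catalog record.
lamp = {
    "name": "Brass Arc Floor Lamp",
    "description": "Mid-century modern arc floor lamp with a brass finish.",
    "sku": "LAMP-001",
    "images": [
        "https://example.com/img/lamp-front.jpg",
        "https://example.com/img/lamp-side.jpg",
    ],
    "brand": "ExampleBrand",
    "price": "189.00",
    "currency": "USD",
}
product_markup = product_jsonld(lamp)
print(product_markup)
```

Generating the markup from the same record that feeds the product page helps keep the structured data and the visible listing in sync.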

Additional tips for preparing listings for generative AI engines:

- Avoid jargon and ambiguous terms; use language that mirrors how customers describe your products.

- Regularly audit and update product data to reflect current trends and seasonal keywords.

- Test search functionality across platforms that use multimodal AI to identify and address gaps.

For example, Shopify Plus recommends optimizing for multimodal AI by providing high-quality images, detailed descriptions, and structured metadata. This combined approach improves both discoverability and conversion.
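Part of the regular product-data audit suggested above can be automated. The sketch below flags listings with too few images, images missing alt text, or thin descriptions. The listing structure and the thresholds (three images, forty words) are assumptions chosen for illustration, not recommendations from any platform.

```python
def audit_listing(listing, min_images=3, min_words=40):
    """Return a list of human-readable issues found in one product listing."""
    issues = []
    images = listing.get("images", [])
    if len(images) < min_images:
        issues.append(
            f"only {len(images)} image(s); expected at least {min_images}"
        )
    for image in images:
        # Treat empty or whitespace-only alt text as missing.
        if not image.get("alt", "").strip():
            issues.append(f"missing alt text: {image['url']}")
    word_count = len(listing.get("description", "").split())
    if word_count < min_words:
        issues.append(
            f"description has {word_count} words; expected at least {min_words}"
        )
    return issues


# Hypothetical listing with deliberate gaps.
listing = {
    "sku": "LAMP-001",
    "description": "Brass arc floor lamp.",
    "images": [
        {"url": "lamp-front.jpg", "alt": "Brass arc floor lamp, front view"},
        {"url": "lamp-side.jpg", "alt": ""},
    ],
}
issues = audit_listing(listing)
for issue in issues:
    print(issue)
```

Running a check like this across the catalog on a schedule gives a simple worklist of listings to enrich first.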

4. Align Content Strategy with Multimodal Search Behaviors

- Analyze how your audience interacts with visual and voice search on your site.

- Create video demos and tutorials that AI search engines can index and surface.

- Encourage user-generated content, like customer photos and reviews, to enrich your data.
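To analyze how your audience uses each search mode, a first pass can be as simple as grouping search events by modality and comparing conversion rates. The sketch below assumes a hypothetical event log in which each record carries a `modality` label and a `purchased` flag. Real analytics exports will have a different shape.

```python
from collections import defaultdict


def conversion_by_modality(events):
    """Return {modality: purchases / searches} for a list of search events."""
    totals = defaultdict(lambda: {"searches": 0, "purchases": 0})
    for event in events:
        bucket = totals[event["modality"]]
        bucket["searches"] += 1
        bucket["purchases"] += int(event["purchased"])
    return {
        modality: bucket["purchases"] / bucket["searches"]
        for modality, bucket in totals.items()
    }


# Hypothetical search events exported from site analytics.
events = [
    {"modality": "text", "purchased": True},
    {"modality": "text", "purchased": False},
    {"modality": "image", "purchased": True},
    {"modality": "image", "purchased": True},
    {"modality": "voice", "purchased": False},
]
rates = conversion_by_modality(events)
for modality, rate in rates.items():
    print(f"{modality}: {rate:.0%}")
```

With real traffic volumes, the same breakdown can show which modality deserves content investment first.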

Brands that consistently optimize for all three modalities—text, image, and voice—will be best positioned for recommendations by AI-powered assistants and shopping platforms.

Real-World Examples and Technology Leaders in Multimodal AI Search

A growing number of technology pioneers and e-commerce brands are showcasing the power of multimodal AI search. Here’s who’s leading the charge:

Leading Platforms and Tools

- Google Lens: Enables users to search visually and combine queries with text, powering billions of searches monthly (Google Lens).

- Pinterest Visual Search: Drives discovery through AI-powered image recognition and recommendation engines (Pinterest Newsroom).

- OpenAI ChatGPT and Google Gemini: Facilitate multimodal interactions for shopping assistance and product recommendations.

[IMG: Screenshots of leading multimodal search tools in action]

E-Commerce Brand Case Studies

- Fashion Retailers: Brands like ASOS and Zara have integrated visual and voice search, resulting in notable lifts in product discovery and conversions (Forrester Research).

- Home Décor Platforms: Wayfair uses AI to let users search by room photos and spoken preferences, creating highly personalized shopping experiences.

How Hexagon Empowers Brands

Hexagon leads in AI-powered marketing, helping e-commerce businesses harness multimodal search to maximize impact. With deep expertise in generative AI, structured data, and e-commerce optimization, Hexagon enables brands to:

- Audit and enhance product data for AI compatibility.

- Integrate cutting-edge multimodal search tools and APIs.

- Develop content strategies tailored for next-generation discovery engines.

Real-world results demonstrate that Hexagon’s solutions boost product visibility and conversion rates. As the market evolves, Hexagon’s consultative approach ensures brands stay ahead of the curve.

Future Trends: Personalization, Recommendation Engines, and Multimodal Interaction

The future of e-commerce search lies in rapid advancements in AI-driven personalization and recommendation systems. Multimodal AI will continue transforming how brands connect with, engage, and convert shoppers.

Emerging trends include:

- Hyper-personalization: AI will utilize real-time data from text, image, and voice interactions to deliver individualized product recommendations.

- Contextual recommendation engines: Deep learning models will surface products based on subtle user behavior, preferences, and multimodal cues.

- Conversational commerce: Virtual shopping assistants will guide users through discovery using natural language, visuals, and voice.

[IMG: Conceptual graphic of a personalized AI-powered shopping experience]

Forecasts point to accelerating AI adoption in e-commerce. Comscore projected that voice queries would account for over half of all mobile searches by 2025, and 67% of retailers reported plans to invest in multimodal search solutions (Capgemini Research Institute). Gartner emphasizes that personalization in multimodal search improves when user preferences are integrated across all input types.

To stay competitive, brands must commit to ongoing optimization and experimentation. Ignoring these changes risks losing visibility among consumers and AI-powered recommendation engines (Search Engine Journal). Success will belong to those who embrace new modalities, monitor evolving behaviors, and collaborate with experts to lead in this dynamic landscape.

Conclusion: Embrace the Multimodal AI Revolution

The rise of multimodal AI search marks a pivotal shift in e-commerce product discovery. By mastering and optimizing for text, image, and voice queries, brands unlock greater product visibility, deeper personalization, and higher conversion rates. The evidence is clear: consumers crave richer, more intuitive search experiences, and leading retailers are already investing in multimodal capabilities to meet this demand.

For brands, the time to act is now—enrich your product data, align content strategies, and leverage the right AI technologies. Hexagon’s AI marketing expertise can guide you through this evolving landscape, future-proofing your brand for the next era of digital commerce.

[IMG: Call-to-action graphic inviting readers to schedule a consultation with Hexagon]

Hexagon empowers brands to harness the latest in AI-powered marketing. Stay ahead—optimize for multimodal search and win in tomorrow’s digital marketplace.

Hexagon Team

Published April 15, 2026