Why AI Hallucinations Occur in E-Commerce Search and How Brands Can Protect Their Reputation

Inaccurate AI-generated product recommendations are eroding consumer trust and damaging brand reputations in e-commerce. Discover why AI hallucinations happen, the real risks for your business, and the most effective strategies to protect your brand in this comprehensive guide.

[IMG: Frustrated customer looking at an inaccurate AI-generated product recommendation on a laptop]

In AI-driven e-commerce, inaccurate product recommendations and search results caused by AI hallucinations are more common than many brands realize. According to PwC, 32% of e-commerce customers received inaccurate AI-generated product information in the past year, and Gartner research finds that 46% of consumers would lose trust in a brand after an AI assistant presented them with false product information. This guide explains why AI hallucinations occur, how they damage brand reputation, and which strategies brands can adopt to reduce the risk while preserving customer trust.

Ready to safeguard your e-commerce brand from AI hallucinations and protect your reputation? Book a personalized strategy session with Hexagon’s AI marketing experts today.

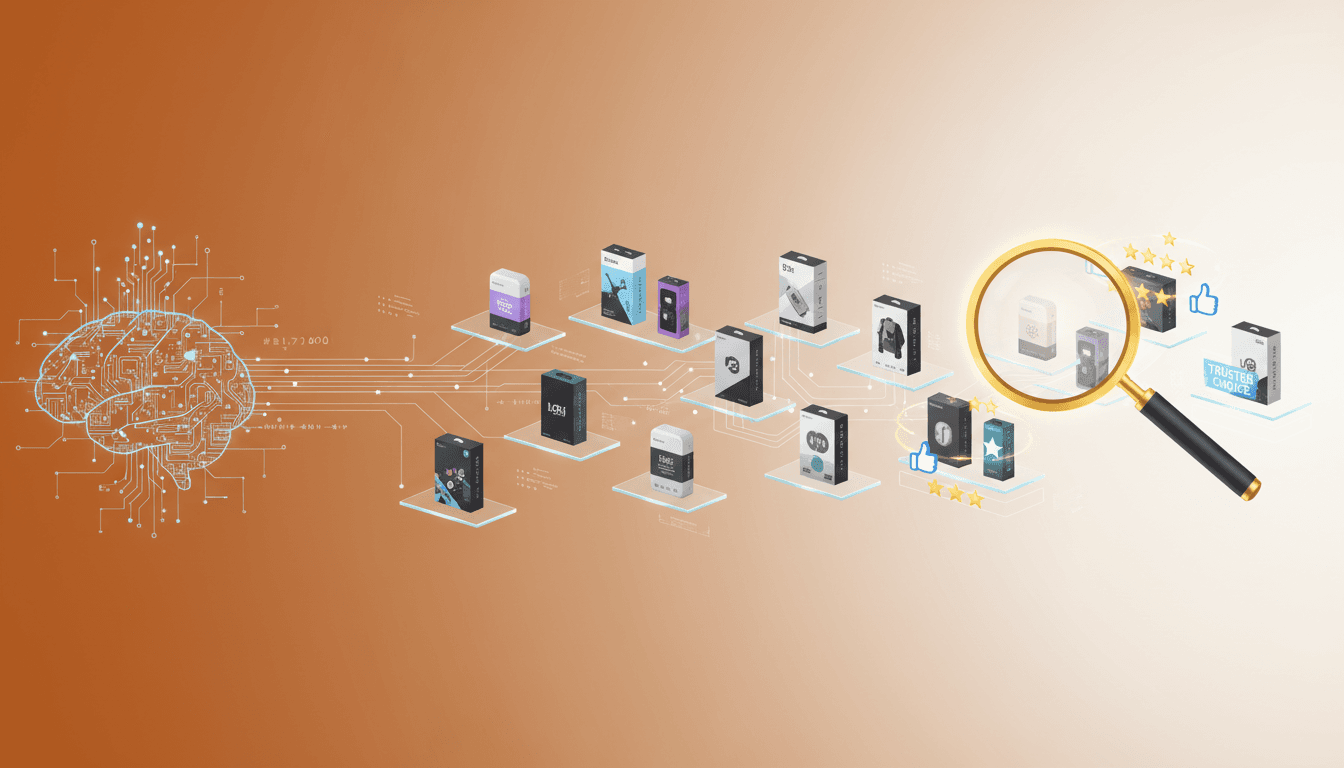

What Are AI Hallucinations and How Do They Occur in E-Commerce Search?

AI hallucinations occur when large language models (LLMs) or other generative AI systems produce information that sounds plausible but is incorrect. These errors often stem from gaps in training or product data and from ambiguous customer queries. In e-commerce, hallucinations typically appear as inaccurate product recommendations, fabricated features, or entirely nonexistent items presented to shoppers. These mistakes are not malicious: they arise because current models generate text probabilistically, producing a plausible-sounding continuation rather than checking each claim against a verified source.
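
To make the probabilistic point concrete, here is a toy Python sketch. The product name "X200", the catalog value, and every probability are invented for illustration, and whole phrases stand in for the tokens a real model would produce. It shows that when a model samples from a distribution in which wrong-but-plausible values carry some probability, a share of answers will be wrong even though every option reads fluently.

```python
import random

# Toy distribution over continuations a model might assign after the prompt
# "Battery life of the X200 headphones:". All values are illustrative
# assumptions; whole phrases stand in for real tokens.
CONTINUATION_PROBS = {
    "30 hours": 0.55,    # matches the (hypothetical) catalog
    "40 hours": 0.25,    # plausible but wrong
    "24 hours": 0.15,    # plausible but wrong
    "waterproof": 0.05,  # fluent but off-topic
}
CATALOG_VALUE = "30 hours"


def probability_of_error(probs: dict[str, float], correct: str) -> float:
    """Probability that a single sampled continuation contradicts the catalog."""
    return sum(p for option, p in probs.items() if option != correct)


def sample(probs: dict[str, float], rng: random.Random) -> str:
    """Draw one continuation with probability proportional to its weight."""
    options = list(probs)
    return rng.choices(options, weights=[probs[o] for o in options], k=1)[0]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the simulation is repeatable
    draws = [sample(CONTINUATION_PROBS, rng) for _ in range(10_000)]
    simulated = sum(d != CATALOG_VALUE for d in draws) / len(draws)
    print(f"expected error rate:  {probability_of_error(CONTINUATION_PROBS, CATALOG_VALUE):.2f}")
    print(f"simulated error rate: {simulated:.3f}")
```

Always taking the most likely option would return the correct value here, but only because this toy distribution happens to rank it first; when product data is missing, nothing guarantees that the most likely continuation is the true one.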

Consider a customer searching for a discontinued product: the AI might recommend a similar item but invent specifications or attributes that the item does not have. Research from Stanford HAI estimates that major LLMs hallucinate in 7–15% of real-time e-commerce product queries, underscoring the scale of the challenge (Stanford HAI, Language Models in E-Commerce).

Several factors contribute to AI hallucinations in e-commerce search:

- Data Gaps: When product catalogs are incomplete or outdated, AI fills in missing details with speculative or fabricated content.

- Ambiguous Queries: Vague or unclear customer questions confuse the AI, leading to generic or inaccurate results.

- Model Limitations: Even the most sophisticated AI cannot consistently differentiate between verified and unverified product information.
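
One common mitigation for ambiguous queries is to ask a clarifying question instead of letting the model guess. The Python sketch below is a deliberately simple, keyword-based illustration under stated assumptions: the category rules, keyword lexicon, and display names are all hypothetical, and a production system would use a proper query parser rather than word matching.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical rules: attributes a query must pin down before the assistant
# recommends anything in a category.
REQUIRED_ATTRIBUTES = {
    "phone": {"budget", "os"},
    "laptop": {"budget", "screen_size"},
}

# Hypothetical keyword lexicon mapping query words to (attribute, value).
KEYWORDS = {
    "android": ("os", "android"),
    "iphone": ("os", "ios"),
    "cheap": ("budget", "low"),
    "premium": ("budget", "high"),
    "13-inch": ("screen_size", "13"),
    "15-inch": ("screen_size", "15"),
}

DISPLAY_NAMES = {"budget": "budget", "os": "operating system", "screen_size": "screen size"}


@dataclass
class QueryDecision:
    category: Optional[str]
    attributes: dict[str, str]
    clarifying_question: Optional[str]  # None means specific enough to search


def analyze_query(query: str) -> QueryDecision:
    words = query.lower().split()
    category = next((w for w in words if w in REQUIRED_ATTRIBUTES), None)
    if category is None:
        return QueryDecision(None, {}, "What kind of product are you looking for?")
    # Collect every attribute the shopper stated explicitly.
    attributes = dict(KEYWORDS[w] for w in words if w in KEYWORDS)
    missing = sorted(REQUIRED_ATTRIBUTES[category] - set(attributes))
    if missing:
        wanted = " and ".join(DISPLAY_NAMES[m] for m in missing)
        question = f"To recommend a {category}, could you tell me your preferred {wanted}?"
        return QueryDecision(category, attributes, question)
    return QueryDecision(category, attributes, None)
```

With these rules, a vague query such as "best phone for travel" yields the question "To recommend a phone, could you tell me your preferred budget and operating system?", while "cheap android phone" passes straight through to search.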

Brands have reported instances where AI-powered search tools recommended discontinued products, mismatched accessories, or fabricated product features. According to McKinsey, generative AI-based recommendation engines can hallucinate prices, features, or entire products that the brand does not carry (McKinsey & Company, AI in Retail). Such hallucinations not only confuse customers but can quickly escalate into serious reputational issues.
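
A basic guard against hallucinated products, prices, and features is to check every AI claim against the catalog before it reaches a shopper. The Python sketch below assumes the AI's answer has already been parsed into structured fields (SKU, quoted price, claimed features); that parsing step, the class names, and the one-cent price tolerance are all assumptions for illustration, not a specific vendor's API.

```python
from dataclasses import dataclass


@dataclass
class CatalogItem:
    sku: str
    price: float
    features: set[str]


@dataclass
class Recommendation:
    """An AI answer already parsed into structured claims (an assumption)."""
    sku: str
    quoted_price: float
    claimed_features: set[str]


def validate(rec: Recommendation, catalog: dict[str, CatalogItem],
             price_tolerance: float = 0.01) -> list[str]:
    """Return a list of problems; an empty list means every claim is grounded."""
    item = catalog.get(rec.sku)
    if item is None:
        return [f"unknown product: {rec.sku}"]  # the AI invented an item
    issues = []
    if abs(rec.quoted_price - item.price) > price_tolerance:
        issues.append(f"price mismatch: quoted {rec.quoted_price:.2f}, catalog {item.price:.2f}")
    # Any claimed feature the catalog does not list is treated as unverified.
    invented = rec.claimed_features - item.features
    if invented:
        issues.append("unverified features: " + ", ".join(sorted(invented)))
    return issues
```

Responses with a non-empty issue list can be blocked, regenerated, or routed to review rather than shown to the customer.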

“AI hallucinations are a significant challenge, especially in e-commerce, where accuracy and trust are paramount. Brands must be vigilant and proactive in monitoring AI-generated outputs.” — Dr. Fei-Fei Li, Co-Director, Stanford HAI

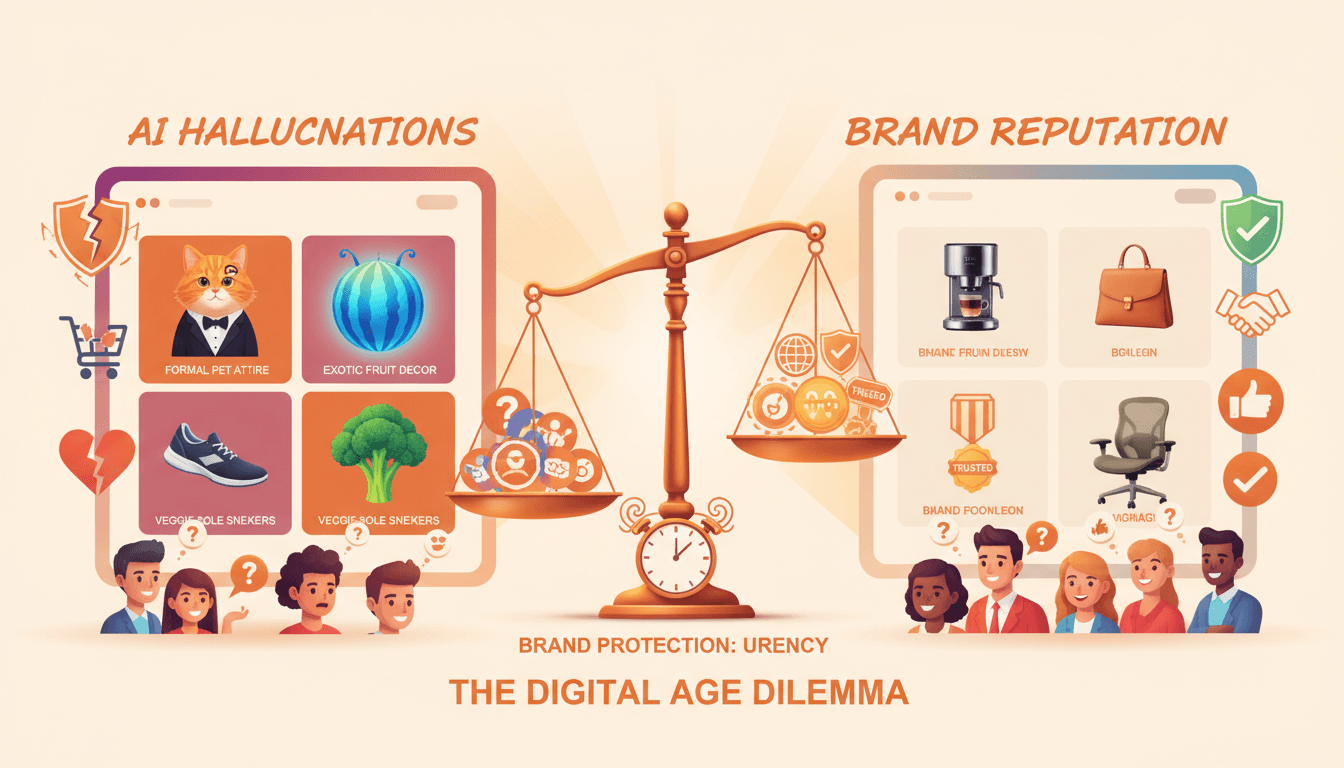

The Real-World Impact of AI Hallucinations on E-Commerce Brands

The fallout from AI hallucinations extends well beyond isolated customer confusion. Inaccurate AI-generated recommendations or search results can systematically erode customer trust and loyalty. The PwC Digital Insights Survey reveals that 32% of e-commerce customers have received inaccurate product information from AI-powered search tools in the past year.

Once trust is breached, customers are quick to react. Research indicates that 46% of consumers would lose trust in a brand after being presented with false product information by an AI assistant (Gartner, Consumer Trust and AI). This erosion of trust translates into measurable business impacts:

- Increased product returns: Brands grappling with AI hallucinations have seen up to a 15% rise in returns and customer complaints within a single quarter (Hexagon Internal Case Study, 2024).

- Higher customer service costs: One major retailer experienced a 2.3x surge in customer service tickets following a high-profile AI hallucination incident (Retail Dive, AI Search Gone Wrong).

- Negative reviews and public backlash: Persistent AI errors often lead to damaging social media coverage and lasting reputational harm.

“Inaccurate AI recommendations can quickly spiral from minor inconvenience to major reputational crisis if left unchecked.” — Brian Solis, Global Innovation Evangelist, Salesforce

For instance, a prominent apparel retailer faced a wave of negative reviews after its AI-powered search began recommending out-of-stock and mismatched products. The consequences included a surge in customer complaints, a decline in conversion rates, and a noticeable drop in repeat business over the following quarter.

The broader organizational impacts include:

- Brand Reputation: 17% of e-commerce brands report at least one significant reputational incident caused by AI-generated misinformation in search or recommendations (Forrester Research, The Impact of AI on Brand Reputation).

- Customer Loyalty: Customers exposed to hallucinated information are less likely to return, directly affecting customer lifetime value.

- Financial Losses: Increased returns, elevated support costs, and lost sales compound financial risks for brands.

As AI becomes further embedded throughout the e-commerce customer journey, unchecked hallucinations will only magnify these consequences. Brands must act decisively to prevent minor AI mistakes from escalating into major business threats.

Key Technical Causes Behind AI Hallucinations in E-Commerce

To effectively combat AI hallucinations, brands must understand their technical origins. Several key factors contribute to hallucinations in e-commerce search and recommendation systems:

- Outdated or Incomplete Product Data: Missing, outdated, or improperly formatted product information forces AI models to speculate, often resulting in invented features, prices, or compatibility claims.

- Ambiguous or Poorly Framed Queries: Customers frequently use vague or non-standard language when searching. Despite advances in natural language processing, AI models can misinterpret such queries and deliver incorrect or unrelated results.

- Limitations with Dynamic Inventory: E-commerce inventories change rapidly. AI models trained on static or delayed data struggle to reflect real-time product availability, leading to recommendations for discontinued or out-of-stock items.

- Integration Challenges: Inconsistent data synchronization between AI systems and catalog management platforms can amplify errors. Without seamless integration, even well-trained AI models may operate on obsolete information.

For example, an electronics retailer updating its inventory daily might still display recommendations for products no longer in stock if the AI model’s product database isn’t updated in real time. Additionally, ambiguous queries such as “best phone for travel” can prompt the AI to guess, potentially surfacing irrelevant or fabricated product attributes.
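
A common safeguard for the inventory problem is a last-mile filter that re-checks each recommended item against live inventory, and refuses to trust stock data that has not been synced recently. The Python sketch below illustrates the idea; the record fields and the 15-minute staleness budget are assumptions, not values from any particular platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class InventoryRecord:
    sku: str
    in_stock: bool
    discontinued: bool
    updated_at: datetime  # when this record was last synced from the catalog


MAX_STALENESS = timedelta(minutes=15)  # assumed freshness budget


def filter_recommendations(
    skus: list[str],
    inventory: dict[str, InventoryRecord],
    now: Optional[datetime] = None,
    max_staleness: timedelta = MAX_STALENESS,
) -> tuple[list[str], dict[str, str]]:
    """Keep only SKUs that exist, are sellable, and have fresh stock data."""
    if now is None:
        now = datetime.now(timezone.utc)
    kept: list[str] = []
    dropped: dict[str, str] = {}  # SKU -> reason it was removed
    for sku in skus:
        record = inventory.get(sku)
        if record is None:
            dropped[sku] = "not in catalog"
        elif record.discontinued:
            dropped[sku] = "discontinued"
        elif not record.in_stock:
            dropped[sku] = "out of stock"
        elif now - record.updated_at > max_staleness:
            dropped[sku] = "stock data stale"
        else:
            kept.append(sku)
    return kept, dropped
```

Logging the `dropped` reasons also gives the integration team a running signal of how often the AI's view of the catalog falls out of sync.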

“The best defense against AI hallucinations is a robust feedback loop—combining real-time data, human oversight, and collaboration with your AI providers.” — Priya Raman, VP of Data Science, Shopify

With this understanding, brands can shift from reactive troubleshooting to proactive prevention.

Strategies to Minimize AI Hallucination Risks and Protect Brand Reputation

Forward-thinking brands are deploying multiple layers of defense to reduce AI hallucination risks, safeguard their reputation, and foster long-term customer trust. The right blend of technology, process, and communication is essential to ensure AI-powered e-commerce search delivers accurate and reliable results.

1. Rigorous Data Verification and Regular Catalog Updates

- Maintain consistently accurate, complete, and current product data across all systems.

- Implement automated pipelines to synchronize product inventories, descriptions, and pricing between catalog management and AI platforms.

- Conduct frequent audits to identify and rectify discrepancies before they affect customer-facing AI outputs.
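
The audit step can be partly automated by diffing the catalog (the source of truth) against the copy the AI platform actually serves from. The Python sketch below assumes both sides can be exported as plain dictionaries keyed by SKU; the field names are hypothetical, and prices are compared exactly because a synced copy should match byte for byte.

```python
AUDITED_FIELDS = ("title", "price", "in_stock")  # hypothetical field names


def audit(source: dict[str, dict], ai_copy: dict[str, dict],
          fields: tuple[str, ...] = AUDITED_FIELDS) -> list[str]:
    """List discrepancies between the catalog and the AI platform's copy."""
    findings = []
    # Products the AI cannot see, so it may fill the gap with guesses.
    for sku in sorted(source.keys() - ai_copy.keys()):
        findings.append(f"{sku}: missing from AI index")
    # Products the AI still knows about but the brand no longer lists.
    for sku in sorted(ai_copy.keys() - source.keys()):
        findings.append(f"{sku}: in AI index but not in catalog")
    # Field-level drift on products present in both systems.
    for sku in sorted(source.keys() & ai_copy.keys()):
        for field in fields:
            expected, served = source[sku].get(field), ai_copy[sku].get(field)
            if expected != served:
                findings.append(f"{sku}.{field}: catalog={expected!r} ai={served!r}")
    return findings
```

Running a check like this on a schedule, and failing the sync pipeline when findings exceed an agreed limit, catches discrepancies before they surface in customer-facing answers.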

2. Leverage Human-in-the-Loop Approaches for Sensitive Categories

- Integrate human oversight into recommendation processes for high-value or regulated product categories.

- Employ expert reviewers to validate AI-generated outputs, especially in health, electronics, or financial product segments.

- Balance automation with manual checks to catch nuanced errors that AI might miss.
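
A human-in-the-loop setup usually comes down to a routing rule: low-risk outputs publish automatically, while outputs in sensitive or high-value categories wait for a reviewer. The Python sketch below uses the categories named above; the $500 high-value threshold and the class names are hypothetical.

```python
from collections import deque
from dataclasses import dataclass
from typing import Optional

SENSITIVE_CATEGORIES = {"health", "electronics", "financial"}
HIGH_VALUE_THRESHOLD = 500.0  # hypothetical price cutoff for "high-value"


@dataclass
class AIOutput:
    output_id: str
    category: str
    price: float
    text: str


class ReviewRouter:
    """Publishes low-risk AI outputs directly and holds the rest for a human."""

    def __init__(self) -> None:
        self.review_queue: deque = deque()  # pending AIOutput items, oldest first
        self.published: list[AIOutput] = []

    def needs_review(self, output: AIOutput) -> bool:
        return output.category in SENSITIVE_CATEGORIES or output.price >= HIGH_VALUE_THRESHOLD

    def submit(self, output: AIOutput) -> str:
        if self.needs_review(output):
            self.review_queue.append(output)
            return "queued_for_review"
        self.published.append(output)
        return "published"

    def review_next(self, approved: bool) -> Optional[AIOutput]:
        """Apply a reviewer's decision to the oldest pending output."""
        if not self.review_queue:
            return None
        output = self.review_queue.popleft()
        if approved:
            self.published.append(output)  # rejected outputs are simply dropped
        return output
```

The routing rule is where automation and manual checks are balanced: tightening the threshold sends more traffic to reviewers, loosening it trades review cost for risk.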

3. Deploy AI Monitoring Tools to Detect and Flag Hallucinated Outputs

- Invest in real-time monitoring solutions that assess AI outputs for accuracy and flag suspicious or novel recommendations.

- Use anomaly detection systems to intercept hallucinations before they reach customers.

- Incorporate customer feedback mechanisms to facilitate easy reporting of inaccurate recommendations.
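
Monitoring can start simply: track what share of recent AI responses were flagged, either automatically (for example by a catalog check) or by a shopper's report, and alert when that share crosses a threshold. The Python sketch below is a plain fixed-threshold rate alert rather than a statistical anomaly detector; the window size, the 2% alert rate, and the assumption that response ids are unique are all illustrative.

```python
from collections import deque


class HallucinationMonitor:
    """Fixed-threshold alert on the share of recent AI responses flagged as inaccurate."""

    def __init__(self, window: int = 1000, alert_rate: float = 0.02) -> None:
        self.alert_rate = alert_rate
        self.recent: deque = deque(maxlen=window)  # unique response ids, oldest first
        self.flagged: set[str] = set()  # ids flagged by validators or customers

    def record_response(self, response_id: str, auto_flagged: bool = False) -> None:
        if len(self.recent) == self.recent.maxlen:
            self.flagged.discard(self.recent[0])  # oldest id is about to be evicted
        self.recent.append(response_id)
        if auto_flagged:
            self.flagged.add(response_id)

    def record_customer_report(self, response_id: str) -> bool:
        """Flag a response a shopper reported; False if it is outside the window."""
        if response_id in self.recent:  # linear scan, acceptable for a sketch
            self.flagged.add(response_id)
            return True
        return False

    def flagged_rate(self) -> float:
        return len(self.flagged) / len(self.recent) if self.recent else 0.0

    def should_alert(self) -> bool:
        return self.flagged_rate() > self.alert_rate
```

Counting customer reports against the same window ties the feedback mechanism directly to the alerting signal, so a spike in reports triggers the same response as a spike in automated flags.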

4. Establish Clear Transparency and Communication Protocols

- Be open with customers about how AI recommendations are generated and verified.

- Provide disclaimers or “Why this recommendation?” explanations to enhance transparency and set realistic expectations.

- Respond promptly and transparently to reported AI errors to maintain trust.
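
A "Why this recommendation?" explanation is most trustworthy when it cites only verified catalog data rather than letting the model write its own justification. The Python sketch below pairs an AI-disclosure label with a reason built from attributes that match the shopper's stated preferences; the label wording, product, and attribute names are hypothetical.

```python
from dataclasses import dataclass

AI_LABEL = "Recommended by our AI shopping assistant"  # hypothetical wording


@dataclass
class Product:
    sku: str
    name: str
    attributes: dict[str, str]  # verified catalog data only


def explain(product: Product, preferences: dict[str, str]) -> str:
    """Build a disclosure plus a reason that cites only catalog-verified matches."""
    matches = [
        f"{attr.replace('_', ' ')}: {product.attributes[attr]}"
        for attr in sorted(preferences)
        if product.attributes.get(attr) == preferences[attr]
    ]
    if matches:
        why = (f"{product.name} matches what you asked for "
               f"({'; '.join(matches)}), based on our product catalog.")
    else:
        # Say so plainly instead of inventing a reason.
        why = ("We could not confirm that this item matches your stated "
               "preferences; please check the product details.")
    return f"{AI_LABEL}. Why this recommendation? {why}"
```

When nothing can be verified, the sketch admits it instead of producing a plausible-sounding rationale, which is exactly the kind of output the explanation is meant to guard against.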

5. Collaborate Closely with AI Search Technology Providers

- Work directly with AI vendors to customize models according to your brand’s catalog specifics and safety requirements.

- Share up-to-date product data, edge cases, and customer feedback to help refine algorithms.

- Negotiate service-level agreements (SLAs) and escalation procedures for AI-related incidents to ensure swift response and resolution.
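
Once SLAs are agreed, escalation can be driven by data rather than memory. The Python sketch below computes each incident's response deadline from its severity and lists unacknowledged incidents most-urgent first; the severity levels and response targets are hypothetical placeholders for whatever the contract actually specifies.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class Severity(Enum):
    CRITICAL = "critical"  # e.g. wrong prices or safety claims live on the site
    HIGH = "high"
    LOW = "low"


# Hypothetical vendor response targets; real values come from the negotiated SLA.
RESPONSE_SLA = {
    Severity.CRITICAL: timedelta(hours=1),
    Severity.HIGH: timedelta(hours=8),
    Severity.LOW: timedelta(days=3),
}


@dataclass
class Incident:
    incident_id: str
    severity: Severity
    opened_at: datetime
    vendor_acknowledged_at: Optional[datetime] = None

    def deadline(self) -> datetime:
        return self.opened_at + RESPONSE_SLA[self.severity]

    def is_breached(self, now: datetime) -> bool:
        # An acknowledged incident is judged by when the vendor responded;
        # an open one by the current time.
        responded = self.vendor_acknowledged_at or now
        return responded > self.deadline()


def escalation_queue(incidents: list[Incident], now: datetime) -> list[tuple[str, bool]]:
    """Unacknowledged incidents, earliest deadline first, with breach status."""
    open_incidents = [i for i in incidents if i.vendor_acknowledged_at is None]
    open_incidents.sort(key=lambda i: i.deadline())
    return [(i.incident_id, i.is_breached(now)) for i in open_incidents]
```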

Leading brands are already implementing real-time data feeds, human-in-the-loop verification, and robust product catalog management to proactively prevent hallucinations (Gartner, AI Risk Management for E-Commerce). Close collaboration with AI search providers ensures data inputs remain timely and accurate, reducing hallucination risks on third-party platforms (Shopify Engineering Blog, Preventing AI Misinformation).

Transparency plays a critical role as well. According to Harvard Business Review, brands that communicate openly about their use of AI and their error-correction processes can significantly mitigate reputational damage when mistakes occur.

Looking ahead, the most resilient e-commerce brands will combine technological vigilance, human expertise, and open communication to keep AI hallucinations in check.

Ready to take charge of your AI risk management? Book a personalized strategy session with Hexagon’s AI marketing experts today.

[IMG: Flowchart of best practices for preventing AI hallucinations in e-commerce]

Navigating the Regulatory Landscape for AI-Generated Content in E-Commerce

As AI-generated content becomes integral to commerce, regulatory bodies are intensifying scrutiny. Emerging guidelines and regulations aim to ensure accuracy, transparency, and consumer protection in AI-powered search and recommendations.

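- EU AI Act: The European Union's AI Act, proposed by the European Commission and adopted in 2024, sets risk-based obligations for AI systems, including transparency requirements and, for systems classified as high-risk, stricter accuracy and risk-management requirements (European Commission, AI Act Proposal). Brands should assess which of these obligations apply to their AI-powered search and recommendation tools.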

- Transparency Mandates: Increasingly, brands must disclose when customers interact with AI-generated content and provide accessible channels for error correction.

- Misinformation Mitigation: Compliance frameworks encourage proactive monitoring of AI outputs and prompt correction or removal of inaccurate information to minimize consumer harm.

For instance, some jurisdictions now require e-commerce platforms to clearly label when product recommendations are AI-generated or influenced and to offer straightforward mechanisms for customers to report inaccuracies.

Aligning with these regulatory trends is not only about compliance—it is a strategic business move. Proactive measures such as robust data management and transparent communication reduce legal risks while reinforcing customer trust.

As the regulatory environment continues to evolve, brands that anticipate and meet these requirements will be better positioned for long-term success.

Conclusion: Building Long-Term Trust by Addressing AI Hallucinations Head-On

AI hallucinations pose a growing threat to e-commerce brand integrity, customer satisfaction, and regulatory compliance. The risks are unmistakable: inaccurate product recommendations can swiftly erode trust, drive up returns and support costs, and spark reputational crises. Yet, with the right strategies, these risks become manageable—and even offer an opportunity for brands to distinguish themselves through transparency and reliability.

To summarize, the most effective brands:

- Regularly audit and update product data to eliminate information gaps.

- Incorporate human oversight for sensitive categories and edge cases.

- Utilize real-time monitoring to detect and correct hallucinations before customers encounter them.

- Communicate openly about AI’s role in search and recommendations and respond swiftly to errors.

- Collaborate closely with AI technology partners to develop tailored, brand-safe solutions.

Continuous improvement is essential. The AI landscape evolves rapidly, and so must your risk management and customer experience strategies. By fostering a culture of vigilance, accountability, and partnership, brands can transform AI-powered search from a vulnerability into a competitive advantage.

Take decisive action today to protect your brand and customers from the risks of AI hallucinations. Book a personalized strategy session with Hexagon’s AI marketing experts now.

[IMG: Confident e-commerce team reviewing AI risk management dashboard]

Disclaimer: This post references research and case studies from PwC, Forrester, Gartner, Stanford HAI, McKinsey, Retail Dive, Harvard Business Review, the Shopify Engineering Blog, the European Commission, and internal Hexagon studies. All trademarks and brands are property of their respective owners.

Hexagon Team

Published March 10, 2026