Multimodal AI Search and Its Transformative Impact on E-Commerce Product Discovery: A 2026 Industry Analysis

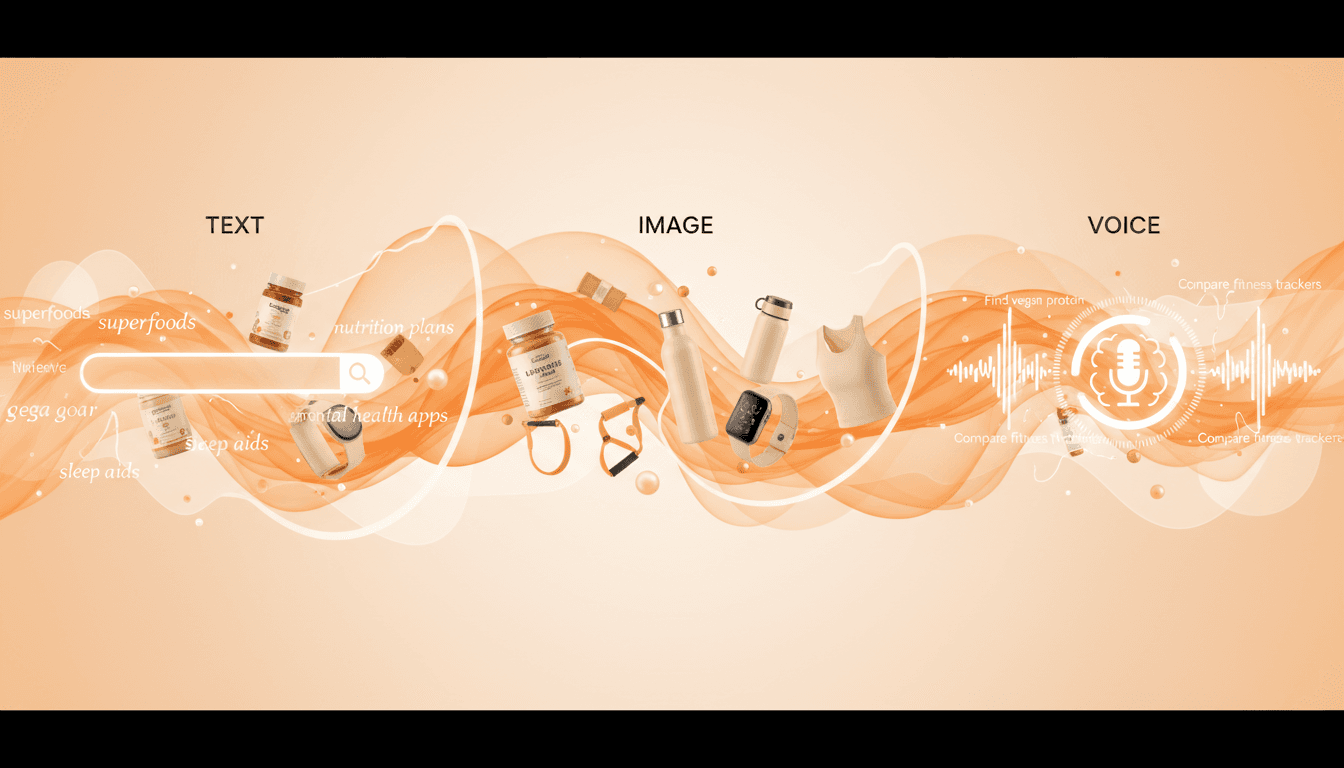

By 2026, multimodal AI search will be the cornerstone of e-commerce product discovery, blending text, voice, and image to create seamless, personalized shopping experiences. This in-depth industry analysis reveals how leading brands are leveraging Generative Engine Optimization (GEO) to stay ahead and what it takes to future-proof your e-commerce strategy.

[IMG: Diverse group of online shoppers using voice, text, and image search on various devices]

Imagine searching for a product not just by typing keywords but by snapping a photo, speaking naturally, or combining both, with results tailored to your preferences. This is no longer a distant vision. Voice-driven queries are projected to account for 30% of all e-commerce searches by 2026 (Voicebot.ai), and 70% of fashion and beauty product queries now involve an image component (Pinterest Business Insights). Multimodal AI search is reshaping how consumers discover products online. In this analysis, we unpack what multimodal AI search entails, how it is changing product discovery, and the GEO strategies essential for staying ahead.

Ready to future-proof your e-commerce product discovery with cutting-edge multimodal AI strategies? Book a personalized 30-minute consultation with Hexagon’s AI marketing experts today.

Understanding Multimodal AI Search: Definition and Evolution

Multimodal AI search lets users find products using a blend of text, voice, and images, offering a more natural and personalized shopping journey. Unlike traditional search engines that rely solely on typed keywords, multimodal systems can interpret spoken commands, analyze uploaded photos, and understand nuanced textual prompts, often within a single query. This combined approach is changing how e-commerce search works.

The journey began with text-only queries, then expanded to include images through reverse image search, and more recently embraced voice inputs. Today’s leading AI engines—such as ChatGPT, Gemini, and Perplexity—use large-scale transformer models trained on diverse datasets, enabling them to process and interlink text, images, and spoken queries seamlessly (OpenAI Technical Report). According to Gartner, 60% of Gen Z consumers now use at least two modalities—text, image, or voice—when searching for products online.

Why is this shift so significant? As Microsoft CEO Satya Nadella puts it, “Multimodal AI search is not just about convenience; it’s about redefining the boundaries of online product discovery and ushering in a new era of truly personalized commerce.” Today’s consumers expect to find products in the way that feels most natural—whether snapping a photo, speaking a brand name, or typing a detailed description.

Multimodal AI search enhances product discovery and customer engagement in several key ways:

- Increased accessibility: Shoppers can choose the modality that fits their environment or preference.

- Faster, more accurate results: AI engines combine visual and contextual cues to deliver precise recommendations.

- Personalized journeys: Multimodal search adapts dynamically to individual shopping behaviors, crafting unique discovery paths.

Looking forward, e-commerce brands that embrace multimodal AI search will be best positioned to capture the attention of modern, digital-native consumers.

How Leading AI Engines Process and Integrate Multimodal Inputs

Beneath the surface, leading AI search engines combine large language models (LLMs) with vision-language transformers that cross-reference and align text, image, and voice data in real time. This architecture forms the backbone of next-generation product discovery.

Consider an AI engine receiving an uploaded photo of a sneaker, a spoken phrase such as “red Nike running shoes,” and a typed size preference. The multimodal architecture deciphers each input, correlates the data, and surfaces the most relevant products. Demis Hassabis, CEO of Google DeepMind, highlights, “AI engines that understand and interlink text, image, and voice inputs are fundamentally transforming how brands engage customers online.”
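The sneaker scenario above can be sketched as a small retrieval routine. This is an illustrative sketch rather than how any particular engine works: it assumes the photo and the spoken phrase have already been converted into vectors in a shared embedding space, and the three-dimensional vectors, the `CATALOG` records, and the equal fusion weights are all hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def fuse(vectors, weights):
    """Weighted average of the per-modality query vectors (late fusion)."""
    total = sum(weights)
    dim = len(vectors[0])
    return [sum(w * v[i] for v, w in zip(vectors, weights)) / total for i in range(dim)]

# Hypothetical catalog. The toy dimensions loosely mean
# (redness, running-shoe-ness, boot-ness); real embeddings have hundreds of dimensions.
CATALOG = [
    {"sku": "run-red-01", "sizes": {9, 10, 11}, "vec": [0.9, 0.8, 0.1]},
    {"sku": "run-blue-02", "sizes": {10, 11}, "vec": [0.1, 0.9, 0.1]},
    {"sku": "boot-red-03", "sizes": {10}, "vec": [0.8, 0.1, 0.9]},
    {"sku": "run-red-04", "sizes": {8, 9}, "vec": [0.95, 0.85, 0.05]},
]

def search(image_vec, voice_vec, size, top_k=2):
    """Filter by the typed size, then rank by similarity to the fused query."""
    query = fuse([image_vec, voice_vec], weights=[0.5, 0.5])
    candidates = [p for p in CATALOG if size in p["sizes"]]
    ranked = sorted(candidates, key=lambda p: cosine(query, p["vec"]), reverse=True)
    return [p["sku"] for p in ranked[:top_k]]

# Stand-ins for encoder outputs: a sneaker photo and "red Nike running shoes".
results = search(image_vec=[0.85, 0.7, 0.2], voice_vec=[1.0, 0.9, 0.0], size=10)
print(results)
```

The typed size acts as a hard filter while the photo and the voice query shape the ranking, which is why the closest visual match (`run-red-04`) is excluded when it is not stocked in size 10.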

Generative AI further enhances this capability. Context-aware algorithms fill information gaps, for example by inferring style from images or clarifying ambiguous voice queries. These models are what Generative Engine Optimization (GEO) targets: GEO is a specialized discipline focused on optimizing product data so AI engines can better understand and recommend products across modalities.

Leading AI engines achieve this through:

- Multimodal embeddings: Creating shared representations for text, image, and voice inputs.

- Cross-modal reasoning: Employing attention mechanisms to align content across different input types.

- Real-time adaptation: Continuously learning from user interactions to boost relevance.
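To make the cross-modal reasoning item more concrete, the following is a minimal sketch of scaled dot-product cross-attention, the general mechanism transformer models use to align one input with another. It is a simplification under stated assumptions: the learned projection matrices of a real model are omitted, and the two-dimensional token and region vectors are invented for illustration.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, keys, values):
    """Each query (text token) attends over keys/values (image regions)."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        mixed = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical vectors: text tokens "red" and "shoe" as queries,
# image regions (upper, sole, background) as keys and values.
text_tokens = [[2.0, 0.0], [0.0, 2.0]]
image_regions = [[1.0, 0.5], [0.0, 1.0], [0.0, 0.0]]

outputs, weights = cross_attention(text_tokens, image_regions, image_regions)
for token, row in zip(["red", "shoe"], weights):
    print(token, [round(w, 2) for w in row])
```

In this toy setup the token "red" places most of its weight on the first region and "shoe" on the second, which is the sense in which attention aligns content across input types.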

The results speak volumes: Brands adopting multimodal GEO strategies report a 45% increase in AI-assisted product discovery (Forrester). As these engines evolve, mastering GEO will be critical for e-commerce success.

Sector-Specific Impacts of Multimodal AI Search in E-Commerce

Multimodal AI search does not offer a one-size-fits-all solution. Its impact varies across sectors, with fashion, beauty, home decor, electronics, and grocery each harnessing distinct facets of this technology to enrich product discovery.

In fashion and beauty, visual search dominates. Pinterest Business Insights reveals that 70% of product queries now include an image component. Consumers upload photos of desired styles or colors, combine them with voice queries like “find similar in my size,” and receive highly tailored recommendations. As Raja Rajamannar, Chief Marketing & Communications Officer at Mastercard, states, “Multimodal search is already proving its value in sectors like fashion and beauty, where visual input is essential. The next challenge is making every product discoverable, regardless of how users search.”

[IMG: Shopper using image and voice search to find a dress on a mobile device]

For home decor and electronics, multimodal AI enables deeper contextual understanding. Shoppers may upload a photo of their living room, describe the aesthetic they want, and specify dimensions via text. AI engines interpret these layers holistically, suggesting products that align with both style and technical requirements.

In the grocery sector, voice-driven search is rapidly gaining traction. Shoppers ask for “organic gluten-free pasta,” scan barcodes, or photograph empty packaging. By 2026, voice-driven product queries are expected to constitute 30% of all e-commerce searches (Voicebot.ai).

Key sector-specific user behavior patterns include:

- Fashion & beauty: Heavy reliance on image and voice inputs, driven by trend-focused searches.

- Home decor & electronics: Multimodal queries emphasizing compatibility, dimensions, and style cohesion.

- Grocery: Fast, convenience-oriented queries often blending voice with text, barcode scans, or photos of packaging.

Platforms embracing multimodal AI search report a 28% reduction in cart abandonment rates, thanks to more accurate recommendations and frictionless user journeys (Adobe Digital Economy Index). This underscores a vital lesson: tailoring multimodal search experiences to sector-specific needs delivers measurable business impact.

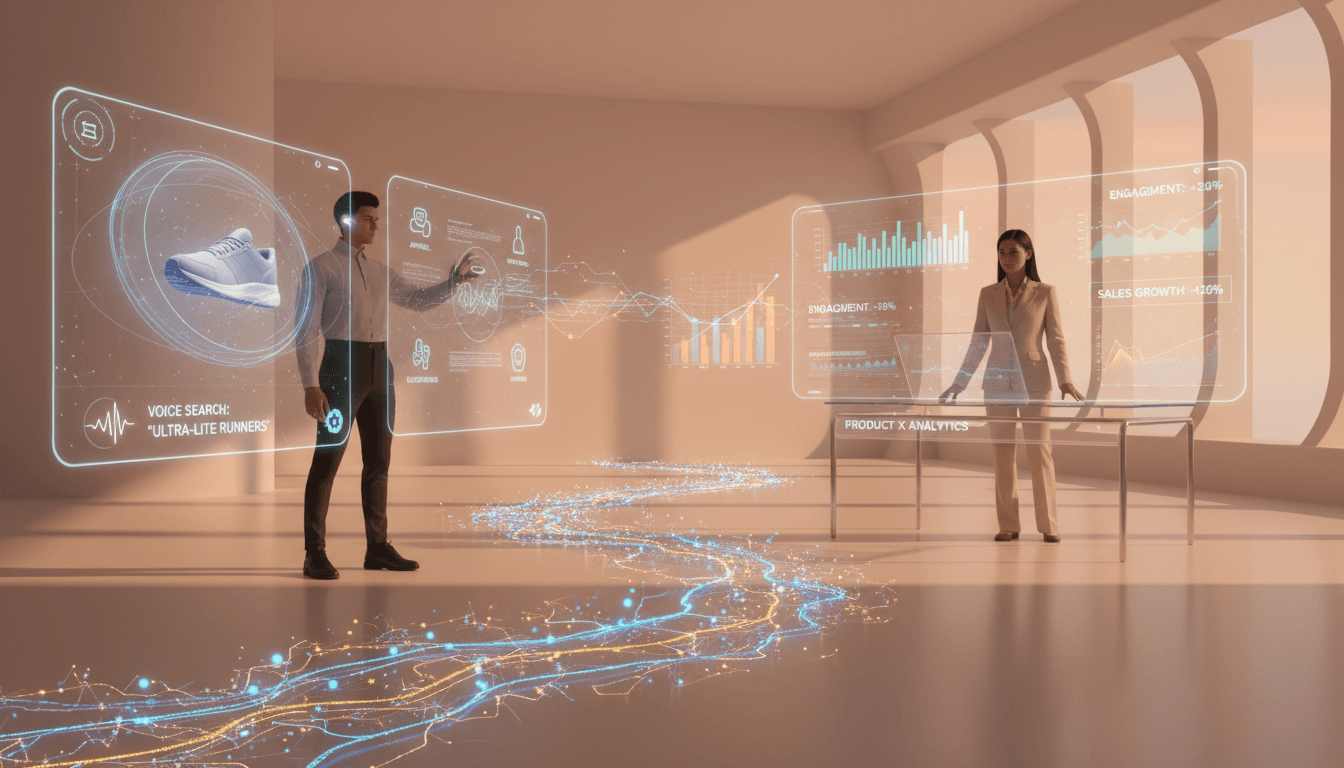

Case Studies: Brands Successfully Leveraging Multimodal GEO

Leading brands like Sephora and Nike provide compelling models of how multimodal GEO is transforming e-commerce product discovery.

Sephora has been at the forefront, integrating image and voice search through its Virtual Artist and mobile apps. Customers upload selfies to receive AI-powered color matching and use voice commands to explore tutorials or request recommendations. This seamless blend has boosted engagement and conversion rates, encouraging shoppers to spend more time exploring personalized beauty looks.

Nike, meanwhile, employs multimodal AI search both online and in physical stores. Customers can snap photos of footwear, use voice input to specify preferences (“waterproof trail running shoes”), and instantly access matching products. Nike’s mobile app enhances this experience further, streamlining discovery, increasing upsell opportunities, and fostering brand loyalty.

[IMG: Sephora app interface showing image-based search and AI-powered product recommendations]

Key takeaways from these pioneers include:

- Invest in AI-ready media assets: High-quality images, descriptive alt text, and voice-friendly content are essential.

- Integrate multimodal capabilities across all customer touchpoints: From websites to mobile apps to physical stores.

- Analyze user behavior across modalities: Use insights to continually refine search relevance and personalization.

Ultimately, brands that embed multimodal GEO into their operations see not only higher engagement but also significant gains in conversion rates and customer satisfaction.

Best Practices for Optimizing Product Data for Multimodal AI Engines

Unlocking the full potential of multimodal AI search requires brands to optimize product data for text, image, and voice queries. Here are proven strategies to make your catalog AI-ready:

- Enrich metadata: Provide detailed, structured product information including size, color, material, and style.

- Leverage high-quality images: Offer multiple angles, zoom capabilities, and consistent backgrounds. Tag images with descriptive alt text and captions.

- Craft voice-friendly descriptions: Use natural language that anticipates how consumers phrase spoken queries.

- Implement structured data markup: Utilize schema.org and other standards to make product attributes machine-readable.

- Standardize data formats: Maintain consistent taxonomy and labeling across all product listings for smoother AI interpretation.
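As one way to apply the structured data markup practice above, the sketch below builds a schema.org `Product` object as JSON-LD from a catalog record. The schema.org types and properties used (`Product`, `Brand`, `Offer`, `color`, `material`, `size`, `availability`) are part of the public vocabulary; the record's field names, the example product, and the URLs are hypothetical.

```python
import json

def product_jsonld(record):
    """Map a catalog record (hypothetical field names) to a schema.org Product."""
    availability = "InStock" if record["in_stock"] else "OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": record["name"],
        "description": record["description"],
        "sku": record["sku"],
        "brand": {"@type": "Brand", "name": record["brand"]},
        "color": record["color"],
        "material": record["material"],
        "size": record["size"],
        "image": record["images"],
        "offers": {
            "@type": "Offer",
            "price": record["price"],
            "priceCurrency": record["currency"],
            "availability": f"https://schema.org/{availability}",
        },
    }

# Illustrative record; every value here is made up.
record = {
    "name": "Trail Runner",
    "description": "A lightweight red running shoe with a waterproof upper.",
    "sku": "TR-RED-10",
    "brand": "ExampleBrand",
    "color": "red",
    "material": "mesh",
    "size": "10",
    "images": ["https://example.com/img/trail-runner-side.jpg"],
    "price": "89.99",
    "currency": "USD",
    "in_stock": True,
}

markup = json.dumps(product_jsonld(record), indent=2)
# JSON-LD is typically embedded in the product page inside a script tag.
script_tag = f'<script type="application/ld+json">\n{markup}\n</script>'
print(script_tag)
```

Generating the markup from the same record that feeds the storefront helps keep the taxonomy and labels consistent across listings.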

[IMG: Product data dashboard showing structured metadata, image optimization, and voice-ready descriptions for an e-commerce catalog]

A well-optimized product page typically includes:

- Text descriptions featuring conversational keywords.

- Images labeled with relevant alt text (e.g., “red running shoe, side view”).

- Audio snippets or guides for complex products.

- Structured data that highlights key attributes such as size, fit, and color.
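The checklist above lends itself to an automated readiness check. The sketch below is one possible version, not a standard: the record layout, the `audit_product` helper, and the 12-word description threshold are assumptions chosen for illustration.

```python
REQUIRED_ATTRIBUTES = ("size", "fit", "color")  # the key attributes named above
MIN_DESCRIPTION_WORDS = 12  # assumed threshold; tune for your catalog

def audit_product(record):
    """Return a list of readiness issues for one product record."""
    issues = []
    if len(record.get("description", "").split()) < MIN_DESCRIPTION_WORDS:
        issues.append("description too short for conversational queries")
    images = record.get("images", [])
    if not images:
        issues.append("no images")
    for image in images:
        if not image.get("alt", "").strip():
            issues.append(f"missing alt text: {image.get('src', 'unknown image')}")
    attributes = record.get("attributes", {})
    for name in REQUIRED_ATTRIBUTES:
        if not attributes.get(name):
            issues.append(f"missing attribute: {name}")
    return issues

# Hypothetical record with three gaps.
page = {
    "description": "Red running shoe for trails.",
    "images": [
        {"src": "shoe-side.jpg", "alt": "red running shoe, side view"},
        {"src": "shoe-top.jpg", "alt": ""},
    ],
    "attributes": {"size": "10", "color": "red"},
}

issues = audit_product(page)
for issue in issues:
    print(issue)
```

Run across a whole catalog, a check like this gives a simple count of pages that still need richer descriptions, alt text, or attributes.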

Aligning product content with generative engine requirements ensures AI models can accurately parse, relate, and recommend products. As Search Engine Journal advises, “Brands must structure product data for machine readability, employ rich media, and ensure voice-friendly content” to thrive in multimodal search environments.

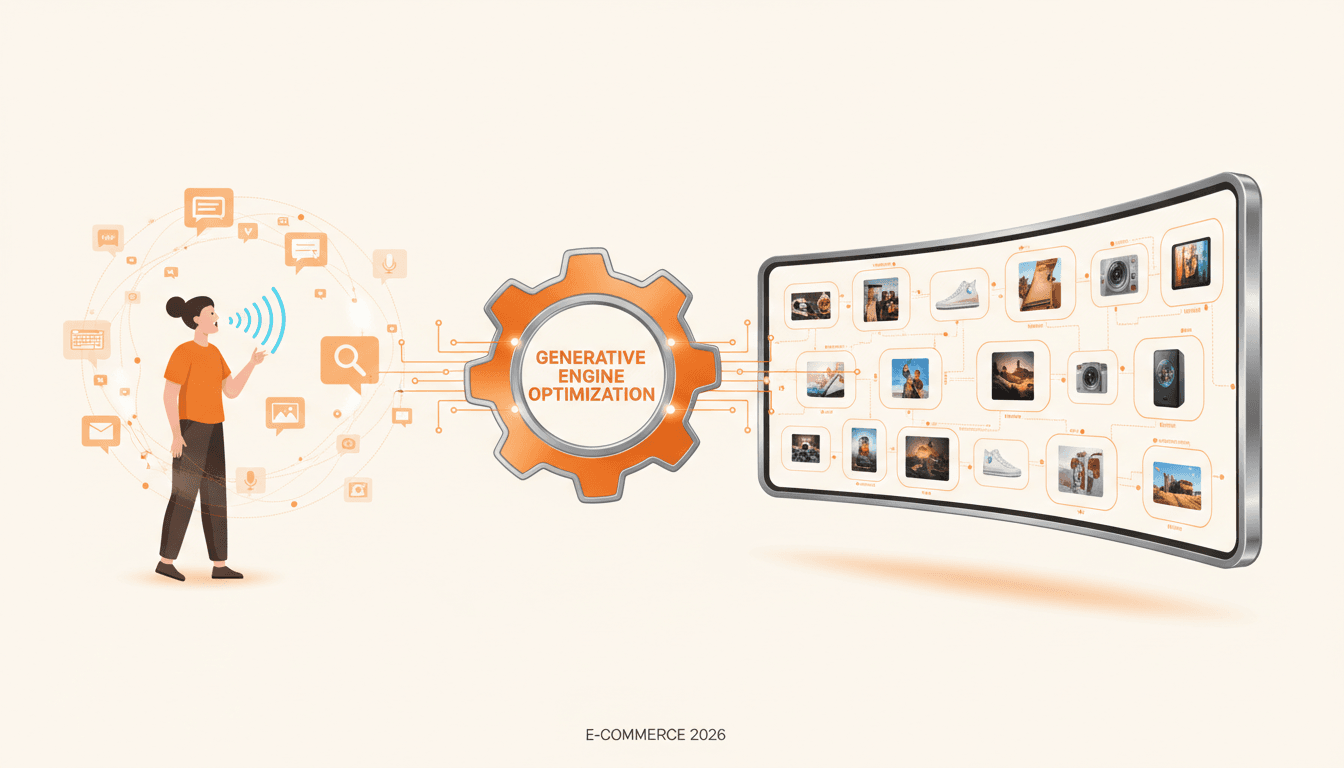

Generative Engine Optimization (GEO) Strategies for Multimodal Queries

Generative Engine Optimization (GEO) extends SEO to the multimodal AI ecosystem. It focuses on tailoring product data to generative models that can interpret text, voice, and image inputs simultaneously.

To implement GEO effectively:

- Text optimization: Use natural, conversational language; anticipate voice phrasing; include long-tail keywords suited to spoken and conversational searches.

- Image optimization: Provide high-resolution, accurately labeled images; use descriptive alt text and relevant metadata; ensure visual features are easily interpretable by AI.

- Voice optimization: Write concise, clear product names and descriptions; avoid jargon; address common customer questions in Q&A formats.
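For the Q&A formats mentioned under voice optimization, one common approach is to publish the questions as schema.org `FAQPage` markup. The sketch below assumes that approach; `FAQPage`, `Question`, and `Answer` are real schema.org types, while the `faq_jsonld` helper and the sample questions are hypothetical.

```python
import json

def faq_jsonld(pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

# Questions phrased the way a shopper might ask them aloud (made-up content).
faq = faq_jsonld([
    ("Is this running shoe waterproof?", "Yes, the upper is waterproof."),
    ("Does it run true to size?", "Most customers order their usual size."),
])
print(json.dumps(faq, indent=2))
```

Short, direct answers like these double as on-page copy and as text a voice assistant could read back.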

[IMG: Diagram of GEO workflow, showing integration of text, image, and voice optimization steps]

AI content generation tools can accelerate GEO efforts by:

- Automatically producing voice-friendly copy from product specifications.

- Tagging images with machine-readable labels.

- Creating dynamic, personalized product descriptions tailored to different user profiles.
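As a rough illustration of the first item, the sketch below turns a product specification into a single spoken-style sentence. It uses a plain template as a stand-in for a generative model, and the spec fields and `voice_copy` helper are hypothetical.

```python
def voice_copy(spec):
    """Render a product spec as one natural-sounding sentence."""
    features = spec.get("features", [])
    if len(features) > 1:
        feature_text = ", ".join(features[:-1]) + " and " + features[-1]
    else:
        feature_text = "".join(features)
    sentence = f"The {spec['brand']} {spec['name']} is a {spec['color']} {spec['category']}"
    if feature_text:
        sentence += f" with {feature_text}"
    return sentence + "."

# Made-up specification.
spec = {
    "brand": "ExampleBrand",
    "name": "Trail Runner",
    "color": "red",
    "category": "running shoe",
    "features": ["a waterproof upper", "a cushioned sole"],
}

copy = voice_copy(spec)
print(copy)
```

In practice a generative tool would vary the phrasing per user profile; the template only shows the input and output shape such a step would have.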

Marketers can streamline GEO implementation with multimodal model APIs from providers such as Google and OpenAI (for example, GPT-4 with vision), combined with custom data pipelines.

The rewards are significant: brands adopting multimodal GEO strategies report a 45% increase in AI-assisted product discovery (Forrester). As International SEO Consultant Aleyda Solis warns, “Brands that fail to embrace multimodal GEO risk falling behind as consumers increasingly expect to search and shop using the modality that suits them best in the moment.”

Challenges and Future Directions in Multimodal Product Discovery

Despite its promise, multimodal AI search faces several challenges, including technical complexity, privacy concerns, and data quality issues. Integrating diverse data sources, ensuring consistent labeling, and maintaining accurate metadata at scale remain significant hurdles. Privacy is a particular concern for voice recordings and customer images.

Key technical challenges include:

- Data fragmentation: Disconnected systems hinder the creation of unified, AI-ready datasets.

- Noise and ambiguity: Voice queries can be misinterpreted; images may lack sufficient context.

- Bias and fairness: AI models must be trained to avoid perpetuating stereotypes or marginalizing minority user preferences.
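One simple mitigation for the misinterpreted voice queries listed above is to snap transcript tokens to a known catalog vocabulary. The sketch below does this with fuzzy string matching from Python's standard `difflib` module. The vocabulary, the 0.8 cutoff, and the `normalize_transcript` helper are assumptions, and production systems typically rely on more robust methods.

```python
import difflib

# Hypothetical catalog vocabulary: lowercase form -> canonical spelling.
VOCAB = {
    "red": "red",
    "nike": "Nike",
    "running": "running",
    "shoes": "shoes",
    "trail": "trail",
    "waterproof": "waterproof",
}

def normalize_transcript(transcript, cutoff=0.8):
    """Replace tokens that closely match a catalog term; leave the rest unchanged."""
    corrected = []
    for token in transcript.lower().split():
        match = difflib.get_close_matches(token, list(VOCAB), n=1, cutoff=cutoff)
        corrected.append(VOCAB[match[0]] if match else token)
    return " ".join(corrected)

print(normalize_transcript("read nikey runing shoes"))
print(normalize_transcript("shoes for hiking"))
```

A high cutoff keeps the correction conservative, so words with no close catalog term (such as "hiking" here) pass through unchanged.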

Looking ahead, the future will be shaped by deeper personalization and real-time multimodal interactions. AI engines will adapt continuously to individual preferences, learning from every interaction to deliver ever more tailored product recommendations.

The role of AI marketing strategists will evolve accordingly. Success will demand fluency in data science, content creation, and cross-functional collaboration—a new breed of marketing leader who bridges human creativity with AI-driven insights and automation.

Actionable Recommendations for E-Commerce Marketing Strategists

To seize the opportunities of the multimodal AI revolution, e-commerce strategists should take these steps:

- Audit your current product data: Identify gaps in metadata, imagery, and voice-readiness.

- Foster cross-functional collaboration: Align marketing, IT, and product teams around multimodal GEO priorities and execution.

- Focus on high-impact categories first: Target sectors like fashion, beauty, or home decor where multimodal search has the greatest effect.

- Invest in AI-ready content: Upgrade product images, enrich metadata, and craft voice-friendly descriptions.

- Leverage AI tools: Employ content generation and tagging solutions to automate and scale GEO.

- Monitor performance closely: Set KPIs such as discovery rates, engagement, and cart abandonment. Use analytics to refine and optimize.

- Educate your team: Stay abreast of evolving AI trends and GEO best practices.

[IMG: Marketing team workshop focused on optimizing e-commerce product data for multimodal AI search]

To drive cross-team success:

- Establish shared goals and metrics for multimodal search optimization.

- Document GEO workflows clearly, so all stakeholders understand their roles.

- Encourage experimentation: Pilot new search modalities and gather user feedback.

Finally, measure success by tracking discovery rates, conversion rates, and customer satisfaction. Remember, multimodal AI search is a moving target—agility and continuous optimization are essential to staying ahead.
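The KPIs named above can be computed per search modality from session logs. The following sketch assumes a hypothetical log format and particular metric definitions (discovery rate as the share of sessions with a product click, cart abandonment as the share of carts without a purchase); your analytics platform may define them differently.

```python
from collections import defaultdict

def kpis_by_modality(sessions):
    """Aggregate discovery rate and cart abandonment for each search modality."""
    counts = defaultdict(lambda: {"sessions": 0, "discovered": 0, "carts": 0, "abandoned": 0})
    for s in sessions:
        c = counts[s["modality"]]
        c["sessions"] += 1
        if s["clicked_product"]:
            c["discovered"] += 1
        if s["added_to_cart"]:
            c["carts"] += 1
            if not s["purchased"]:
                c["abandoned"] += 1
    return {
        modality: {
            "discovery_rate": c["discovered"] / c["sessions"],
            # None when no carts were created, so the rate is undefined.
            "cart_abandonment": c["abandoned"] / c["carts"] if c["carts"] else None,
        }
        for modality, c in counts.items()
    }

# Made-up session log.
sessions = [
    {"modality": "image", "clicked_product": True, "added_to_cart": True, "purchased": True},
    {"modality": "image", "clicked_product": True, "added_to_cart": True, "purchased": False},
    {"modality": "voice", "clicked_product": False, "added_to_cart": False, "purchased": False},
    {"modality": "voice", "clicked_product": True, "added_to_cart": True, "purchased": True},
    {"modality": "text", "clicked_product": True, "added_to_cart": False, "purchased": False},
]

report = kpis_by_modality(sessions)
for modality, metrics in report.items():
    print(modality, metrics)
```

Breaking the metrics out by modality shows where optimization effort is paying off and where a search mode still underperforms.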

Conclusion

Multimodal AI search is revolutionizing e-commerce product discovery by combining the power of text, voice, and image to deliver truly personalized shopping experiences. As 2026 unfolds, brands that embrace Generative Engine Optimization (GEO) and invest in AI-ready product data will win the loyalty of digital-native shoppers and outpace competitors.

Stay ahead of the curve—begin your multimodal GEO journey now and shape the future of e-commerce.

Hexagon Team

Published March 27, 2026