How to Protect Your E-commerce Brand from AI Hallucination Risks: A Complete Guide

In the AI-powered e-commerce era, brand reputation hinges on the accuracy of automated recommendations. Discover what AI hallucination means, why it's a growing risk for online retailers, and how to proactively safeguard your brand and customer trust.

[IMG: E-commerce dashboard illustrating AI product recommendations]

In today’s AI-driven e-commerce world, success hinges not only on offering quality products but also on the precision of AI-powered recommendations. Alarmingly, up to 30% of AI product suggestions contain hallucinations—fabricated or inaccurate information that can mislead customers and damage trust (Gartner). This comprehensive guide explores what AI hallucination means for your brand, why it’s becoming a critical threat, and how you can actively protect your e-commerce business from costly misinformation.

Ready to safeguard your e-commerce brand from AI hallucination risks? Book a free 30-minute consultation with Hexagon’s AI marketing experts today.

Understanding AI Hallucination in E-commerce

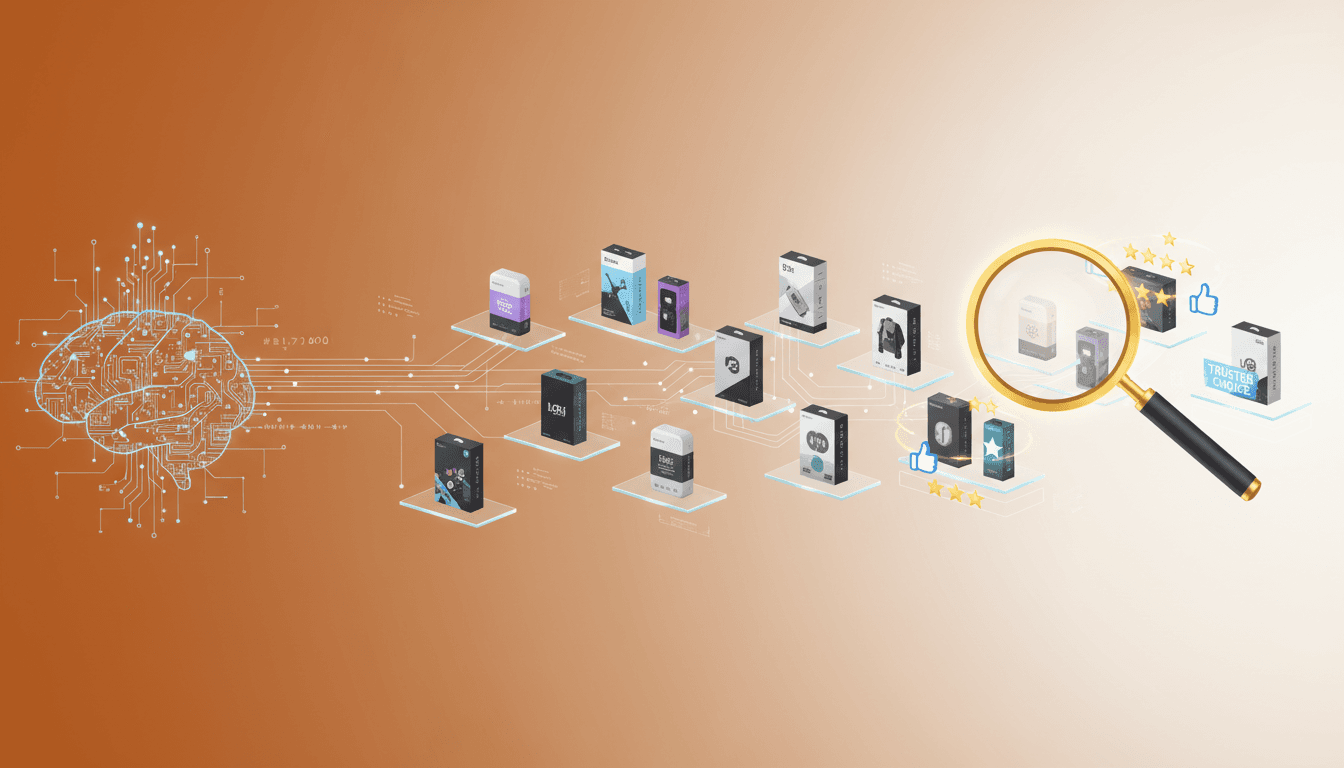

The rise of generative AI has revolutionized how brands engage with shoppers, enabling personalized experiences at scale. Yet, this innovation introduces a unique challenge: AI hallucination. This occurs when AI models produce plausible but false or fabricated information.

AI hallucination happens when generative models—like ChatGPT or custom recommendation engines—fill gaps in their training data with invented details. The Stanford Human-Centered AI Institute explains that hallucinations typically arise when the AI lacks sufficient reliable data or relevant context.

In e-commerce, hallucinations take several forms:

- Incorrect product recommendations: Suggesting items that don’t exist or mismatching product specifications.

- Misleading reviews: Creating fake customer testimonials or ratings that were never submitted.

- False brand claims: Attributing features, awards, or sustainability certifications that a product or brand does not have.

For instance, an AI-powered search assistant might recommend a discontinued or out-of-stock item or falsely claim an eco-friendly certification applies to a product. These errors are far from trivial glitches—they can severely impact customer satisfaction and even lead to regulatory issues.

It’s important to distinguish hallucinations from general AI errors:

- Hallucinations are fabricated, plausible-sounding statements, not simple mistakes like typos or misclassifications.

- They occur frequently in e-commerce AI content, with up to 30% of product recommendations containing hallucinations (Gartner ‘AI in Commerce’ Report).

- Their impact is magnified because purchasing decisions and brand reputation are at stake.

“AI hallucinations are among the biggest emerging threats to brand trust in the digital marketplace. Brands must hold AI-generated content to the same rigorous standards as any other public communication,” warns Dr. Fei-Fei Li, Co-Director of Stanford HAI.

As AI-powered search and recommendation engines become ubiquitous, understanding and mitigating hallucination risks is vital for every e-commerce brand.

[IMG: Illustration of AI model generating product recommendations with some flagged as false/hallucinated]

The Impact of AI-Generated Misinformation on Brand Reputation and Consumer Trust

Trust forms the foundation of any successful e-commerce brand. When AI-generated misinformation reaches consumers, the fallout can be swift and severe.

A recent PwC Global Consumer Insights Pulse survey revealed that 68% of e-commerce consumers would lose trust in a brand after receiving false information from an AI assistant. This erosion of trust extends beyond immediate purchases—it influences reviews, ratings, and word-of-mouth recommendations, amplifying the damage.

Hallucinated content undermines brand credibility in multiple ways:

- Negative consumer sentiment: Inaccurate recommendations or misleading product details prompt customers to express frustration in reviews and social media.

- Decline in trust signals: Brands see an average 25% drop in trust indicators such as ratings and reviews following AI misinformation incidents (Ipsos Consumer Trust Survey).

- Long-term brand harm: Misinformation can persist in search results and online conversations, deterring potential customers.

For example, an AI chatbot that consistently suggests incompatible products may trigger a rise in returns, negative feedback, and customer service complaints. These issues accumulate over time, making brand recovery challenging.

“A single instance of AI-driven misinformation can erode years of consumer trust. Continuous monitoring and swift correction are essential to protect brand equity,” emphasizes Brian Solis, Global Innovation Evangelist at Salesforce.

The ripple effects extend further:

- Increased customer support load: Misinformation drives up support tickets, raising operational costs.

- Regulatory risks: False product claims, especially in sensitive areas like health or sustainability, can invite legal scrutiny.

- Greater vulnerability for new brands: According to Harvard Business Review, emerging or niche brands with limited digital presence are especially susceptible, as AI models may fill data gaps with inaccurate assumptions.

With AI-powered shopping assistants becoming standard on platforms like ChatGPT, Perplexity, and Claude, brands face shrinking margins for error—and rising costs for inaction.

[IMG: Visual showing declining trust metrics after an AI hallucination incident]

Detecting and Preventing AI Misinformation in Your Brand’s AI Ecosystem

The most effective defense against AI hallucination risks is proactive detection and prevention. Leading brands are adopting these strategies:

1. Continuous Monitoring and Auditing

Consistent review of AI-generated content is critical. Brands that proactively audit AI outputs catch and correct issues before they escalate.

- Routine content audits: Schedule frequent checks of AI-generated recommendations, product descriptions, and customer communications.

- Human-in-the-loop validation: Involve experts to review high-impact content.

- Feedback loops: Enable customers and staff to flag suspicious AI outputs easily.

Nina Schick, AI Policy Expert & Author, notes, “Brands that proactively audit AI-generated content maintain higher consumer confidence amid algorithmic recommendations.”
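
A routine audit can start as a sample of AI outputs pulled into a human review queue. The sketch below is one possible approach rather than a prescribed process. It assumes each output is a dictionary with a hypothetical `high_impact` flag, sends every high-impact item to review, and samples one in every `one_in` of the remaining items.

```python
import random


def select_for_audit(outputs, one_in=10, seed=None):
    """Pick AI outputs for human review.

    Assumes each output is a dict with a boolean "high_impact" key
    (a hypothetical field, e.g. set for bestsellers or regulated claims).
    """
    rng = random.Random(seed)  # seedable so an audit run can be reproduced
    high_impact = [o for o in outputs if o["high_impact"]]
    routine = [o for o in outputs if not o["high_impact"]]
    # Ceiling division: review at least one routine item whenever any exist.
    sample_size = -(-len(routine) // one_in)
    return high_impact + rng.sample(routine, sample_size)


# Illustrative data: 50 outputs, every tenth one marked high impact.
outputs = [{"id": i, "high_impact": i % 10 == 0} for i in range(50)]
review_queue = select_for_audit(outputs, one_in=10, seed=42)
```

Reviewing all high-impact items while sampling the rest keeps expert time focused where an error would cost the most.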

2. AI Monitoring Tools and Emerging Technologies

Advanced tools now automate hallucination detection, flagging anomalies or suspect recommendations in real time.

- Hallucination detection APIs: Solutions from leaders like Google Cloud AI analyze content before it reaches consumers (Google Cloud AI Blog).

- Active monitoring systems: Brands deploying these tools report up to a 40% reduction in hallucination exposure (McKinsey & Company).

- Automated validation: Integrate APIs that cross-verify AI recommendations against authoritative product databases.
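
The automated validation step can be sketched as a lookup against the product catalog before a recommendation is shown. This is a minimal illustration under stated assumptions: the `Product` schema, the `claimed_certifications` field, and the sample SKUs are all hypothetical, and a real integration would read from your own product database.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Product:
    """One record in the authoritative catalog (hypothetical schema)."""
    sku: str
    name: str
    in_stock: bool
    certifications: frozenset = field(default_factory=frozenset)


def validate_recommendation(rec: dict, catalog: dict) -> list:
    """Return a list of problems found in one AI recommendation.

    `rec` is assumed to carry the recommended SKU plus any
    certifications the model claimed for that product.
    """
    product = catalog.get(rec["sku"])
    if product is None:
        # The model recommended something the catalog does not contain.
        return [f"unknown SKU {rec['sku']!r}"]

    problems = []
    if not product.in_stock:
        problems.append(f"{product.sku} is out of stock")
    # Any claimed certification missing from the catalog is unverified.
    unverified = set(rec.get("claimed_certifications", [])) - product.certifications
    for cert in sorted(unverified):
        problems.append(f"unverified certification {cert!r} for {product.sku}")
    return problems


catalog = {
    "TEE-001": Product("TEE-001", "Organic Cotton Tee", True, frozenset({"GOTS"})),
    "MUG-002": Product("MUG-002", "Ceramic Mug", False),
}

recommendations = [
    {"sku": "TEE-001", "claimed_certifications": ["GOTS"]},
    {"sku": "MUG-002", "claimed_certifications": ["Fair Trade"]},
    {"sku": "HAT-999", "claimed_certifications": []},
]

# Map each recommended SKU to the problems found (empty list means clean).
report = {rec["sku"]: validate_recommendation(rec, catalog) for rec in recommendations}
```

Recommendations with a non-empty problem list could be blocked or routed to human review instead of being shown to the shopper.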

3. Data Accuracy and Validation

AI models depend on the quality of the data they are trained on and the product data they draw on when answering. Ensuring that data is accurate and fresh is essential.

- Centralized data management: Maintain a single source of truth for product information.

- Frequent updates: Synchronize product details across all channels and AI systems.

- Collaborative data sharing: Work closely with AI vendors to provide accurate, up-to-date brand data.

Leah Kim, VP of Digital Strategy at L’Oreal, stresses, “Collaborating directly with AI platforms ensures our brand data remains accurate and current—minimizing hallucination risks.”
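
Keeping channels in sync with a single source of truth comes down to comparing each channel's copy against the central record and reporting the differences. The following is a rough sketch under assumptions: product records are plain dictionaries keyed by SKU, and the field names and sample values are invented for illustration.

```python
def find_mismatches(source_of_truth: dict, channel_copy: dict) -> dict:
    """Compare a channel's product data against the central record.

    Returns {sku: {field: (expected, actual)}} for every differing field.
    A SKU absent from the channel is reported under the key "__missing__".
    """
    mismatches = {}
    for sku, truth in source_of_truth.items():
        copy = channel_copy.get(sku)
        if copy is None:
            mismatches[sku] = {"__missing__": (truth, None)}
            continue
        diffs = {
            key: (value, copy.get(key))
            for key, value in truth.items()
            if copy.get(key) != value
        }
        if diffs:
            mismatches[sku] = diffs
    return mismatches


# Illustrative data: the feed sent to an AI platform has a stale stock flag.
central = {
    "TEE-001": {"price": 24.0, "in_stock": True},
    "MUG-002": {"price": 12.5, "in_stock": False},
}
ai_platform_feed = {
    "TEE-001": {"price": 24.0, "in_stock": True},
    "MUG-002": {"price": 12.5, "in_stock": True},
}

stale = find_mismatches(central, ai_platform_feed)
```

Running a check like this on a schedule surfaces stale fields before an AI system repeats them to customers.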

4. Impact of Proactive Measures

The benefits are clear:

- Brands using active AI monitoring report up to a 40% decrease in hallucination exposure (McKinsey & Company).

- Customer service tickets related to misinformation drop by 35% after timely AI error corrections (Zendesk CX Trends 2024).

[IMG: Screenshot of a dashboard with AI monitoring analytics and flagged hallucinations]

Best Practices for Protecting Brand Reputation in AI-Powered Search and Recommendations

As AI-driven recommendations become standard, safeguarding your brand requires a proactive, multi-layered strategy. Here’s how to stay ahead of hallucination risks:

1. Implement Proactive Brand Safety Strategies

- Set clear content guidelines: Define standards for AI-generated content regarding accuracy, tone, and compliance.

- Whitelist verified data sources: Restrict AI access to vetted product databases and official brand materials.

- Regularly update AI models: Retrain models with the latest product information and brand guidelines.
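
Restricting an AI system to verified sources can be sketched as a filter applied to documents before they reach the model. This example assumes a retrieval-style setup in which each document records a `source_url`. The host names and document shape are hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical allowlist of vetted hosts (official catalog and brand site).
ALLOWED_HOSTS = {"catalog.example.com", "brand.example.com"}


def is_allowed(url: str, allowed_hosts=ALLOWED_HOSTS) -> bool:
    """True only when the URL's host exactly matches an allowlisted host."""
    host = urlparse(url).hostname  # None when the URL has no scheme or host
    return host is not None and host in allowed_hosts


def filter_context(documents: list, allowed_hosts=ALLOWED_HOSTS) -> list:
    """Keep only documents that come from vetted sources."""
    return [doc for doc in documents if is_allowed(doc["source_url"], allowed_hosts)]


documents = [
    {"source_url": "https://catalog.example.com/sku/TEE-001", "text": "Organic Cotton Tee"},
    {"source_url": "https://random-forum.example.org/thread/9", "text": "I heard it is vegan"},
    {"source_url": "https://catalog.example.com.evil.test/sku/1", "text": "Spoofed page"},
]

vetted = filter_context(documents)
```

Exact host matching is a deliberate choice here: a substring or prefix check would also accept the look-alike host in the third document.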

2. Collaborate Closely with AI Platforms

Building strong partnerships with AI providers is critical for data accuracy.

- Data partnerships: Share real-time product feeds and updates.

- Align on brand voice: Ensure AI outputs reflect your brand’s unique tone and values.

- Maintain ongoing communication: Establish feedback channels for issue reporting and rapid fixes.

As Leah Kim of L’Oreal points out, direct collaboration with AI platforms keeps brand data accurate and current, which reduces hallucinated recommendations.

3. Conduct Regular Audits and Human-in-the-Loop Interventions

Even sophisticated AI benefits from human oversight.

- Periodic audits: Review AI-generated content monthly or quarterly, focusing on high-visibility products.

- Expert review panels: Assign product managers or compliance officers to validate critical recommendations.

- Crowdsourced feedback: Encourage customers to report questionable AI suggestions via simple interfaces.

4. Enhance Training Data and Feedback Loops

Robust, diverse training data is key to minimizing hallucinations.

- Expand data sources: Include customer interaction logs, verified reviews, and current inventory data.

- Real-time feedback loops: Use customer input to retrain and fine-tune AI models.

- Monitor model drift: Regularly assess AI performance to detect and address emerging hallucination patterns.
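
One simple way to watch for drift is to track the share of recent outputs flagged as hallucinated and raise an alert when it crosses a threshold. The sketch below assumes flags come from reviewers or an automated check. The class name, window size, and threshold are illustrative choices, not recommended values.

```python
from collections import deque


class HallucinationRateMonitor:
    """Rolling hallucination rate over the most recent N outputs."""

    def __init__(self, window_size: int = 100, alert_threshold: float = 0.05):
        # deque with maxlen silently drops the oldest entry when full.
        self.window = deque(maxlen=window_size)
        self.alert_threshold = alert_threshold

    def record(self, flagged: bool) -> None:
        """Record whether one AI output was flagged as hallucinated."""
        self.window.append(bool(flagged))

    @property
    def rate(self) -> float:
        """Share of flagged outputs in the current window (0.0 if empty)."""
        return sum(self.window) / len(self.window) if self.window else 0.0

    def should_alert(self) -> bool:
        """Alert only on a full window, to avoid noise from tiny samples."""
        return len(self.window) == self.window.maxlen and self.rate > self.alert_threshold


monitor = HallucinationRateMonitor(window_size=10, alert_threshold=0.2)
for _ in range(10):
    monitor.record(False)          # a clean baseline period
baseline_alert = monitor.should_alert()
for _ in range(3):
    monitor.record(True)           # three flagged outputs arrive
drift_alert = monitor.should_alert()
```

In this run the window ends with 3 flagged outputs out of 10, so the rate exceeds the 0.2 threshold and the second check triggers an alert.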

5. Document and Share Best Practices Across Teams

- Internal playbooks: Create shared guidelines for AI content generation, review, and escalation.

- Cross-functional collaboration: Involve marketing, IT, legal, and customer service teams in AI risk management.

- Continuous training: Educate staff on emerging AI risks and response protocols.

This comprehensive approach results in:

- Fewer misinformation incidents

- Faster error response

- Stronger, more consistent brand reputation across channels

Brands embedding these best practices into their AI marketing operations will be well-positioned to thrive amid rapid digital change.

[IMG: Workflow diagram showing brand, AI platform, and customer feedback loop]

Responding to AI Hallucination Incidents: Crisis Management and Rapid Correction

Even with precautions, hallucination incidents may occur. A swift, transparent, and systematic response is essential to minimize damage and rebuild trust.

1. Rapid Identification and Response

- Real-time monitoring: Use monitoring tools to detect misinformation immediately.

- Incident escalation protocols: Define clear workflows for investigating and correcting errors.

- Immediate corrections: Promptly update or remove hallucinated content across all channels.

Speed matters: brands correcting AI errors quickly see a 35% reduction in customer service tickets related to misinformation (Zendesk CX Trends 2024).
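
An escalation protocol can be written down as explicit triage rules so that every incident is routed the same way. The following sketch is purely illustrative: the severity levels, the regulated claim categories, the 100-customer threshold, and the team names are assumptions to adapt to your own organization.

```python
from enum import Enum


class Severity(Enum):
    LOW = "low"
    HIGH = "high"
    CRITICAL = "critical"


# Hypothetical claim categories that carry regulatory exposure.
REGULATED_CLAIM_TYPES = {"health", "sustainability"}

# Hypothetical routing table: who handles each severity level.
ESCALATION_PATH = {
    Severity.LOW: ["content team"],
    Severity.HIGH: ["content team", "customer support lead"],
    Severity.CRITICAL: ["content team", "customer support lead", "legal"],
}


def triage(incident: dict) -> Severity:
    """Classify an incident from its claim type and customer reach."""
    if incident["claim_type"] in REGULATED_CLAIM_TYPES:
        return Severity.CRITICAL
    if incident["customers_affected"] >= 100:  # assumed threshold
        return Severity.HIGH
    return Severity.LOW


# Example: a false sustainability claim seen by only a few customers.
incident = {"claim_type": "sustainability", "customers_affected": 12}
severity = triage(incident)
owners = ESCALATION_PATH[severity]
```

The example incident is classified as critical despite its small reach, because false claims in regulated areas can invite legal scrutiny regardless of how many customers saw them.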

2. Transparent Communication with Customers

Honesty is vital when addressing AI-generated errors.

- Proactive notifications: Inform affected customers about the error, corrective steps, and any remedies.

- Public statements: If widespread, issue transparent announcements via your website or social media.

- Personalized outreach: For significant issues, consider direct contact with affected customers to restore trust.

3. Fast AI Corrections to Minimize Damage

- Immediate model retraining: Update AI with corrected data to prevent repeat errors.

- Ongoing monitoring: Track post-incident AI performance to confirm resolution.

- Document learnings: Summarize lessons and update crisis protocols after each incident.

As Brian Solis of Salesforce warns, a single AI-driven misinformation incident can erode years of consumer trust, which makes monitoring and rapid correction crucial for brand protection.

A well-managed response can turn setbacks into opportunities by:

- Demonstrating accountability and transparency

- Reassuring customers of your commitment to quality

- Reducing long-term reputational harm

[IMG: Customer notification template addressing an AI error]

The Future of Brand Safety in AI-Driven E-commerce

AI adoption in e-commerce continues to accelerate, offering tremendous opportunities alongside new brand safety challenges. Vigilance against AI hallucination must become a strategic priority.

Emerging trends shaping AI risk management include:

- Advanced technologies: AI vendors are developing sophisticated hallucination detection APIs and content validation layers to prevent errors before reaching consumers (Google Cloud AI Blog).

- Industry standards: New guidelines and best practices are being established for responsible AI use in marketing and e-commerce.

- Growing transparency demands: Consumers increasingly expect clear disclosures when interacting with AI systems.

Hexagon leads this evolution, equipping brands with tools, expertise, and strategies to navigate AI-powered marketing complexities. From continuous monitoring to rapid response protocols, Hexagon ensures your brand remains resilient and trusted—even as AI technologies evolve.

For example, Hexagon’s AI monitoring solutions provide real-time visibility into AI-generated content, automated anomaly detection, and expert remediation—empowering you to focus on growth, not crisis management.

The path forward is clear: proactive, data-driven vigilance is the cornerstone of brand safety in the AI era.

[IMG: Futuristic ecommerce interface with Hexagon branding and AI safety controls]

Conclusion: Take Action to Protect Your Brand Today

AI hallucination presents a formidable risk—but with the right strategies, tools, and partners, your e-commerce brand can stay ahead. By deeply understanding AI hallucination challenges, implementing rigorous monitoring, and fostering transparent communication, you’ll build lasting consumer trust and safeguard your brand’s reputation.

Ready to safeguard your e-commerce brand from AI hallucination risks? Book a free 30-minute consultation with Hexagon’s AI marketing experts today.

Stay vigilant, stay proactive, and let Hexagon help you confidently navigate the future of AI-powered e-commerce.

Hexagon Team

Published March 8, 2026