EU AI Act and Ecommerce: What Merchants Need to Know Before August 2026

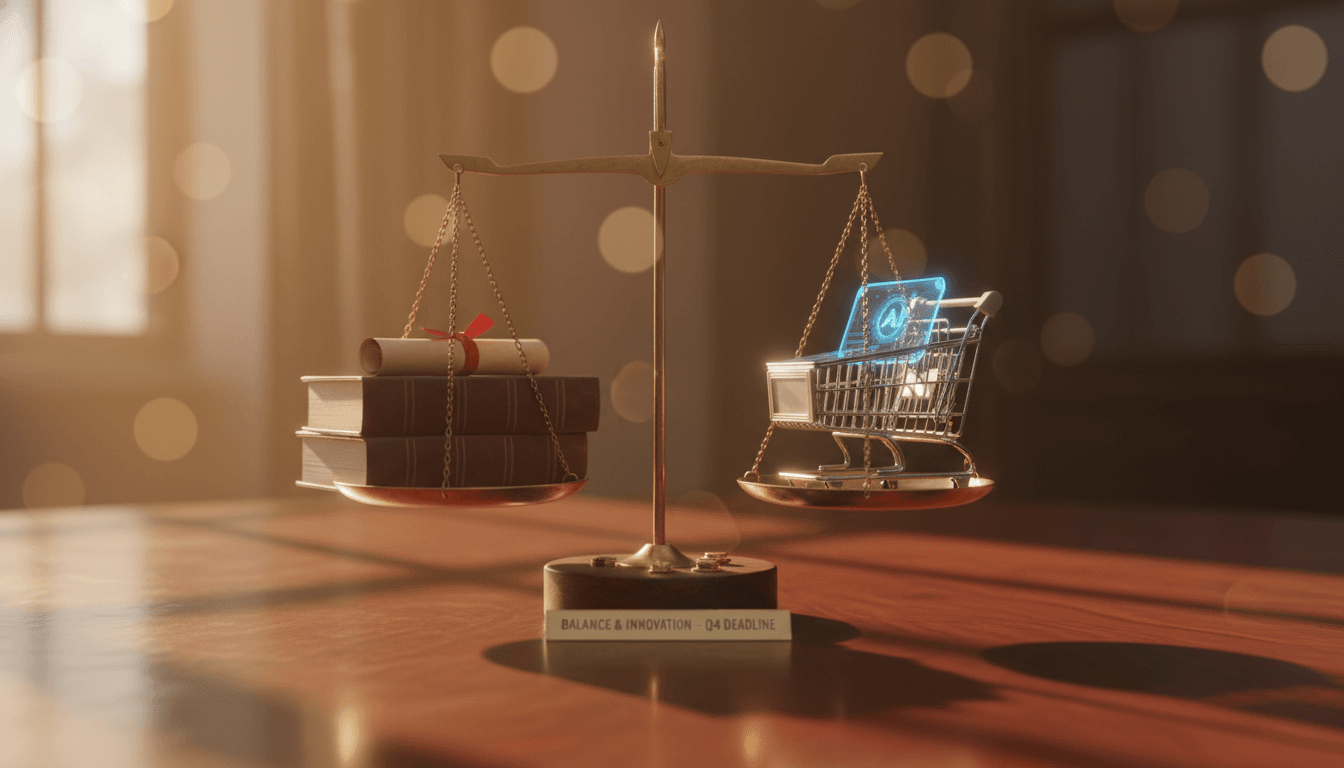

Last updated: March 2026

On August 2, 2026, the European Union’s AI Act begins enforcing its high-risk AI system rules. For ecommerce businesses that sell to EU consumers, use AI-driven pricing, deploy recommendation engines, or operate autonomous purchasing agents, this is not a future concern. It is a compliance deadline, backed by penalties of up to EUR 15 million or 3% of global annual revenue for high-risk non-compliance, rising to EUR 35 million or 7% for prohibited practices (whichever is higher in each case).

The first major fine under the AI Act has already landed. In March 2026, regulators issued a EUR 35 million penalty, signaling that enforcement is not theoretical. Meanwhile, Brazil’s data protection authority (ANPD) levied over EUR 12 million in LGPD fines in Q1 2025 alone, and the Colorado AI Act takes effect on June 30, 2026.

If your organization uses AI in any customer-facing capacity, the window to prepare is closing. This guide breaks down what the EU AI Act means for ecommerce, how it intersects with GDPR, what other jurisdictions are doing, and the specific steps your team should take now.

What the EU AI Act Means for Ecommerce

The EU AI Act classifies AI systems into four risk tiers: unacceptable, high-risk, limited-risk, and minimal-risk. The ecommerce applications with the greatest regulatory exposure fall into the high-risk and limited-risk categories. The enforcement timeline is staggered:

| Date | Milestone |
|---|---|
| February 2, 2025 | Prohibited AI practices enforced |
| August 2, 2025 | General-purpose AI model rules enforced |
| August 2, 2026 | High-risk AI system rules enforced (Annex III use cases) |
| August 2, 2027 | High-risk rules enforced for AI embedded in products covered by EU product-safety legislation (Annex I) |

Which Ecommerce Systems Qualify as High-Risk?

The Act does not contain provisions drafted specifically for ecommerce or agentic commerce. However, AI systems that influence financial decisions, handle sensitive consumer data, or make determinations that significantly affect individuals may be classified as high-risk. In practical terms, the following ecommerce applications are candidates for high-risk classification:

- AI-driven credit or financing decisions at checkout (e.g., buy-now-pay-later scoring), which fall under Annex III’s creditworthiness category

- Automated pricing engines that vary prices based on consumer profiling

- AI agents that autonomously execute purchases on behalf of consumers

- Recommendation systems that materially influence purchasing decisions, particularly when they process sensitive personal data (health, financial status, biometric inferences)

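- Automated fraud detection systems that block transactions or flag accounts (Annex III expressly excludes AI used to detect financial fraud from its creditworthiness category, so classification here is less certain)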

High-risk classification triggers mandatory obligations around technical documentation, risk assessments, human oversight mechanisms, transparency disclosures, accuracy standards, and cybersecurity requirements.
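The screening logic above can be captured as a first-pass triage over an inventory of AI systems. The sketch below is a hypothetical Python example: the `AISystem` fields and tier labels are our own assumptions, credit scoring maps to high-risk because Annex III lists creditworthiness assessment, and every other flag yields "treat as high-risk" pending review rather than a final answer. It is a filter for legal review, not a legal classification.

```python
from dataclasses import dataclass
from typing import List, Tuple


# Hypothetical inventory record: the flags mirror the list above, not any official schema.
@dataclass
class AISystem:
    name: str
    credit_scoring: bool = False           # e.g. BNPL scoring of consumers at checkout
    profiling_based_pricing: bool = False  # prices vary with consumer profiles
    autonomous_purchasing: bool = False    # agent executes purchases for consumers
    uses_sensitive_data: bool = False      # health, financial status, biometric inferences


def triage(system: AISystem) -> Tuple[str, List[str]]:
    """Return (provisional_tier, reasons) as input to legal review.

    This is a screening heuristic, not a legal classification under the Act.
    """
    if system.credit_scoring:
        # Creditworthiness assessment of natural persons is listed in Annex III.
        return "high-risk", ["creditworthiness assessment (Annex III)"]
    reasons = []
    if system.profiling_based_pricing:
        reasons.append("pricing varies with consumer profiling")
    if system.autonomous_purchasing:
        reasons.append("agent executes purchases for consumers")
    if system.uses_sensitive_data:
        reasons.append("processes sensitive personal data")
    if reasons:
        # Ambiguous cases: assess as though high-risk until counsel decides otherwise.
        return "treat-as-high-risk", reasons
    return "review-for-limited-or-minimal", []


inventory = [
    AISystem("checkout-bnpl", credit_scoring=True),
    AISystem("shopping-agent", autonomous_purchasing=True, uses_sensitive_data=True),
    AISystem("search-ranking"),
]
for system in inventory:
    print(system.name, *triage(system))
```

Keeping the reasons alongside the tier gives reviewers the rationale they need when documenting the classification decision.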

A critical unresolved question remains: whether the “human oversight” requirement demands real-time human control at the moment of purchase, or whether pre-configured guardrails and post-hoc review satisfy the obligation. Until regulators issue formal guidance, organizations should err on the side of implementing meaningful human-in-the-loop mechanisms for significant purchasing decisions.
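One way to hedge between the two readings of "human oversight" is a routing gate that requires real-time approval for higher-stakes purchases and applies pre-configured guardrails plus post-hoc review to the rest. The sketch below is hypothetical: the EUR 250 threshold, the "first-time merchant" rule, and the function names are illustrative assumptions, and whether such a gate satisfies the Act remains the open question described above.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical guardrail: set the threshold from your own risk assessment.
APPROVAL_THRESHOLD_EUR = Decimal("250.00")


@dataclass(frozen=True)
class PurchaseProposal:
    agent_id: str
    merchant: str
    amount_eur: Decimal


def route(proposal: PurchaseProposal, known_merchants: set,
          threshold: Decimal = APPROVAL_THRESHOLD_EUR) -> str:
    """Decide whether an agent-initiated purchase needs a human decision first.

    Large purchases and first-time merchants wait for real-time approval;
    everything else proceeds under pre-configured guardrails and is queued
    for post-hoc human review.
    """
    if proposal.amount_eur >= threshold or proposal.merchant not in known_merchants:
        return "hold-for-human-approval"
    return "auto-approve-and-queue-for-review"


known = {"shoes.example", "books.example"}
print(route(PurchaseProposal("agent-7", "shoes.example", Decimal("89.90")), known))   # → auto-approve-and-queue-for-review
print(route(PurchaseProposal("agent-7", "newshop.example", Decimal("19.00")), known))  # → hold-for-human-approval
```

Using `Decimal` avoids floating-point surprises right at the threshold boundary.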

Penalties: The Financial Stakes

The AI Act’s penalty structure is graduated based on severity and risk classification:

| Violation Type | Maximum Penalty (whichever is higher) |
|---|---|
| Prohibited AI practices | EUR 35 million or 7% of global annual revenue |
| High-risk system non-compliance | EUR 15 million or 3% of global annual revenue |
| Providing incorrect information to authorities | EUR 7.5 million or 1% of global annual revenue |

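For context, under the prohibited-practices tier, 7% of global annual revenue for a mid-size ecommerce company generating EUR 500 million would be EUR 35 million; for a large retailer generating EUR 5 billion, the exposure is EUR 350 million. Under the high-risk tier (3%), the same two companies face caps of EUR 15 million and EUR 150 million.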
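The penalty arithmetic reduces to comparing a fixed amount with a share of turnover. The sketch below computes the statutory ceiling per tier from the table above. It also encodes the Act’s rule (Article 99(6)) that for SMEs, including start-ups, the lower of the two amounts applies. The Act bases the percentage on total worldwide annual turnover for the preceding financial year, which we treat as the revenue input; the tier keys and function name are illustrative.

```python
from decimal import Decimal

# Fine caps from the table above: (fixed amount in EUR, share of worldwide annual turnover).
TIERS = {
    "prohibited": (Decimal(35_000_000), Decimal("0.07")),
    "high_risk": (Decimal(15_000_000), Decimal("0.03")),
    "incorrect_info": (Decimal(7_500_000), Decimal("0.01")),
}


def max_fine_eur(turnover_eur: Decimal, tier: str, sme: bool = False) -> Decimal:
    """Upper bound of the fine for one violation tier.

    Regulators set the actual amount at or below this cap based on the circumstances.
    """
    fixed, share = TIERS[tier]
    turnover_based = share * turnover_eur
    # Larger companies: whichever is higher. SMEs and start-ups: whichever is lower.
    return min(fixed, turnover_based) if sme else max(fixed, turnover_based)


for turnover in (Decimal(500_000_000), Decimal(5_000_000_000)):
    print(turnover, max_fine_eur(turnover, "prohibited"), max_fine_eur(turnover, "high_risk"))
```

The fixed amount acts as a floor on the cap for large companies: at EUR 100 million in revenue, 7% is only EUR 7 million, but the prohibited-practices ceiling remains EUR 35 million.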

The First Major Fine Is Already Here

In March 2026, regulators levied a EUR 35 million fine under the AI Act, the first penalty at the maximum threshold. While the full details of the enforcement action are still emerging, the signal is unambiguous: European regulators intend to enforce aggressively from the start, not after a grace period.

This follows the pattern established by GDPR, where early, high-profile penalties (British Airways at GBP 20 million, H&M at EUR 35 million) set the tone for years of enforcement that followed.

GDPR Article 22 and Automated Decision-Making

The AI Act does not operate in isolation. For ecommerce businesses, it overlaps significantly with GDPR, particularly Article 22, which restricts fully automated decisions that produce legal or similarly significant effects on individuals.

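Under GDPR, with Article 22 at the center, organizations using automated decision-making should:

- Provide meaningful information about the logic involved in automated decisions (Articles 13 to 15)

- Enable individuals to contest automated decisions, express their point of view, and obtain human intervention (Article 22(3))

- Establish a valid legal basis for each stage of data processing (Article 6), such as consent, legitimate interests, or contractual necessity; solely automated decisions with legal or similarly significant effects are permitted only when necessary for a contract, authorized by law, or based on explicit consent (Article 22(2))

- Conduct Data Protection Impact Assessments (DPIAs) before deploying high-risk automated processing (Article 35)

- Maintain durable, searchable records of agent plans, tool calls executed, data categories observed, and data destinations, to demonstrate accountability (Article 5(2))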
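A per-stage register makes the legal-basis requirement checkable. The sketch below is a hypothetical Python register: stage names and label strings are our own, while the Article 6 bases and the Article 22(2) exceptions (contract necessity, authorization by law, explicit consent) come from the GDPR text. Note that legitimate interests, although a valid Article 6 basis, does not by itself permit a solely automated significant decision. The code flags gaps; deciding whether a decision is "significant" stays a legal judgment.

```python
from dataclasses import dataclass
from typing import List, Optional

# GDPR Article 6(1) lawful bases, abbreviated.
ARTICLE_6_BASES = {
    "consent", "contract", "legal_obligation",
    "vital_interests", "public_task", "legitimate_interests",
}
# Article 22(2): the only grounds for solely automated decisions with
# legal or similarly significant effects.
ARTICLE_22_EXCEPTIONS = {"contract_necessity", "authorised_by_law", "explicit_consent"}


@dataclass
class ProcessingStage:
    name: str
    lawful_basis: Optional[str]
    significant_automated_decision: bool = False
    article_22_exception: Optional[str] = None
    dpia_completed: bool = False


def problems(stage: ProcessingStage) -> List[str]:
    """List compliance gaps for one stage of a processing pipeline."""
    issues = []
    if stage.lawful_basis not in ARTICLE_6_BASES:
        issues.append(f"{stage.name}: no valid Article 6 lawful basis")
    if stage.significant_automated_decision:
        if stage.article_22_exception not in ARTICLE_22_EXCEPTIONS:
            issues.append(f"{stage.name}: no Article 22(2) exception")
        if not stage.dpia_completed:
            issues.append(f"{stage.name}: DPIA not completed")
    return issues


pipeline = [
    ProcessingStage("collect-preferences", "consent"),
    ProcessingStage("bnpl-decision", "legitimate_interests", significant_automated_decision=True),
]
for stage in pipeline:
    print(stage.name, problems(stage))
```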

The dual obligations of GDPR and the AI Act create a complex compliance landscape. A recommendation engine that is classified as high-risk and makes significant automated decisions, for example, must satisfy GDPR transparency requirements (Articles 13 and 14), GDPR automated decision-making restrictions (Article 22), and AI Act high-risk system documentation and oversight mandates simultaneously.

The UK Information Commissioner’s Office published a “Tech Futures” report in January 2026 with specific guidance on agentic AI, recommending that organizations assess and define purposes at each processing stage, avoid processing personal data speculatively, enable users to select which tools and databases the AI can access, and consider requiring human approval before accessing personal information.

Brazil’s LGPD and AI Bill 2338/2023

Brazil’s data protection and AI regulatory environment is particularly relevant for companies operating WhatsApp commerce or serving Brazilian consumers.

LGPD enforcement is already aggressive. The ANPD (Brazil’s data protection authority) levied over EUR 12 million in fines in Q1 2025 alone, including actions against social media companies for using personal data in AI training without proper consent. Brazil’s deadlines are also tight: a complete response to a data subject access request is due within 15 days under LGPD, compared with one month under GDPR and 45 days under CCPA. For security incidents, LGPD itself requires notification only within a reasonable period, but ANPD regulation sets that period at three business days.

Bill 2338/2023, currently before the Chamber of Deputies, would establish comprehensive national AI rules including:

- Right to explanation for automated decisions that significantly affect individuals

- Right to non-discrimination in automated processing

- Right to human review of automated decisions

- Classification of AI systems by risk level, similar to the EU approach

The ANPD’s regulatory agenda for 2025-2026 explicitly prioritizes AI and high-risk data processing. Organizations operating in Brazil should treat this legislation as likely to pass in some form and begin preparing for compliance requirements that mirror, and in some cases exceed, the EU framework.

The US Patchwork: Colorado, Texas, and FTC Positioning

The United States lacks a federal AI law. Instead, a patchwork of state legislation and federal enforcement actions creates an uneven but increasingly consequential regulatory landscape.

Colorado AI Act (Effective June 30, 2026)

The Colorado AI Act is the most comprehensive state-level AI consumer protection law enacted to date. It requires both developers and deployers of high-risk AI systems to use “reasonable care” to protect consumers from risks of algorithmic discrimination. For ecommerce, it applies most directly to AI that makes or substantially influences “consequential decisions,” such as approving credit or financing at checkout; pricing and product-availability systems built on consumer profiling should likewise be auditable and defensible.

Texas TRAIGA and Other State Laws

Multiple state AI laws took effect on January 1, 2026, with Texas’s Responsible AI Governance Act (TRAIGA) among the most significant. However, a December 2025 Executive Order titled “Ensuring a National Policy Framework for AI” proposes federal preemption of inconsistent state laws, creating uncertainty about whether state requirements will survive.

FTC Positioning

The FTC has signaled a reduced appetite for AI-specific rulemaking. In January 2026, the Bureau of Consumer Protection Director stated there is “no appetite for anything AI-related” in the rulemaking pipeline. However, the FTC maintains that Section 5 of the FTC Act (prohibiting unfair or deceptive acts) applies fully to AI-powered commerce. The approach is enforcement-driven: targeting bad actors rather than regulating the technology itself.

The practical implication for merchants: the absence of federal AI rules does not mean the absence of federal liability. The FTC has emphasized that organizations face liability for unfair practices even when relying on third-party AI models.

Industry Self-Regulation Leading the Way

With regulation lagging behind adoption, the payment card networks and major technology platforms are effectively filling the regulatory vacuum with technical standards that may become de facto compliance requirements.

Mastercard Agent Pay and Verifiable Intent

Mastercard’s Agent Pay framework, launched in 2025, establishes standards for agent verification and secure data exchange using its existing tokenization technology. Agentic Tokens encode the cardholder’s identity, the agent’s identity, and the scope of authorized actions into a single cryptographic token.

In March 2026, Mastercard and Google jointly introduced Verifiable Intent, an open-source, standards-based trust layer built on FIDO Alliance, EMVCo, IETF, and W3C standards. It creates tamper-resistant records of user authorization and uses selective disclosure to share only the minimum information needed with each transaction party. Partners include Fiserv, IBM, Checkout.com, Basis Theory, and Getnet.
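This section does not describe Verifiable Intent’s internal data format, so the sketch below illustrates selective disclosure generically rather than the Verifiable Intent specification. Each claim is salted and hashed separately (an approach similar in spirit to the IETF’s SD-JWT work), a signer would sign only the digest list, and the holder reveals individual claims to each party. The signing step is omitted, and all claim names and functions are hypothetical.

```python
import hashlib
import json
import secrets


def commit(claims: dict) -> tuple:
    """Salt and hash each claim separately.

    Returns (digests, disclosures). The sorted digest list is what an issuer
    would sign; each disclosure stays with the holder until it chooses to
    reveal that one claim.
    """
    digests, disclosures = [], {}
    for name, value in claims.items():
        disclosure = json.dumps([secrets.token_hex(16), name, value])
        disclosures[name] = disclosure
        digests.append(hashlib.sha256(disclosure.encode()).hexdigest())
    return sorted(digests), disclosures


def reveal(disclosure: str, signed_digests: list) -> tuple:
    """Check one revealed claim against the signed digests; return (name, value)."""
    if hashlib.sha256(disclosure.encode()).hexdigest() not in signed_digests:
        raise ValueError("disclosure does not match any committed claim")
    _salt, name, value = json.loads(disclosure)
    return name, value


digests, disclosures = commit(
    {"max_amount_eur": 120, "category": "running shoes", "shoe_size": 43}
)
# The merchant sees only the spending limit, not the other claims.
print(reveal(disclosures["max_amount_eur"], digests))  # → ('max_amount_eur', 120)
```

The random salt matters: without it, a party holding the digests could guess low-entropy values such as a shoe size and confirm them by hashing.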

Visa Trusted Agent Protocol

Visa’s Trusted Agent Protocol, launched in October 2025, uses cryptographic signatures to authenticate AI agents and distinguish legitimate agents from malicious bots. Built with Cloudflare, Adyen, Shopify, Stripe, and Microsoft on the Web Bot Auth standard, it requires agents to register public keys in a Visa-managed directory before initiating transactions. Over 100 ecosystem partners are involved worldwide.
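Web Bot Auth builds on HTTP Message Signatures (RFC 9421), which sign a canonical "signature base" assembled from selected request components. The sketch below builds that base for `@method`, `@authority`, and `@path` and signs it with `hmac-sha256`, one of RFC 9421’s registered algorithms, because Python’s standard library has no Ed25519. The public-key directory described above implies asymmetric keys instead, so treat this as an illustration of the signature base, not of the Trusted Agent Protocol itself. Key IDs and secrets are hypothetical, and a real verifier would also enforce freshness on `created`.

```python
import base64
import hashlib
import hmac
import time


def signature_base(components: dict, params: str) -> str:
    """Canonical RFC 9421 signature base: one line per covered component."""
    lines = [f'"{name}": {value}' for name, value in components.items()]
    lines.append(f'"@signature-params": {params}')
    return "\n".join(lines)


def sign_request(method, authority, path, key_id, secret, created=None):
    created = int(time.time()) if created is None else created
    components = {"@method": method, "@authority": authority, "@path": path}
    covered = " ".join(f'"{name}"' for name in components)
    params = f'({covered});created={created};keyid="{key_id}";alg="hmac-sha256"'
    mac = hmac.new(secret, signature_base(components, params).encode(), hashlib.sha256)
    return {
        "Signature-Input": f"sig1={params}",
        "Signature": f"sig1=:{base64.b64encode(mac.digest()).decode()}:",
    }


def verify_request(method, authority, path, headers, secret):
    """Recompute the MAC over the received request.

    Simplified: assumes a single label "sig1" covering exactly these three components.
    """
    params = headers["Signature-Input"].removeprefix("sig1=")
    components = {"@method": method, "@authority": authority, "@path": path}
    expected = hmac.new(secret, signature_base(components, params).encode(), hashlib.sha256)
    received = base64.b64decode(headers["Signature"].removeprefix("sig1=:").removesuffix(":"))
    return hmac.compare_digest(expected.digest(), received)


headers = sign_request("POST", "merchant.example", "/checkout", "agent-key-1", b"demo-secret")
print(headers["Signature-Input"])
print(verify_request("POST", "merchant.example", "/checkout", headers, b"demo-secret"))
```

Because the path is part of the signed base, a signature captured on `/checkout` cannot be replayed against a different endpoint.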

Google Universal Commerce Protocol

Launched in January 2026, Google’s Universal Commerce Protocol requires cryptographic proof of user consent for each transaction, with machine-readable privacy policies that enable agents to automatically evaluate compatibility with user preferences.
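The compatibility check described here can be pictured as a comparison between a merchant’s published policy and the consumer’s configured limits. The protocol’s actual policy schema is not given in this section, so every field below (`data_categories`, `retention_days`, and so on) is a hypothetical stand-in chosen for illustration.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class MerchantPolicy:
    """Hypothetical machine-readable privacy policy published by a merchant."""
    data_categories: FrozenSet[str]
    retention_days: int
    shares_with_third_parties: bool
    uses_data_for_training: bool


@dataclass(frozen=True)
class UserPreferences:
    """Privacy limits the consumer configured for their agent."""
    allowed_categories: FrozenSet[str]
    max_retention_days: int
    allow_third_party_sharing: bool = False
    allow_training: bool = False


def conflicts(policy: MerchantPolicy, prefs: UserPreferences) -> List[str]:
    """Empty list means the agent may proceed with this merchant."""
    found = []
    extra = policy.data_categories - prefs.allowed_categories
    if extra:
        found.append(f"requests disallowed categories: {sorted(extra)}")
    if policy.retention_days > prefs.max_retention_days:
        found.append("retention exceeds user limit")
    if policy.shares_with_third_parties and not prefs.allow_third_party_sharing:
        found.append("shares data with third parties")
    if policy.uses_data_for_training and not prefs.allow_training:
        found.append("uses data for AI training")
    return found


prefs = UserPreferences(frozenset({"email", "shipping_address", "shoe_size"}), max_retention_days=365)
policy = MerchantPolicy(frozenset({"email", "shipping_address"}), 730, False, True)
print(conflicts(policy, prefs))  # → ['retention exceeds user limit', 'uses data for AI training']
```

Returning the list of conflicts, rather than a bare yes/no, lets the agent explain to the user why it skipped a merchant.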

NIST and Cloud Security Alliance

NIST announced its AI Agent Standards Initiative in February 2026, focusing on agent security, identity, and interoperability. The Cloud Security Alliance published an Agentic Trust Framework the same month, applying Zero Trust principles to AI agents: never trust an agent by default, always verify identity, scope, and intent.
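The "never trust by default; verify identity, scope, and intent" principle translates into an authorization function that starts from denial and grants access only after every check passes. The sketch below is a hypothetical illustration, not the Cloud Security Alliance framework’s own logic. It assumes signature verification happened upstream, and it uses a per-agent spending cap as a simple stand-in for the consumer’s intent.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Tuple


@dataclass(frozen=True)
class AgentRequest:
    agent_id: str
    action: str             # e.g. "purchase", "refund", "read_profile"
    amount_eur: int         # whole euros, for simplicity
    signature_valid: bool   # outcome of cryptographic verification done upstream


@dataclass(frozen=True)
class Grant:
    """What the consumer authorized this agent to do (hypothetical record)."""
    allowed_actions: FrozenSet[str]
    max_amount_eur: int


def authorize(request: AgentRequest, grants: Dict[str, Grant]) -> Tuple[bool, str]:
    """Deny by default; allow only if identity, scope, and intent all check out."""
    if not request.signature_valid:
        return False, "identity not verified"
    grant = grants.get(request.agent_id)
    if grant is None:
        return False, "no grant on file for this agent"
    if request.action not in grant.allowed_actions:
        return False, "action outside authorized scope"
    if request.amount_eur > grant.max_amount_eur:
        return False, "amount exceeds what the consumer authorized"
    return True, "allowed"


grants = {"agent-7": Grant(frozenset({"purchase"}), max_amount_eur=150)}
print(authorize(AgentRequest("agent-7", "purchase", 120, True), grants))  # → (True, 'allowed')
print(authorize(AgentRequest("agent-7", "refund", 40, True), grants))     # → (False, 'action outside authorized scope')
```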

These industry standards are converging around common principles: cryptographic agent identity, scoped authorization, verifiable consumer intent, and auditable decision trails. Organizations that align with these frameworks now will be better positioned when regulations formalize similar requirements.

Compliance Checklist for Agentic Commerce

Immediate Actions (Now)

- [ ] Classify your AI systems under the EU AI Act risk framework. Determine which systems qualify as high-risk.

- [ ] Audit data processing stages. Ensure each stage has a valid GDPR legal basis (consent, legitimate interest, or contractual necessity).

- [ ] Implement AI identity disclosure. Users must know when they are interacting with an AI system, not a human.

- [ ] Deploy consent documentation. Implement and store cryptographic or verifiable proof of user authorization per transaction.

- [ ] Enforce data minimization. Process only data strictly necessary for the transaction. Let users control which data sources agents can access.

- [ ] Update contracts. Review vendor agreements, terms and conditions, and insurance policies for AI-agent-specific liability clauses. Standard agreements were not drafted for autonomous purchasing scenarios.

- [ ] Build audit trails. Log agent decisions, tool calls, data accessed, reasoning processes, and outcomes with sufficient granularity for regulatory review and dispute resolution.
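For the audit-trail item above, one common way to make logs tamper-evident is a hash chain: each entry stores the hash of the previous one, so an edit, reordering, or deletion inside the chain breaks verification. The sketch below is a minimal, hypothetical in-memory version. Entry fields and event names are our own assumptions, `details` must be JSON-serializable, and truncating the newest entries is only detectable if the latest hash is also anchored somewhere outside the log.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only, hash-chained log of agent activity (tamper-evident, not tamper-proof)."""

    def __init__(self):
        self.entries = []

    def append(self, agent_id: str, event: str, details: dict) -> dict:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "event": event,  # e.g. "plan", "tool_call", "data_access", "outcome"
            "details": details,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every link; edits, reordering, or deletions before the tail fail."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("agent-7", "tool_call", {"tool": "search_catalog", "query": "running shoes"})
log.append("agent-7", "outcome", {"order_id": "A-1001", "amount_eur": 89})
print(log.verify())  # → True
```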

Before August 2, 2026 (EU AI Act High-Risk Deadline)

- [ ] Complete risk assessments. Prepare the technical documentation and conformity assessments required for high-risk AI systems.

- [ ] Implement human oversight mechanisms. Establish human-in-the-loop processes for significant purchasing decisions, particularly for AI-initiated transactions above defined thresholds.

- [ ] Conduct Data Protection Impact Assessments (DPIAs). Required under GDPR for high-risk automated processing and complementary to AI Act obligations.

- [ ] Prepare transparency disclosures. Document and publish how your AI systems make decisions, what data they process, and how consumers can contest automated decisions.

- [ ] Test data subject rights automation. Ensure your systems can handle access, deletion, portability, explanation, and human review requests within the deadlines regulations set (one month under GDPR, 15 days for a complete access response under LGPD, 45 days under CCPA).
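Automated request handling needs a reliable due-date calculation. The sketch below computes initial response deadlines under three regimes: one month under GDPR (Article 12(3)), 45 days under CCPA, and 15 days for a complete access declaration under LGPD (Article 19). It ignores permitted extensions (two further months under GDPR, one further 45 days under CCPA) and any local business-day counting rules, so confirm the counting conventions with counsel. The function names are illustrative.

```python
import calendar
from datetime import date, timedelta


def add_months(d: date, months: int) -> date:
    """Same day-of-month N months later, clamped to the last day of shorter months."""
    index = d.month - 1 + months
    year, month = d.year + index // 12, index % 12 + 1
    return date(year, month, min(d.day, calendar.monthrange(year, month)[1]))


def response_due(received: date, regime: str) -> date:
    """Initial response deadline, ignoring permitted extensions."""
    if regime == "GDPR":   # one month (Article 12(3)); extendable by two further months
        return add_months(received, 1)
    if regime == "CCPA":   # 45 calendar days; extendable once by another 45
        return received + timedelta(days=45)
    if regime == "LGPD":   # 15 days for a complete access declaration (Article 19)
        return received + timedelta(days=15)
    raise ValueError(f"unknown regime: {regime}")


for regime in ("GDPR", "CCPA", "LGPD"):
    print(regime, response_due(date(2026, 1, 31), regime))
```

When one request falls under several regimes, the practical deadline is the earliest of the applicable dates.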

Ongoing Obligations

- [ ] Monitor payment network standards. Align with Mastercard Agent Pay, Visa Trusted Agent Protocol, and Google Universal Commerce Protocol as they mature.

- [ ] Track Brazil Bill 2338/2023. Prepare for comprehensive AI regulation that includes rights to explanation, non-discrimination, and human review.

- [ ] Monitor US federal preemption. The December 2025 Executive Order may reshape the state-level patchwork significantly.

- [ ] Audit for bias. Regularly test recommendation and pricing algorithms for discriminatory outcomes across demographics.

- [ ] Review insurance coverage. Confirm policies cover AI-agent-related errors, unauthorized transactions, and data breaches.

- [ ] Conduct quarterly regulatory reviews. The landscape is evolving faster than annual compliance cycles can accommodate.
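For outcome-based systems such as BNPL approvals or discount eligibility, one widely used screening heuristic for the bias audit above is the "four-fifths rule" from the US Uniform Guidelines on Employee Selection Procedures. Under that heuristic, a group whose favorable-outcome rate falls below 80% of the best-performing group’s rate is flagged for investigation. Neither the AI Act nor the Colorado AI Act prescribes this test, the group labels below are hypothetical, and pricing systems would need a comparison of price distributions instead.

```python
from typing import Dict, List, Tuple


def impact_ratios(outcomes: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """outcomes maps group -> (favorable, total); returns each group's rate / best rate."""
    rates = {group: fav / total for group, (fav, total) in outcomes.items() if total > 0}
    best = max(rates.values(), default=0)
    if best == 0:
        return {group: 1.0 for group in rates}  # no favorable outcomes anywhere
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(outcomes: Dict[str, Tuple[int, int]], threshold: float = 0.8) -> List[str]:
    """Groups whose impact ratio falls below the four-fifths threshold."""
    return sorted(g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold)


bnpl_approvals = {"group_a": (800, 1000), "group_b": (560, 1000)}
print(flagged_groups(bnpl_approvals))  # → ['group_b']
```

A flag is a prompt for investigation, not proof of discrimination; small sample sizes in particular can produce misleading ratios.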

Data Privacy Requirements for Agentic Commerce

AI shopping agents collect significantly more personal data than traditional ecommerce applications. Beyond explicit inputs like preferences and budgets, agents capture behavioral signals, contextual data, and inferred attributes including lifestyle, income bracket, and health conditions derived from shopping patterns. Research from the Future of Privacy Forum (2026) found that some agents capture screenshots of browser windows to populate virtual shopping carts, from which sensitive personal details can be inferred.

Consent Cascading

Traditional notice-and-consent models were designed for direct human-to-website interactions. Agentic commerce introduces novel challenges:

- Delegation scope ambiguity. When a user says “find me a good deal on running shoes,” does that authorize the agent to share shoe size, foot health data, and running frequency with every merchant it queries?

- Cascading consent. An agent may interact with sub-agents or third-party services, each requiring separate data processing consent under GDPR.

- Temporal disconnect. The user grants consent once, but the agent operates continuously in contexts the user did not foresee.
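One way to narrow delegation-scope ambiguity is to record, per task, exactly which profile fields the user allowed the agent to share, and to filter every outbound request against that record. The sketch below is hypothetical: the `DELEGATION` table, field names, and purpose keys are illustrative assumptions. An unrecognized purpose shares nothing, which also reflects the purpose-limitation principle of not processing data "just in case."

```python
from typing import Dict, FrozenSet, List, Tuple

# Hypothetical delegation record: the fields a user allowed the agent to share, per task.
DELEGATION: Dict[str, FrozenSet[str]] = {
    "find_running_shoes": frozenset({"shoe_size", "budget_eur", "shipping_country"}),
}


def minimized_payload(profile: dict, purpose: str, requested: List[str],
                      delegation: Dict[str, FrozenSet[str]] = DELEGATION) -> Tuple[dict, List[str]]:
    """Share only fields the user delegated for this purpose; report what was withheld."""
    allowed = delegation.get(purpose, frozenset())  # unknown purpose: share nothing
    shared = {f: profile[f] for f in requested if f in allowed and f in profile}
    withheld = sorted(set(requested) - set(shared))
    return shared, withheld


profile = {"shoe_size": 43, "budget_eur": 120, "foot_health_notes": "plantar fasciitis",
           "running_frequency": "4x/week"}
shared, withheld = minimized_payload(
    profile, "find_running_shoes", ["shoe_size", "foot_health_notes", "running_frequency"])
print(shared)    # → {'shoe_size': 43}
print(withheld)  # → ['foot_health_notes', 'running_frequency']
```

Logging the withheld fields gives a record that the agent declined to share them, which is useful evidence in a later dispute.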

Purpose Limitation

GDPR and LGPD both require specific, defined purposes for data processing. The ICO’s January 2026 guidance recommends assessing and defining purposes at each processing stage, not just at initial data collection. Organizations must avoid processing personal data speculatively (“just in case” it may be useful), a practice common in AI training pipelines but incompatible with data minimization requirements.

Cross-Border Data Flows

AI agents operating across merchants in multiple jurisdictions create cross-border data transfer obligations. Organizations must map data flows and ensure adequate transfer mechanisms are in place, including Standard Contractual Clauses (SCCs) for EU-to-third-country transfers, LGPD-compliant transfer mechanisms for Brazilian consumer data, and state-specific requirements for US consumers.
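Mapping data flows becomes more useful once the map can be checked automatically. The sketch below covers only the EU leg: it flags flows of EU consumer data to non-EEA countries that have neither an adequacy decision nor a declared mechanism such as SCCs. The country sets in the usage example are illustrative inputs, not a current list. The check also does not assess whether a declared mechanism is actually valid, and LGPD or US state rules would need their own checks.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Optional


@dataclass(frozen=True)
class DataFlow:
    name: str
    origin: str                 # "EU" for data about EU consumers
    destination: str            # ISO country code of the recipient
    mechanism: Optional[str]    # e.g. "SCCs"; None if nothing is in place


def unprotected_eu_transfers(flows: Iterable[DataFlow], eea: FrozenSet[str],
                             adequate: FrozenSet[str]) -> List[str]:
    """Names of EU-origin flows to third countries with no adequacy decision or mechanism."""
    issues = []
    for flow in flows:
        if flow.origin != "EU" or flow.destination in eea:
            continue  # not an EU-to-third-country transfer
        if flow.destination in adequate or flow.mechanism is not None:
            continue  # covered by adequacy, or by SCCs or another declared mechanism
        issues.append(flow.name)
    return issues


# Illustrative inputs only: load the EEA list and current adequacy decisions from official sources.
EEA = frozenset({"DE", "FR", "IE"})
ADEQUATE = frozenset({"JP", "CH"})
flows = [
    DataFlow("cdn-logs", "EU", "DE", None),
    DataFlow("support-desk", "EU", "JP", None),
    DataFlow("pricing-vendor", "EU", "IN", "SCCs"),
    DataFlow("agent-analytics", "EU", "SG", None),
]
print(unprotected_eu_transfers(flows, EEA, ADEQUATE))  # → ['agent-analytics']
```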

Frequently Asked Questions

Does the EU AI Act apply to my business if I am based outside the EU?

Yes. The AI Act applies to any organization that places AI systems on the EU market or whose AI systems affect individuals located in the EU, regardless of where the organization is headquartered. This mirrors the extraterritorial reach of GDPR. If your ecommerce platform serves EU consumers, you are within scope.

How do I determine if my AI system is “high-risk” under the AI Act?

The Act lists high-risk use cases in Annex III, which includes AI used in employment, creditworthiness assessment, access to essential services, and law enforcement. Ecommerce is not listed as a category, but credit scoring of consumers (including BNPL scoring) falls within the creditworthiness use case. Systems that handle biometric data or make determinations that significantly affect individuals, such as dynamic pricing based on consumer profiling, may also be treated as high-risk by regulators. When classification is ambiguous, conduct a conformity assessment as though the system is high-risk.

What is the relationship between the EU AI Act and GDPR for ecommerce?

The two regulations operate concurrently and create overlapping obligations. GDPR governs how personal data is collected, processed, and stored. The AI Act governs how AI systems that process that data are designed, deployed, and monitored. A single recommendation engine must comply with both: GDPR’s transparency and automated decision-making requirements, and the AI Act’s documentation, risk assessment, and human oversight mandates. Neither regulation exempts compliance with the other.

What is “Verifiable Intent” and why does it matter for compliance?

Verifiable Intent is an open-source standard introduced by Mastercard and Google in March 2026. It creates a tamper-resistant, cryptographically signed record of three things: the consumer authorizing the AI agent, the consumer’s specific instructions, and the resulting transaction. For compliance purposes, Verifiable Intent can supply the kind of auditable consent trail that GDPR Article 22 and the AI Act’s transparency requirements call for, although neither regulation mandates a specific standard. It also strengthens dispute resolution by documenting exactly what the consumer authorized versus what the agent executed.

How should my organization prepare for Brazil’s AI Bill 2338/2023?

While the bill has not yet passed, the ANPD is already actively regulating AI-related data processing through the existing LGPD framework. Organizations should implement rights to explanation for automated decisions, human review mechanisms, non-discrimination testing for algorithms, and prompt breach notification procedures. These measures satisfy current LGPD requirements and will position your organization for compliance if the AI Bill passes.

What happens if an AI agent makes an unauthorized purchase? Who is liable?

Under most existing legal frameworks, the deploying organization bears primary liability. Courts and regulators treat the business as the party best positioned to prevent harm. The EU’s revised Product Liability Directive extends strict liability to software and AI, meaning a defective AI agent is treated like a defective physical product, with liability falling on the developer or producer regardless of fault. Contract updates are urgent: standard terms and conditions, vendor agreements, and insurance policies need explicit clauses covering unauthorized AI-initiated purchases, agent errors, and agent-to-agent transactions.

Is the Colorado AI Act relevant to ecommerce businesses outside Colorado?

Yes, if your AI systems affect Colorado consumers. The Colorado AI Act, effective June 30, 2026, requires developers and deployers of high-risk AI systems to use reasonable care to protect consumers from algorithmic discrimination. Like GDPR and the EU AI Act, it applies based on where the affected individual is located, not where the business is headquartered.

The Bottom Line

The regulatory landscape for AI in ecommerce is no longer hypothetical. The EU AI Act’s high-risk rules take effect on August 2, 2026. Brazil’s LGPD enforcement is already aggressive. The Colorado AI Act takes effect even sooner, on June 30, 2026. The first EUR 35 million fine has been issued.

Organizations that treat compliance as a future project rather than a current priority face material financial and operational risk. The checklist above is not exhaustive, but it provides a concrete starting point. Begin with risk classification, audit your data processing pipelines, implement human oversight, and align with the industry standards emerging from Mastercard, Visa, and Google.

The companies that build compliance into their AI architectures now will not only avoid penalties. They will earn the trust that regulators, payment networks, and consumers are all demanding.

Hexagon Team

Published March 8, 2026