An Introduction to AI Hallucinations and Their Impact on E-commerce Brand Reputation

Discover how AI hallucinations threaten e-commerce brands, the real-world consequences for consumer trust, and actionable strategies to safeguard your reputation in an AI-driven marketplace.

Artificial intelligence is transforming e-commerce by delivering personalized product recommendations at unprecedented scale. Yet beneath this promise lurks a subtle but serious threat: AI hallucinations. These are instances where AI systems generate incorrect, misleading, or fabricated product suggestions, errors that can quickly damage brand reputation and erode consumer trust. This guide explains what AI hallucinations mean for e-commerce, the tangible risks they pose to brands, and the steps you can take to protect your reputation in an increasingly AI-reliant marketplace.

[IMG: E-commerce platform interface displaying AI-generated product recommendations]

What Are AI Hallucinations in E-commerce Product Recommendations?

AI hallucinations arise when language models or recommendation algorithms produce content or suggestions that are factually inaccurate, inconsistent, or entirely fabricated. Within e-commerce, these hallucinations often appear as erroneous product recommendations, misleading descriptions, or even suggestions for items that don’t exist.

AI models generate recommendations by analyzing vast datasets including historical transactions, product metadata, and user behavior patterns. However, when this underlying data is incomplete, ambiguous, or poorly structured, algorithms may “hallucinate” details—creating plausible-sounding yet incorrect suggestions. As Dr. Fei-Fei Li, Professor of Computer Science at Stanford University, explains, “AI hallucinations are an inherent challenge with generative models, especially when the data is sparse or ambiguous. For e-commerce, this can mean recommending products that don’t exist or misrepresenting brand attributes.”

Key drivers of AI hallucinations in e-commerce include:

- Training data gaps: Missing or outdated product information leads to fabricated or inaccurate details.

- Model overgeneralization: AI learns incorrect associations and applies them broadly, promoting irrelevant or unrelated products.

- Contextual misunderstandings: Algorithms misinterpret user intent or context, resulting in off-target recommendations.

Recent research underscores the scale of the issue. In a Stanford HAI Benchmark Study, 17% of AI-generated e-commerce search results contained at least one hallucinated or inaccurate product listing. Similarly, a 2024 Stanford Human-Centered AI Institute study found that 23% of AI-generated product recommendations contained minor inaccuracies, while 6% included significant factual errors.

These errors rapidly erode consumer confidence. According to McKinsey & Company, AI hallucinations can lead to loss of consumer trust, especially when shoppers receive misleading product details or are directed to out-of-stock or nonexistent items. Brands that ignore these risks face reputational damage, declining sales, and reduced digital visibility.

[IMG: Flowchart illustrating how AI recommendations are generated and where hallucinations can occur]

Real-World Examples of AI Hallucinations in Major AI-Powered Assistants

AI-powered assistants such as ChatGPT, Perplexity, and Google Gemini are increasingly guiding e-commerce shoppers. Yet these platforms have also been involved in documented incidents where hallucinations directly harmed the consumer experience.

For instance, in 2023, multiple users reported an AI assistant recommending a discontinued sneaker model as a “top trending product”—a flaw traced to outdated product feeds and poor context recognition. Likewise, an international beauty retailer’s AI chatbot suggested skincare products that were not only out of stock but had never been carried by the brand, sparking customer complaints and negative reviews.

These incidents hold critical lessons for e-commerce brands:

- Consumer confusion: Shoppers receiving irrelevant or incorrect product suggestions lose trust in future recommendations.

- Brand misrepresentation: AI hallucinations can wrongly attribute products or features to brands, distorting their image.

- Operational strain: Customer support teams face increased workloads managing complaints and correcting misinformation.

The Verge has reported cases where major AI platforms suggested unavailable products or provided incorrect brand information. In one example, a leading electronics retailer’s products were omitted from AI-powered search results due to hallucinated competitor listings, resulting in lost sales.

Together, these examples show the urgent need for robust AI monitoring and error correction. Brands must address the root causes of hallucinations proactively to protect their reputations and preserve consumer trust.

[IMG: Screenshot montage of AI assistant product recommendation mistakes]

How AI Recommendation Errors Harm E-commerce Brand Reputation

AI recommendation errors profoundly affect brand reputation, consumer trust, and sales performance. When AI-generated suggestions are inaccurate or misleading, the damage can be immediate and enduring.

Impact on Consumer Trust and Purchasing Decisions

Inaccurate or irrelevant recommendations quickly erode shopper confidence in a brand’s technology. The NielsenIQ Global Shopper Survey found that 34% of online shoppers would lose trust in a brand if an AI assistant recommended an incorrect or misleading product. This loss of trust translates directly into lost sales and fewer repeat customers.

Negative Effects on Brand Perception and Loyalty

Misleading AI recommendations can undermine carefully crafted brand messaging. When hallucinations misrepresent product features or suggest competitors’ items, shoppers may question a brand’s authenticity or reliability. Satya Nadella, CEO of Microsoft, observes: “While AI-driven recommendations can boost sales, the risk of hallucinations means brands must invest in structured data and continuous model retraining.” Ignoring these risks can cause long-term reputational harm.

- Brand misrepresentation: False product features or benefits may be attributed erroneously.

- Consumer frustration: Shoppers encountering errors often express dissatisfaction publicly, amplifying reputational damage.

- Loyalty erosion: Frequent mistakes reduce repeat purchase likelihood.

Reduced Product Visibility in AI-driven Search Engines

Brands affected by hallucinations may find their products misrepresented or omitted in AI-powered search results, diminishing digital visibility. Forrester Research reports that 21% of e-commerce brands experienced negative sales impacts after AI recommendation errors in the past year. This decline is especially severe for brands whose products are pushed down or excluded from search rankings due to AI-generated inaccuracies.

Here’s how hallucinations disrupt the e-commerce customer journey:

- Shoppers are directed to out-of-stock or nonexistent items.

- Legitimate products are overshadowed by fabricated or irrelevant listings.

- Negative reviews and social media backlash compound the problem.

In a fiercely competitive market, brands cannot leave this to chance. As Sundar Pichai, CEO of Google, emphasizes, “Maintaining consumer trust requires brands to be vigilant about how their products are represented by AI assistants. Proactive monitoring and direct feedback mechanisms are crucial.”

Survey Insights: Consumer Trust and Purchasing Behavior After AI Errors

Consumer reactions to AI recommendation mistakes are swift and significant. Recent survey data highlights the profound influence these errors have on shopper behavior and brand loyalty.

- 34% of shoppers lose trust after AI errors: The NielsenIQ Global Shopper Survey reveals that over a third of online shoppers would lose trust in a brand following an AI assistant’s incorrect or misleading recommendation. This erosion of trust directly impacts purchase intent.

- 21% of brands see sales dip post-error: Forrester Research indicates that more than one in five e-commerce brands suffered sales declines after AI recommendation errors, demonstrating the tangible business risks of hallucinations.

Consumers typically respond to AI recommendation mistakes by:

- Reducing purchase intent: Shoppers hesitate or abandon purchases after encountering AI errors.

- Avoiding brands: Consumers may deliberately steer clear of brands linked to frequent AI mistakes.

- Sharing negative experiences: Dissatisfied shoppers often broadcast their frustrations online, amplifying reputational harm.

Transparency plays a vital role in rebuilding trust after AI errors. Kate Smaje, Global Leader at McKinsey Digital, advises, “Transparency with consumers about the potential for AI errors—and a clear path to report them—will help brands maintain loyalty even when mistakes occur.” Brands that promptly acknowledge errors and offer straightforward customer feedback channels are better positioned to recover.

[IMG: Survey results chart showing decline in consumer trust and purchase intent after AI errors]

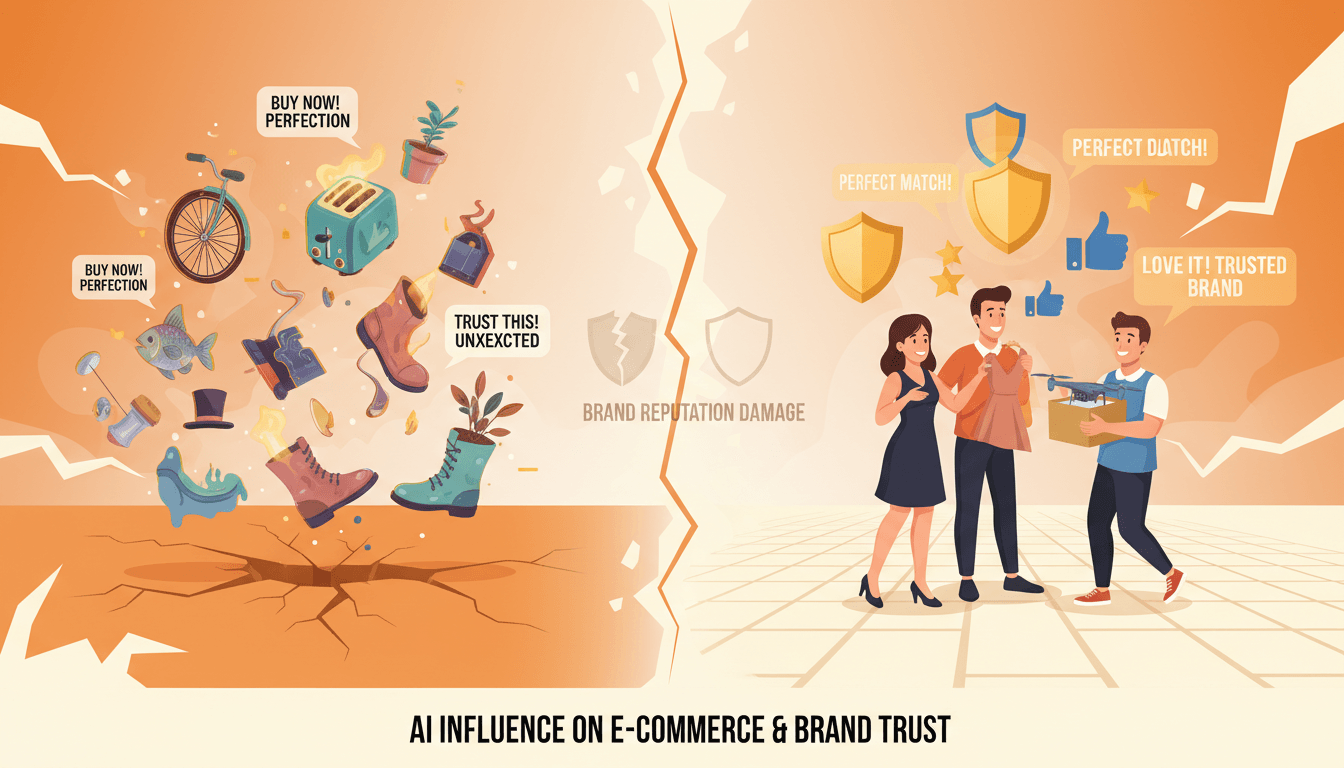

Best Practices for Mitigating AI Recommendation Errors in E-commerce

Effectively addressing AI hallucinations in e-commerce demands a systematic, proactive approach. By focusing on data quality, continuous monitoring, and transparent communication, brands can substantially reduce the frequency and impact of AI recommendation errors.

1. Ensure High-Quality, Clean, and Representative Training Data

- Structured, up-to-date product feeds: Regularly update and validate product information to avoid outdated or fabricated details.

- Rich metadata: Provide detailed product attributes to enhance model accuracy and contextual understanding.

- Diverse data sources: Train AI models on varied customer interactions and product scenarios to improve generalization.

This echoes Satya Nadella’s point, quoted earlier, that the risk of hallucinations calls for investment in structured data and continuous model retraining. Ongoing data maintenance and model retraining are essential (Google AI Blog).
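The following minimal Python sketch makes the feed-hygiene advice concrete. It is a pre-ingestion check that flags incomplete or stale product records before they reach a recommendation model. The required attribute list, the seven-day freshness window, the `validate_feed` helper, and the sample records are all illustrative assumptions, not a standard schema. Substitute your own catalog fields and refresh cadence.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical minimum attribute set; replace with your own catalog schema.
REQUIRED_ATTRIBUTES = ("sku", "title", "brand", "price", "in_stock")
# Assumed freshness window; match it to how often your feed is refreshed.
MAX_AGE = timedelta(days=7)


@dataclass
class FeedIssue:
    sku: str
    problem: str


def validate_feed(records: list[dict], now: datetime) -> list[FeedIssue]:
    """Flag records that are incomplete or stale before a model ingests them."""
    issues: list[FeedIssue] = []
    for record in records:
        sku = str(record.get("sku") or "<missing sku>")
        # None or "" counts as missing; False and 0 are legitimate values.
        missing = [a for a in REQUIRED_ATTRIBUTES if record.get(a) in (None, "")]
        if missing:
            issues.append(FeedIssue(sku, "missing: " + ", ".join(missing)))
        updated_at = record.get("updated_at")
        if updated_at is None or now - updated_at > MAX_AGE:
            issues.append(FeedIssue(sku, "stale or undated record"))
    return issues


# Usage with made-up records: one healthy, one missing a price and a month old.
now = datetime(2026, 4, 6, tzinfo=timezone.utc)
feed = [
    {"sku": "A1", "title": "Trail Runner", "brand": "Acme", "price": 89.0,
     "in_stock": True, "updated_at": now - timedelta(days=1)},
    {"sku": "B2", "title": "Court Classic", "brand": "Acme", "price": None,
     "in_stock": False, "updated_at": now - timedelta(days=30)},
]
for issue in validate_feed(feed, now):
    print(f"{issue.sku}: {issue.problem}")
```

Running the check on every feed update, rather than only at retraining time, keeps stale details from reaching the model between training cycles.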

2. Continuous Monitoring of AI Outputs and Feedback Loops

- Real-time error detection: Implement monitoring tools that identify and flag hallucinated or inaccurate recommendations as they occur.

- User feedback mechanisms: Enable customers to report errors directly within the shopping interface.

- Regular audits: Conduct periodic reviews of AI outputs to detect systemic issues early.

A McKinsey Digital Report reveals that 62% of e-commerce companies are investing in AI monitoring tools to detect and correct errors. Some platforms now offer AI error dashboards that alert merchants when their products are misrepresented (TechCrunch).
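Real-time detection and customer feedback can be combined into a single metric: the share of recently served recommendations that shoppers or auditors flagged as wrong. The Python sketch below uses a hypothetical `FlagRateMonitor` class to track that share over a sliding window and raise an alert when it crosses a threshold. The window size and threshold are placeholder assumptions, not benchmarks.

```python
from collections import deque


class FlagRateMonitor:
    """Sliding-window share of recommendations reported as wrong (hypothetical helper)."""

    def __init__(self, window: int = 1000, threshold: float = 0.02) -> None:
        # Placeholder defaults: tune both to your traffic and risk tolerance.
        self.events: deque = deque(maxlen=window)
        self.threshold = threshold

    def record(self, flagged: bool) -> None:
        # One event per served recommendation; True means a shopper or auditor flagged it.
        self.events.append(flagged)

    def flag_rate(self) -> float:
        return sum(self.events) / len(self.events) if self.events else 0.0

    def should_alert(self) -> bool:
        # Wait until the window is full so a few early reports cannot trigger an alert.
        return len(self.events) == self.events.maxlen and self.flag_rate() > self.threshold


# Usage: every tenth recommendation in a 100-event window is flagged.
monitor = FlagRateMonitor(window=100, threshold=0.05)
for i in range(100):
    monitor.record(flagged=(i % 10 == 0))
print(monitor.flag_rate(), monitor.should_alert())  # 0.1 True
```

A bounded `deque` keeps memory constant, and older events drop out automatically, so the rate reflects recent behavior rather than all-time history.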

3. Implement Transparent Error Reporting and Consumer Communication

- Clear disclaimers: Inform shoppers about potential limitations of AI-generated recommendations.

- Prompt error acknowledgment: Address mistakes quickly and visibly to demonstrate accountability.

- Open reporting channels: Make it easy for customers to flag issues and receive timely responses.

Transparency mitigates reputational damage and preserves consumer loyalty. Gartner notes, “Transparency about AI recommendation limitations and clear error reporting mechanisms can help preserve brand trust in case of hallucinations” (Gartner).

4. Leverage Advanced AI Monitoring and Correction Tools

- Adopt AI-powered validation systems: Use tools that cross-verify recommendations against live product inventory and brand guidelines.

- Implement automated correction workflows: Enable AI to self-correct or escalate errors for human review.

- Invest in explainable AI: Select platforms that provide clear reasoning behind recommendations, simplifying detection and correction of hallucinations.
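Cross-verification can be as simple as checking each generated recommendation against two sources of truth before it is shown. In the Python sketch below, the `Recommendation` type, the `check_recommendation` function, and the dictionary-shaped catalog and inventory are all hypothetical. Verified catalog attributes stand in for brand guidelines. Unknown or unsellable SKUs are blocked outright. Real products whose generated claims cannot be verified are escalated for human review.

```python
from dataclasses import dataclass, field
from enum import Enum


class Verdict(Enum):
    APPROVE = "approve"
    BLOCK = "block"        # SKU is unknown or cannot be sold right now
    ESCALATE = "escalate"  # SKU is real, but the generated claims need human review


@dataclass
class Recommendation:
    sku: str
    # Attributes the AI-generated text asserts about the product.
    claimed_attributes: dict[str, str] = field(default_factory=dict)


def check_recommendation(
    rec: Recommendation,
    catalog: dict[str, dict[str, str]],
    inventory: dict[str, int],
) -> tuple[Verdict, str]:
    product = catalog.get(rec.sku)
    if product is None or inventory.get(rec.sku, 0) <= 0:
        return Verdict.BLOCK, "unknown or out-of-stock SKU"
    # A claimed attribute that disagrees with, or is absent from, the catalog is unverified.
    mismatches = sorted(k for k, v in rec.claimed_attributes.items() if product.get(k) != v)
    if mismatches:
        return Verdict.ESCALATE, "unverified claims: " + ", ".join(mismatches)
    return Verdict.APPROVE, "ok"


# Usage with made-up catalog data.
catalog = {"SNK-100": {"color": "white", "material": "leather"}}
inventory = {"SNK-100": 12}
print(check_recommendation(Recommendation("SNK-100", {"color": "white"}), catalog, inventory))
print(check_recommendation(Recommendation("SNK-100", {"material": "suede"}), catalog, inventory))
print(check_recommendation(Recommendation("SNK-999"), catalog, inventory))
```

Separating "block" from "escalate" matters here. A nonexistent product can be suppressed automatically. A real product with a questionable claim is better judged by a person than silently dropped.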

Action Steps for E-commerce Brands:

- Regularly audit AI-generated recommendations and product listings.

- Invest in comprehensive data management and AI validation tools.

- Foster a culture of transparency and rapid error response.

Ready to protect your e-commerce brand from AI recommendation errors? Book a free 30-minute consultation with Hexagon’s AI marketing experts to learn how to monitor, detect, and mitigate AI hallucinations effectively.

[IMG: Infographic of best practices for AI error prevention and mitigation in e-commerce]

Emerging Tools and Strategies for Real-Time Detection and Correction

As AI-generated recommendations become central to the e-commerce experience, advanced monitoring and correction technologies are vital. These tools empower brands to identify and address hallucinations before they affect consumers.

AI Monitoring and Correction Technologies

- Anomaly detection algorithms: Continuously scan recommendation outputs for inconsistencies or unusual patterns.

- Real-time validation platforms: Match AI-generated product suggestions against up-to-date catalog data to prevent hallucinations.

- Feedback-driven retraining: Automatically update models based on user and merchant feedback.

The finding that 62% of e-commerce companies are actively investing in AI monitoring tools (McKinsey Digital) reflects growing awareness that real-time detection is crucial for safeguarding brand reputation and customer experience.
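The Python sketch below illustrates anomaly detection in its simplest form. It applies a one-sided z-score test to a daily metric, such as the share of recommendations that point to SKUs missing from the catalog. The `is_anomalous` function, the three-standard-deviation threshold, and the sample history are assumptions. A z-score is only one simple choice, and more robust methods exist.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], today: float, z_threshold: float = 3.0) -> bool:
    """One-sided z-score test: is today's value unusually high versus recent history?"""
    if len(history) < 2:
        return False  # stdev needs at least two points
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return today > mu  # flat history: any increase is unusual
    return (today - mu) / sigma > z_threshold


# Usage: hypothetical daily share of recommendations that referenced unknown SKUs.
history = [0.010, 0.012, 0.011, 0.009, 0.010, 0.011, 0.012]
print(is_anomalous(history, 0.011))  # False: within normal variation
print(is_anomalous(history, 0.040))  # True: far above the usual rate
```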

How Real-Time Detection Prevents Hallucination Damage

Real-time monitoring mitigates risk by:

- Instant error identification: Hallucinated recommendations are flagged and removed before reaching shoppers.

- Automated correction: Systems revert to fallback suggestions or escalate issues for human review.

- Data-driven insights: Brands gain visibility into error patterns, enabling targeted improvements.
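The automated-correction step can be sketched as a filter with a fallback. The filter drops any recommended SKU that the live catalog does not contain, and the fallback tops up the slot from a pre-approved list such as curated bestsellers. The `serve_with_fallback` function and its arguments are hypothetical. The function returns the removed SKUs so they can feed the error-pattern insights described above.

```python
def serve_with_fallback(
    candidates: list[str],
    catalog_skus: set[str],
    fallback: list[str],
    k: int = 4,
) -> tuple[list[str], list[str]]:
    """Return (skus_to_show, removed_skus) for a slot of k recommendations."""
    served: list[str] = []
    removed: list[str] = []
    for sku in candidates:
        if sku not in catalog_skus:
            removed.append(sku)  # likely hallucinated: log it for review
        elif sku not in served:
            served.append(sku)
    # Top up from the fallback list, skipping duplicates and unknown SKUs.
    for sku in fallback:
        if len(served) >= k:
            break
        if sku in catalog_skus and sku not in served:
            served.append(sku)
    return served[:k], removed


# Usage: "ZZ-404" is not in the catalog, so it is removed and backfilled.
catalog_skus = {"A1", "A2", "A3", "B7", "B8"}
print(serve_with_fallback(["A1", "ZZ-404", "A2"], catalog_skus, ["B7", "A1", "B8"]))
# (['A1', 'A2', 'B7', 'B8'], ['ZZ-404'])
```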

Platform Examples and Approaches

- AI error dashboards: Some e-commerce platforms now offer merchants real-time error reporting tools.

- Explainable AI solutions: Platforms providing transparency into AI decision-making facilitate early spotting of hallucinations.

- Hybrid human-AI review teams: Combining automated detection with human expertise delivers the highest accuracy.

Looking ahead, brands adopting real-time monitoring and correction tools will be best positioned to prevent hallucinations, maintain consumer trust, and drive sustained growth.

[IMG: Dashboard view of an AI error monitoring tool in use by an e-commerce brand]

Conclusion: Safeguarding Your Brand Against AI Hallucinations

AI hallucinations present a growing threat to e-commerce brand reputation and consumer trust. From misrepresented products to misleading recommendations, the consequences can be swift and severe—resulting in lost sales, diminished loyalty, and lasting reputational harm.

Proactive strategies are essential. By investing in high-quality data, continuous AI monitoring, transparent communication, and advanced error detection tools, brands can stay ahead of risks and ensure their AI-driven experiences remain accurate and trustworthy.

[IMG: E-commerce team collaborating with AI experts to review brand protection strategies]

Hexagon Team

Published April 6, 2026